Social Statistics with R

I dedicate this book to my parents — to my late and beloved mother, who gave me love, life, and light, and to my dear father, whose warmth and kindness are the fount from which my spirit is sustained.

1 Chapter 1: Introduction

Welcome!

This book is based on my lecture notes for the Social Statistics courses that I have taught since 2014 at various institutions, including New York University, the University of Wisconsin–Madison, and the University of Pennsylvania. At NYU, I served as a statistics tutor while pursuing a master’s degree in economics and sociology. During my doctoral studies at the University of Wisconsin–Madison, I was a teaching assistant for several statistics courses offered by the Sociology and Statistics Departments. Toward the end of the Ph.D. program, I also served as the instructor of record for the Social Statistics course. At the University of Pennsylvania, during my postdoctoral fellowship, I taught a capstone data science course focusing on machine learning techniques for master’s students.

Since joining the University of California, Santa Barbara, as a faculty member in the Department of Sociology, I have decided to compile my lecture notes into a publicly accessible, user-friendly book with integrated R-Studio software applications—one that other instructors can readily use for their own courses.Over the past few years, I felt there was a clear need for a book that presents R code in a user-friendly way, so I decided put to gether this manuscript. It must be noted that the book will go thourgh many iterations in the next couple of years for refinement and improvement. To ensure consistency in statistical formulas and in the course of preparing this online book manuscript, I have also relied on a number of references, which I have detailed at the end of book.

It is my hope that this book proves useful to both instructors and students who teach and learn in large undergraduate courses in social statistics—courses that demand both conceptual clarity and practical applications of statistical learning with a widely used software such as R-Studio. The materials assembled here are intended to be user-friendly and accessible, to provide a coherent framework for teaching statistical reasoning in the social sciences, and to encourage students to view data not as an abstraction but as a language through which social realities can be understood and examined. As such, most of the examples are social scientific in nature. It is made available online prior to its final print publication, and I intend for it to pass through several iterations of refinement. I welcome all forms of feedback from colleagues across institutions worldwide.

Statistical Learning

Statistical tools are the methodological backbone of modern quantitative social science. They enable us to organize, analyze, and translate complex data about the world into sensible and measurable insights. The sociological tradition often seeks to quantify disparities in income, wealth, health, education, social capital, cultural capital, and civic participation among others to delineate how individuals are stratified or grouped into distinct “strata.” Statistical learning makes it possible for social and natural scientists to test hypotheses whose findings are widely applicable and generalizable. Social scientists employ tools such as surveys to glean information about economic, social, and political spheres of life. The data from those surveys are cleaned and analyzed, a set of hypotheses are generated, and the results of those analyses are disseminated.

Advances in the social sciences, much like in natural science and engineering, are propelled by the systematic collection and analysis of data. Yet social phenomena are inherently dynamic and variable: the same survey, experiment, or observational study can produce different outcomes even under different conditional backgrounds. This variability arises not only from the complex interplay of human behavior, institutions, and environments. In this context, social statistics serves as the critical toolkit for navigating uncertainty, enabling researchers to detect meaningful patterns amid individuals and contextual differences.

Because social data are often complex and multidimensional, the proper design and analysis of studies demand rigorous statistical methods. Social statistics thus equip scholars to create valid sampling strategies, develop robust estimation models, and apply techniques that distinguish ‘effects’ from random variation or bias. By doing so, it allows researchers to draw reliable and generalizable conclusions about the social world. Statistical learning therefore allows social scientists to delineate broad trends in important issues—issues such as gender inequality, income mobility, poverty, unemployment, homelessness, political partisanship, and civic participation among others—and hopefully, for policy-makers to pay attention in order to mitigate them. In short, social statistics is indispensable for transforming messy social realities into concrete evidence that advances knowledge and informs decisions at the highest levels of research and governance.

With the rise of information technology and the unprecedented availability of high-quality data, we are now able to study the social world in ways that were once unimaginable. Fluency in statistical learning has therefore become essential—not only for methodological rigor but also for professional versatility across disciplines and industries. Methodologically, it enables us to design stronger studies, manage large and complex data sets, and apply advanced analytical techniques that enhance the credibility and generalizability of our findings. Mastery of social statistics allows us to draw defensible conclusions, engage meaningfully in interdisciplinary debates, inform public policy, and contribute to the cumulative knowledge that shapes our understanding of society.

At the heart of statistical reasoning lie several foundational concepts that structure how we make sense of data. Population and sample distinguish between the broader group we wish to understand and the subset from which we gather information. Variables represent measurable characteristics that can vary across individuals or cases, while distributions describe how these values are spread or concentrated. Measures of central tendency—such as the mean, median, and mode—summarize where the center of the data lies, whereas measures of dispersion, including variance and standard deviation, capture how widely observations differ from that center. Correlation quantifies the strength and direction of association between variables, and regression extends this by modeling how changes in one variable predict changes in another. Sampling error and bias remind us that our observations are imperfect reflections of reality, prompting the need for confidence intervals and hypothesis tests to assess the uncertainty inherent in our estimates. Finally, while both association and causation are valuable concepts in social inquiry and scientific knowledge, the distinction between them is crucial. This difference highlights the importance of moving beyond merely observing patterns (association) to understanding the underlying mechanisms that produce those patterns (causation).

Right at the outset, it is crucial to have a few key terminologies clearly defined. These terminologies will repeat throughout the book, so it is imperative to have a precise understanding of them.

Population

In statistical reasoning, a population refers to the entire set of entities, individuals, or cases about which we wish to draw conclusions. It represents the full universe of possible observations relevant to a question—whether that universe is as vast as all citizens of a country or as specific as all firms in a given industry. The population is the conceptual target of inference: the reality we aim to understand, even if it cannot be fully observed.

Sample

A sample is a smaller subset of the population from which data are actually collected. Because studying an entire population is in most cases impossible, sampling provides a practical means of acquiring knowledge about the whole through a manageable portion. When properly drawn, a sample reflects the essential characteristics of its population, allowing researchers to make informed and generalizable claims about larger social patterns. In fact, the term statistics itself signifies drawing on a sample from a population.

Variables

A variable is any characteristic or attribute that varies across individuals, groups, or time. In social research, variables can represent attitudes, income, education, or behaviors. They are the building blocks of analysis, allowing us to measure and model the relationships that structure the social world.

Random Variable

A random variable formalizes uncertainty by assigning numerical values to outcomes of a random process. It links probability theory to empirical data, translating abstract uncertainty into quantifiable form. In social science research, a random variable allows us to treat outcomes—such as voting behavior, income, or test performance—as realizations of underlying stochastic processes rather than fixed quantities.

Distribution

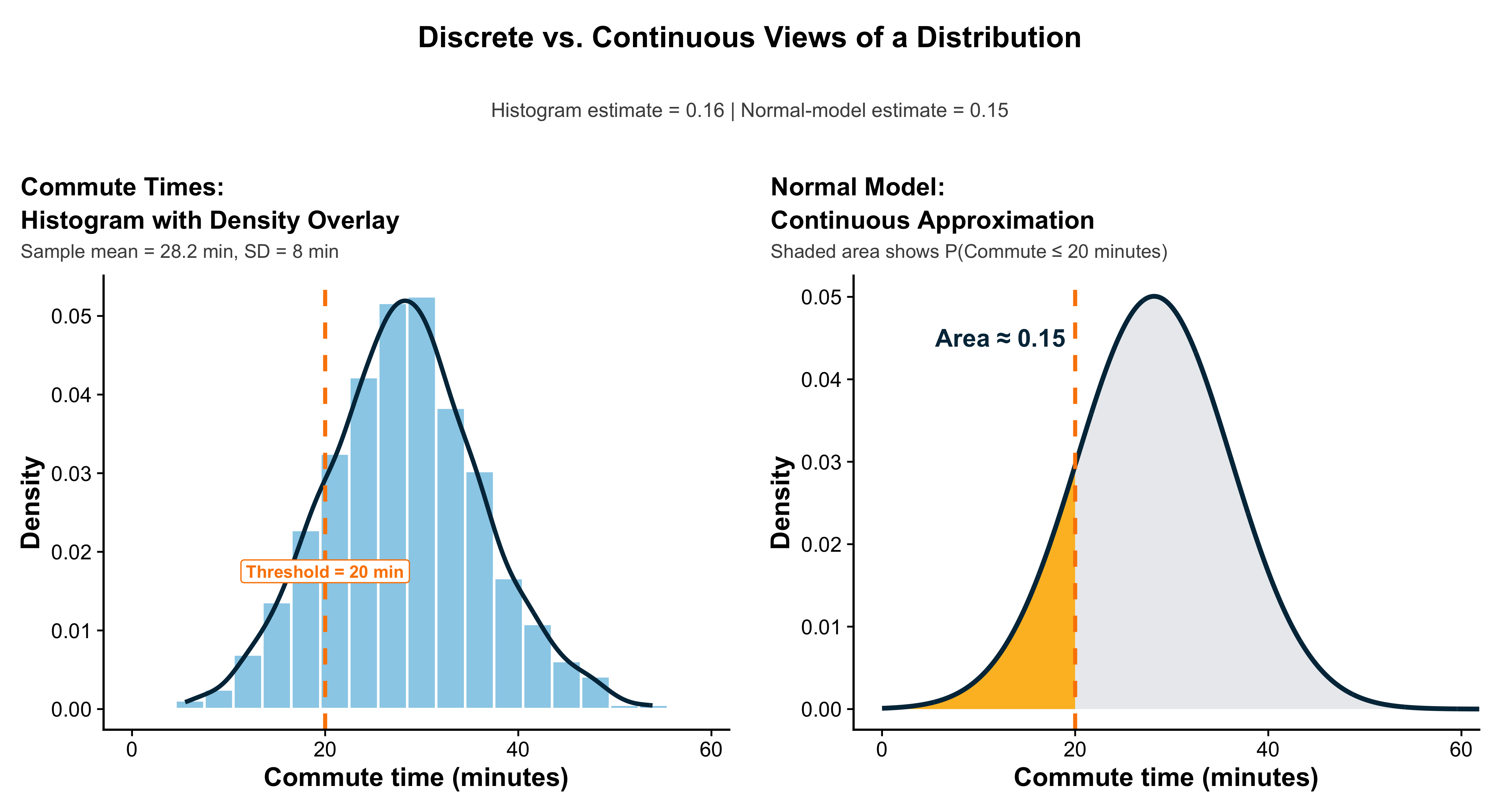

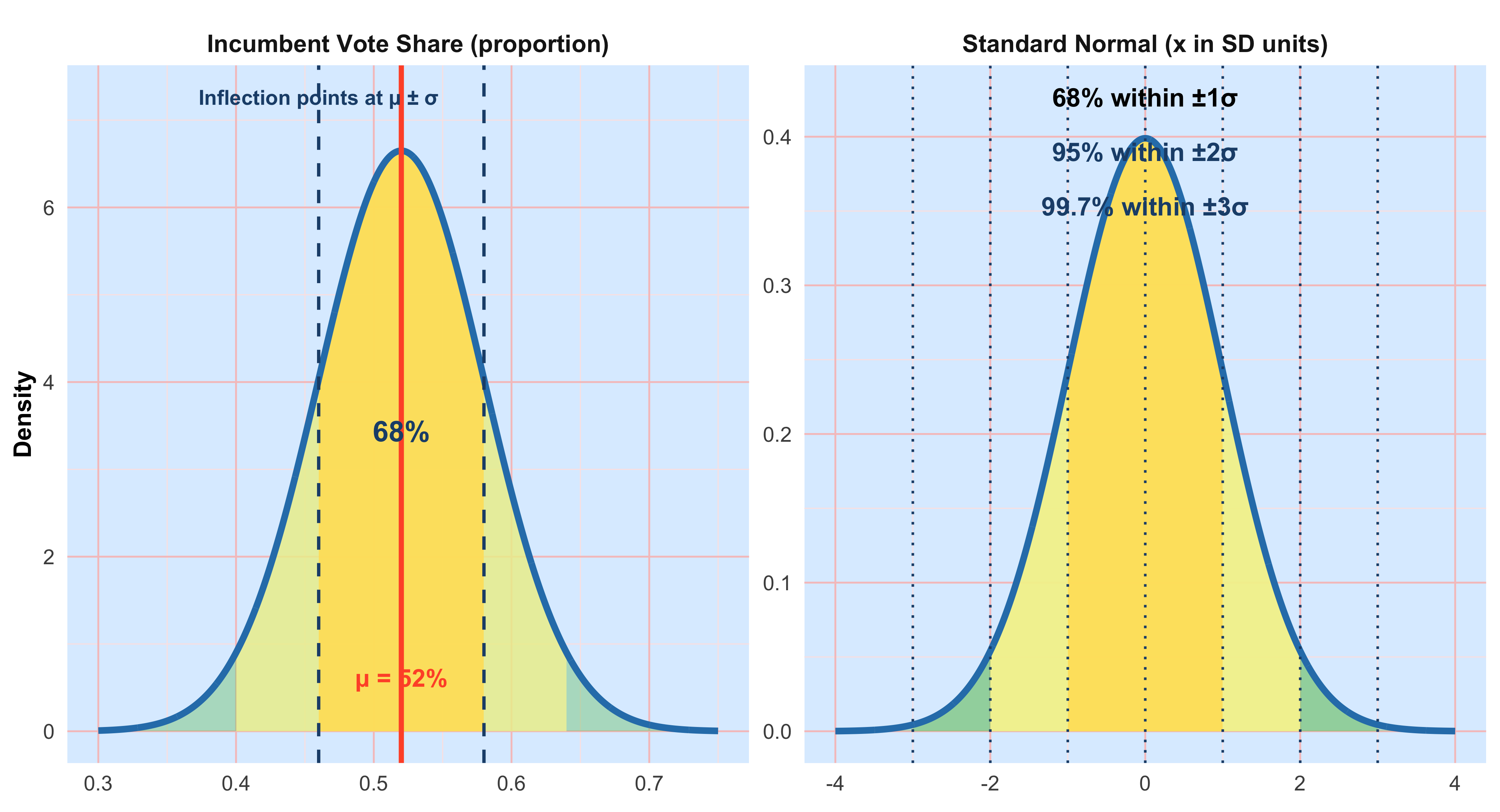

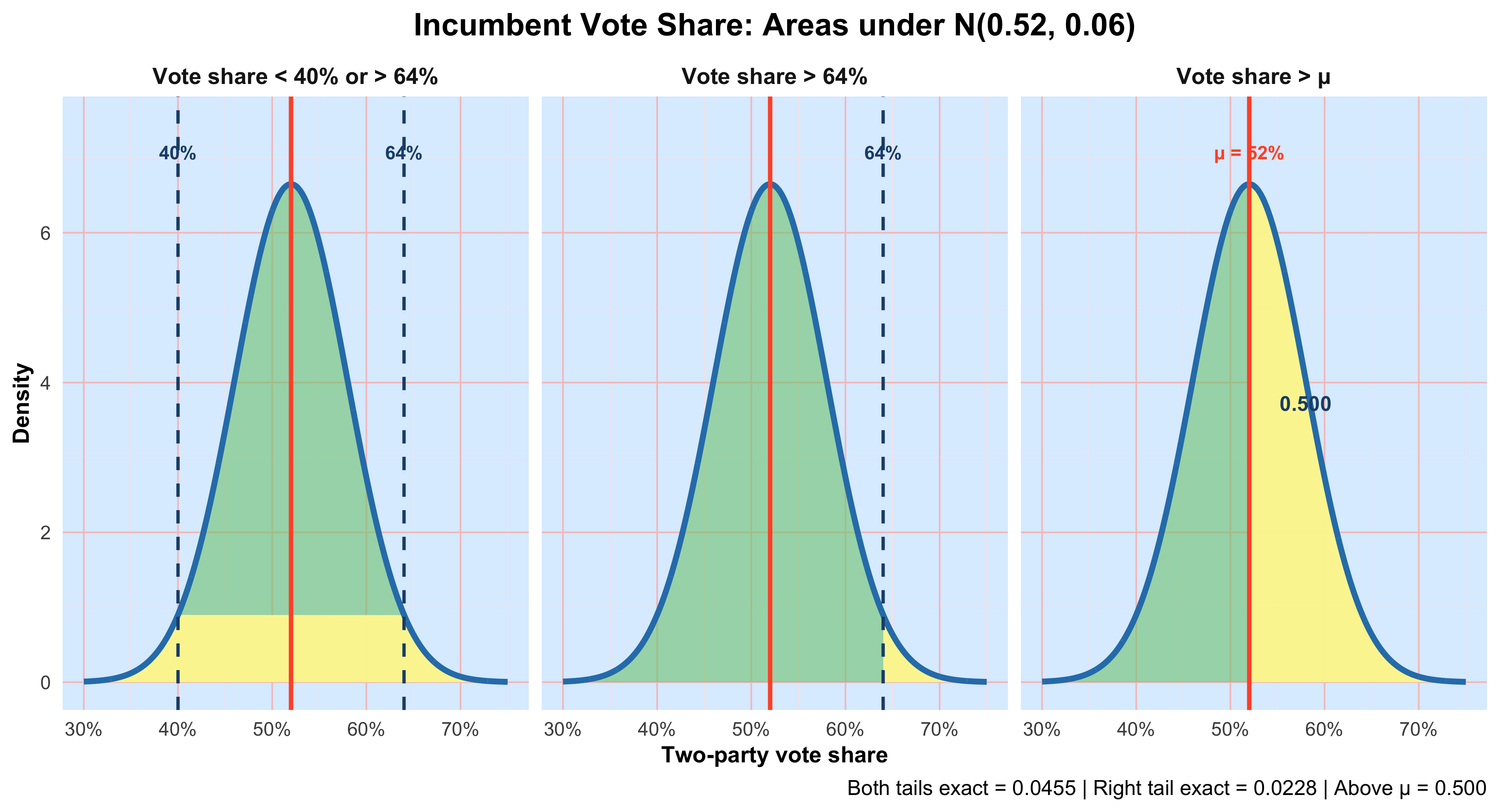

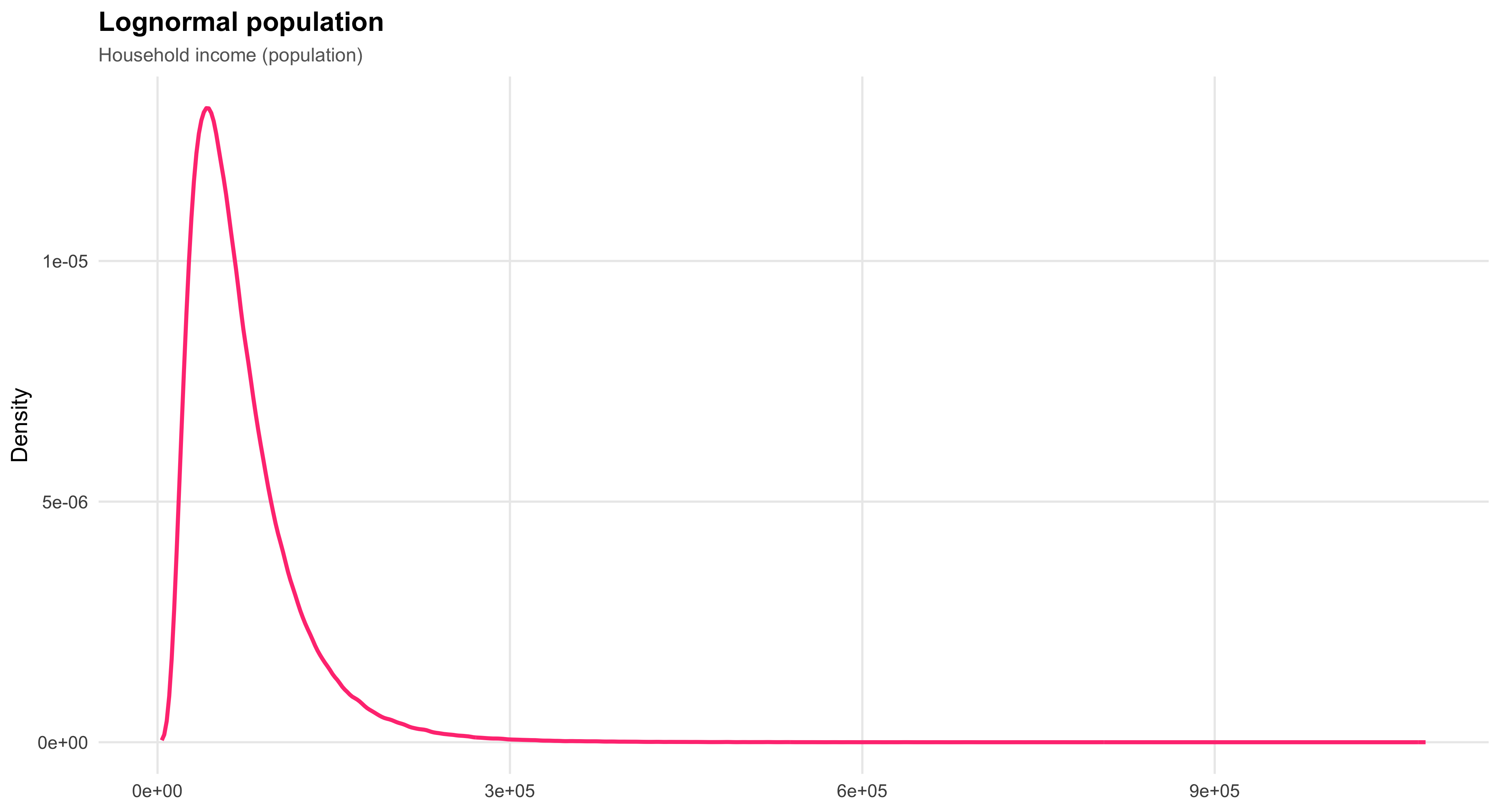

A distribution describes how values of a variable are spread across observations. It reveals the underlying shape of the data—whether clustered, symmetric, or skewed—and allows researchers to identify patterns, outliers, and regularities that might otherwise go unnoticed.

Central Tendency

Measures of central tendency, such as the mean, median, and mode, summarize where the center of a distribution lies. They offer a concise description of the “typical” case within a dataset, providing a first glimpse of the regularities (or irregularities) that statistics seek to uncover.

Dispersion

While measures of central tendency describe where values converge, measures of dispersion—including range, variance, and standard deviation—capture how much they differ. Dispersion quantifies inequality, diversity, or heterogeneity within a population, dimensions that are central to social inquiry.

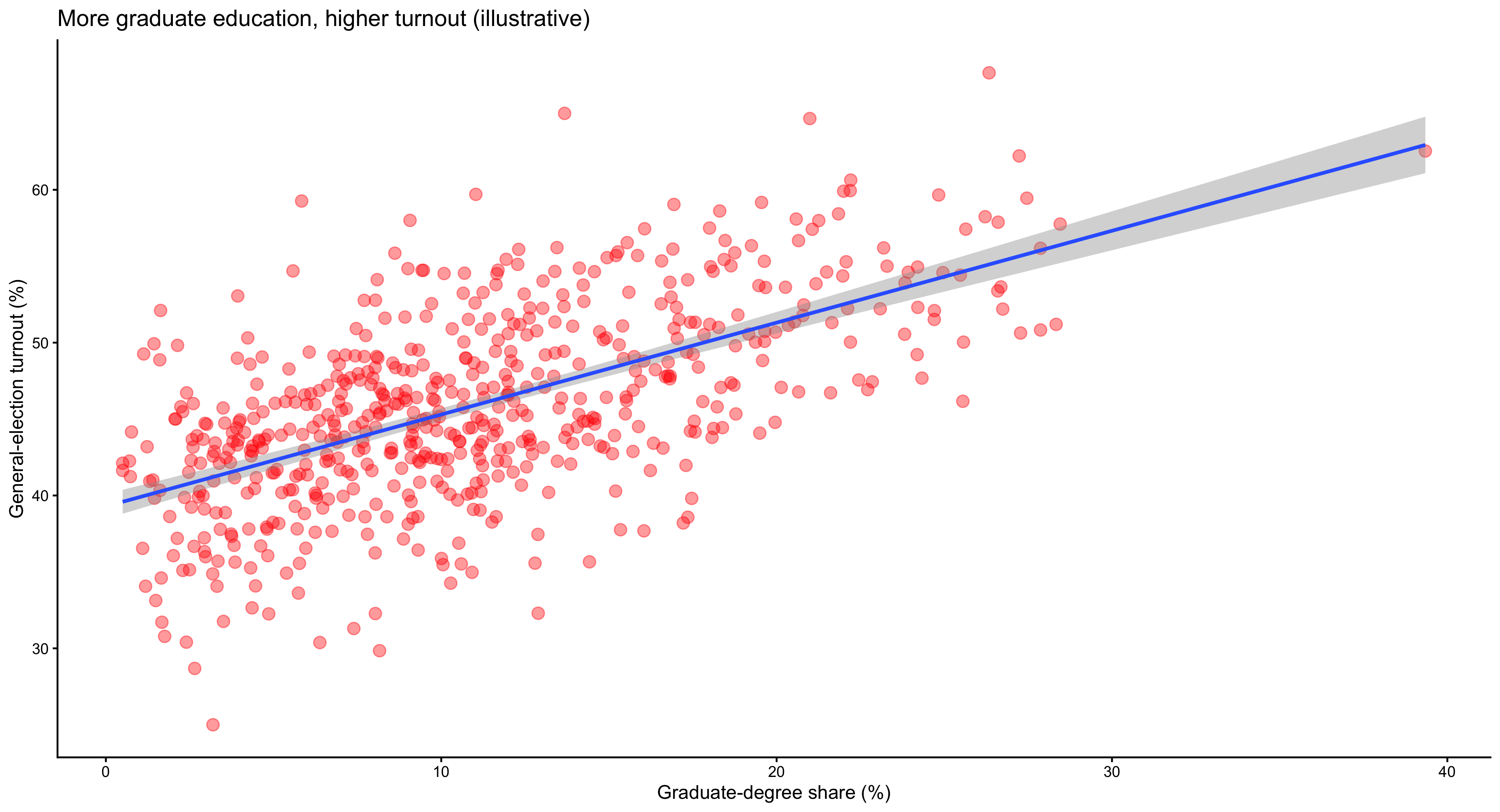

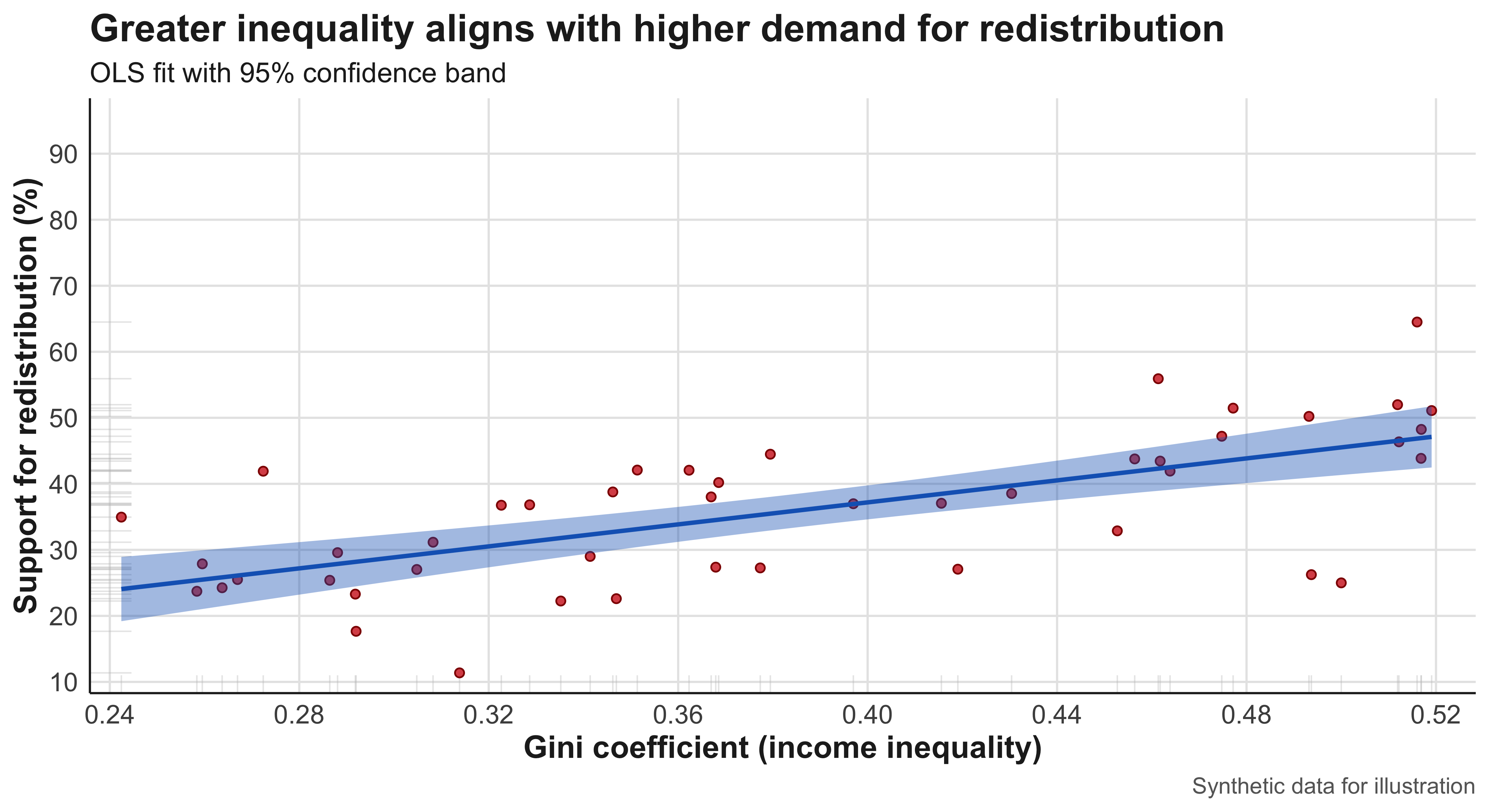

Correlation

Correlation measures the strength and direction of association between two variables. It tells us whether, and to what extent, they move together—positively or negatively—without necessarily implying that one causes the other.

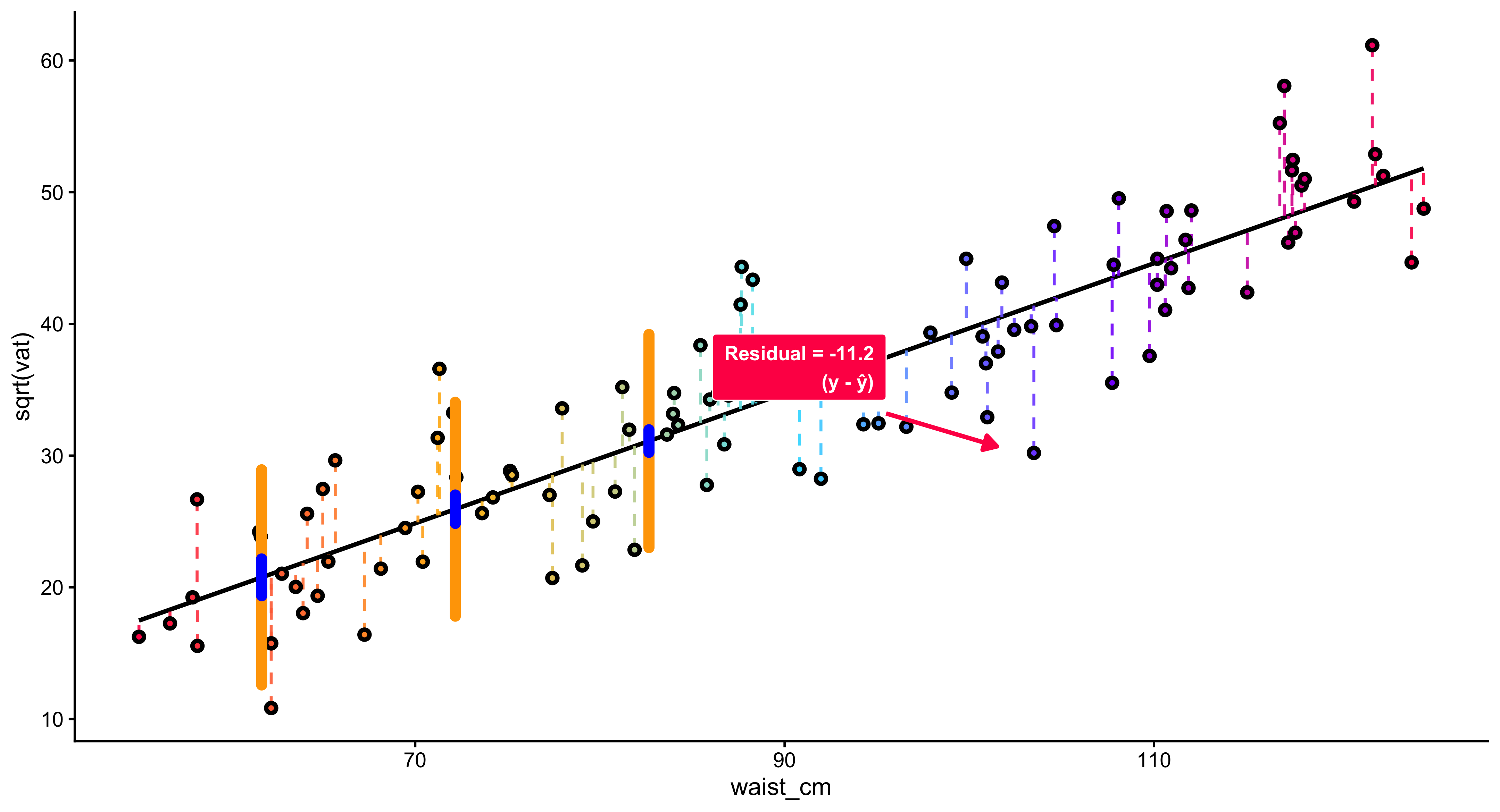

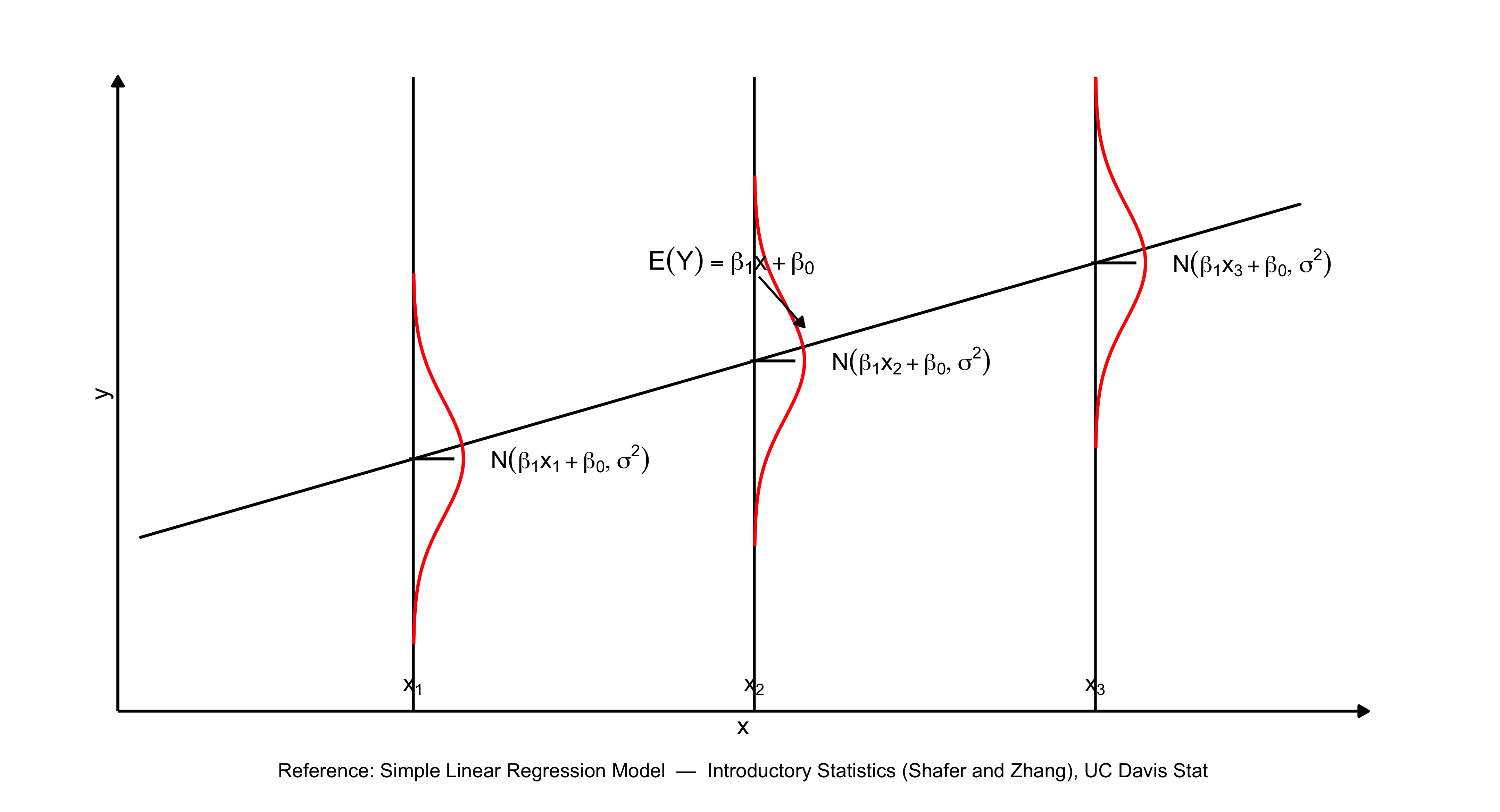

Regression

Regression analysis extends correlation by modeling how changes in one variable are associated with changes in another, holding other factors constant. It is among the most powerful tools for disentangling complex social relationships and estimating the independent effect of specific factors.

Sampling Error and Bias

All empirical research must confront sampling error and bias. Sampling error arises because we study only a portion of the population, while bias occurs when the sample systematically differs from the population it seeks to represent. Recognizing and minimizing these sources of error is essential for valid inference.

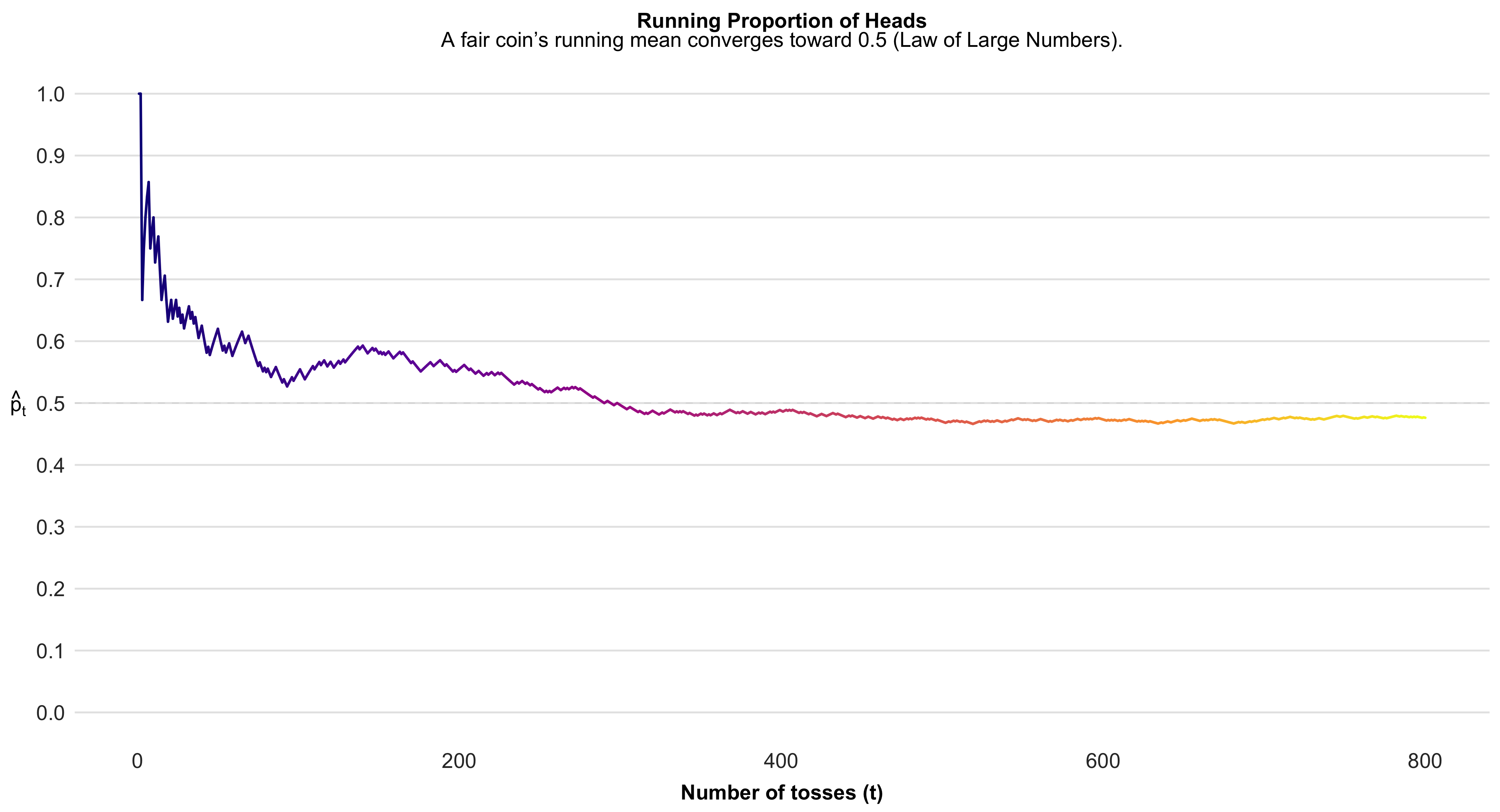

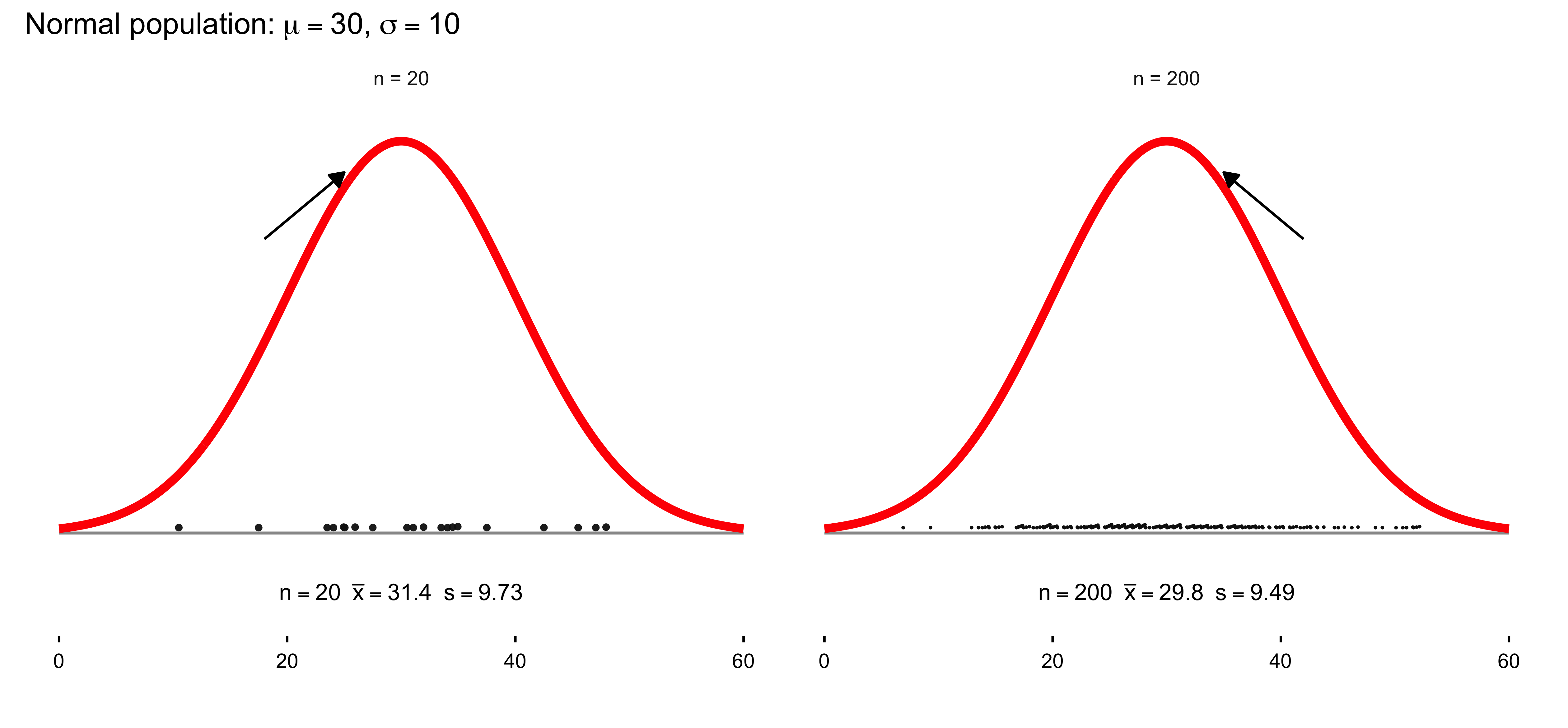

Law of Large Numbers

The Law of Large Numbers states that as the size of a sample increases, its average tends to converge toward the true population mean. This principle underlies the logic of statistical inference: the more observations we gather, the more stable and reliable our estimates become. It provides the mathematical reassurance that, despite randomness in individual outcomes, patterns emerge with regularity and consistency in aggregate.

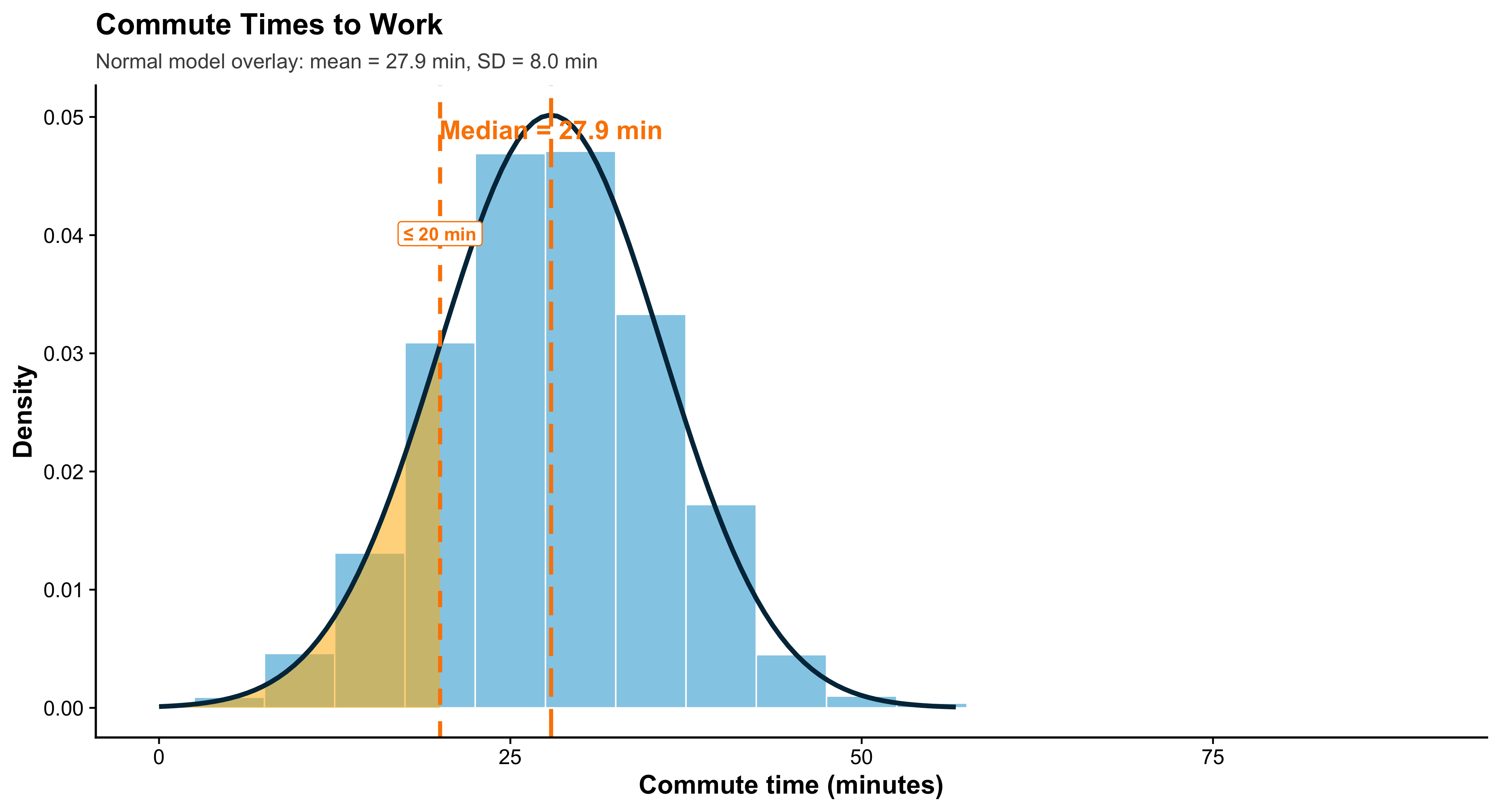

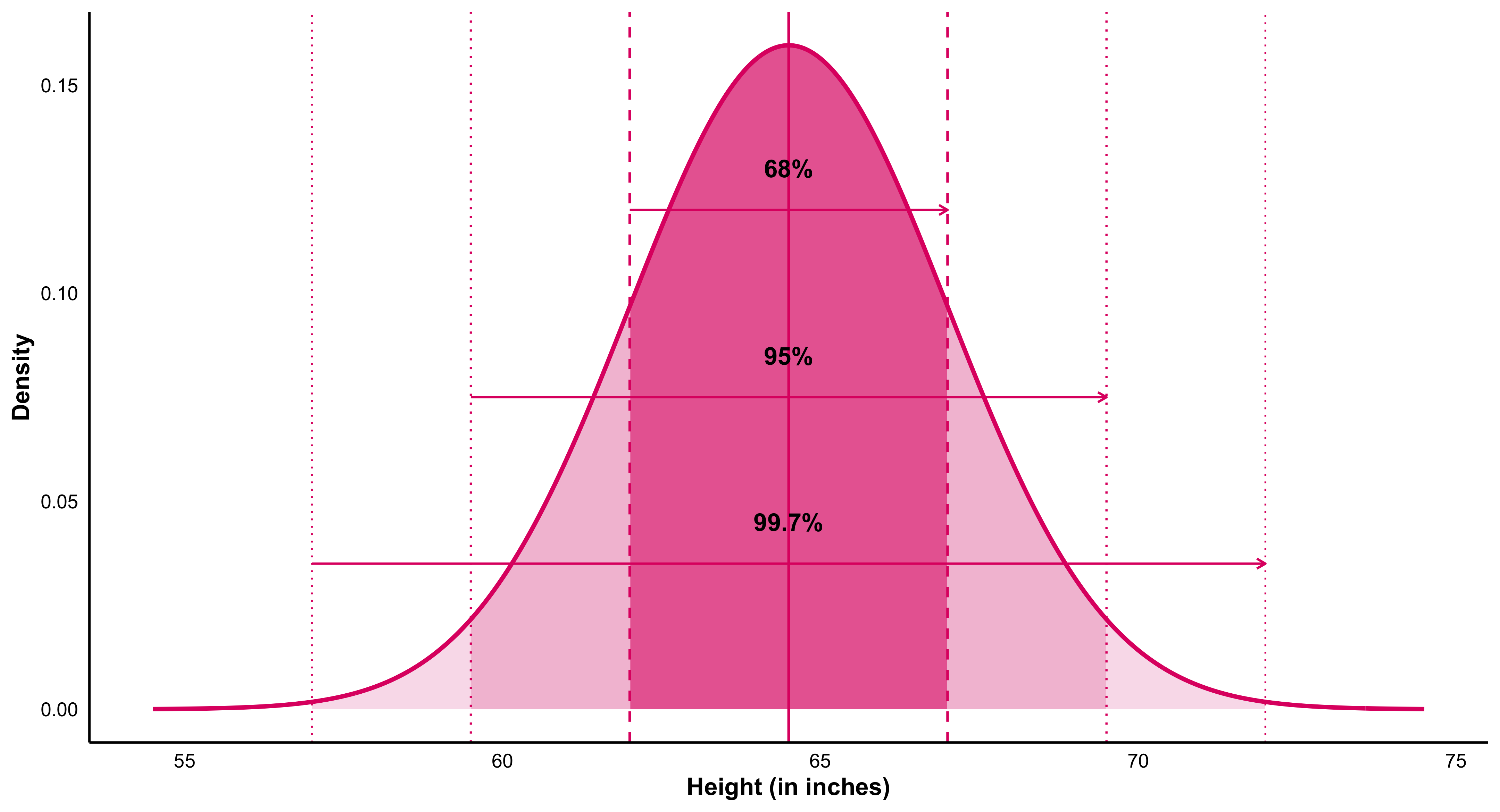

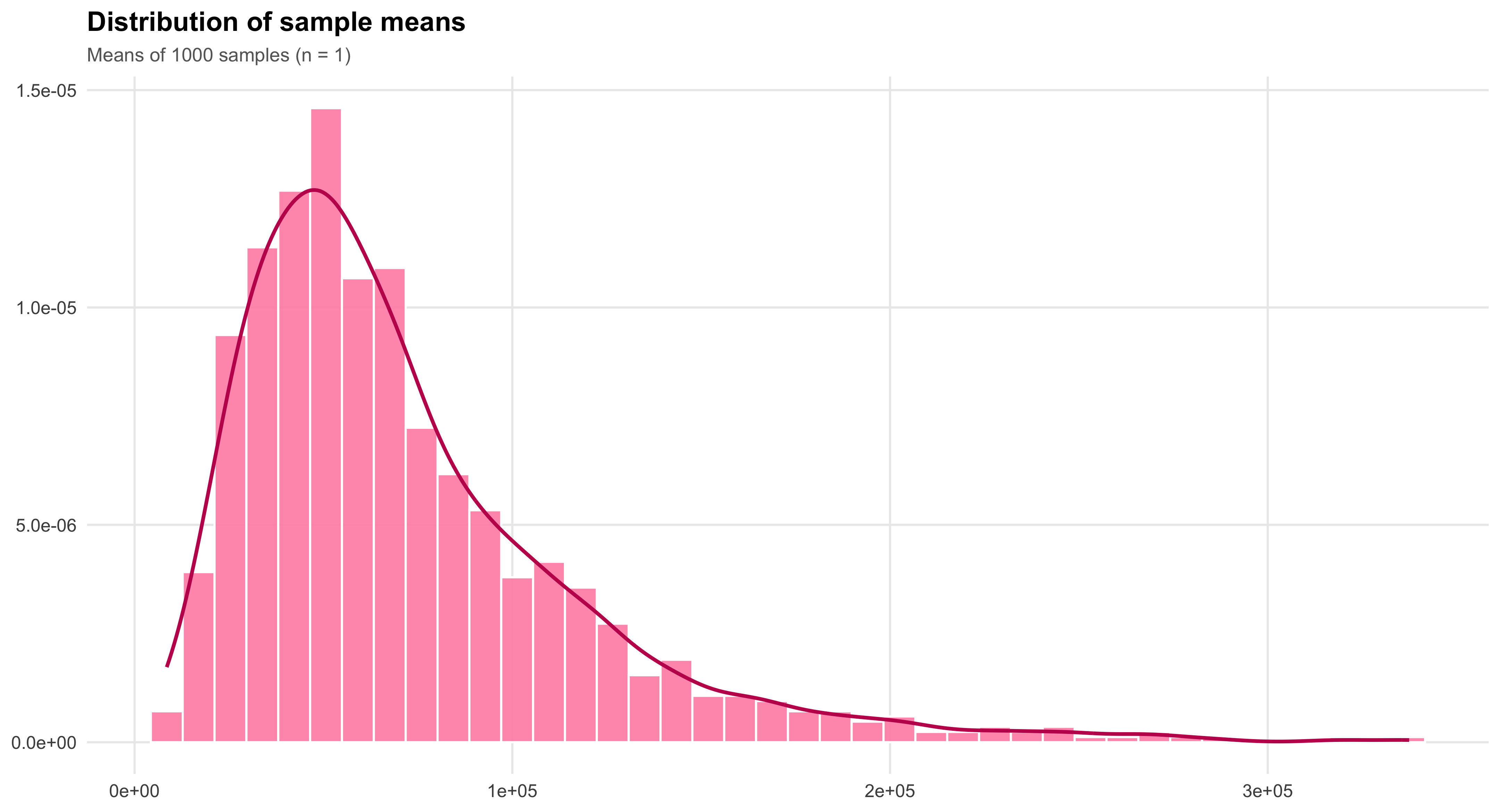

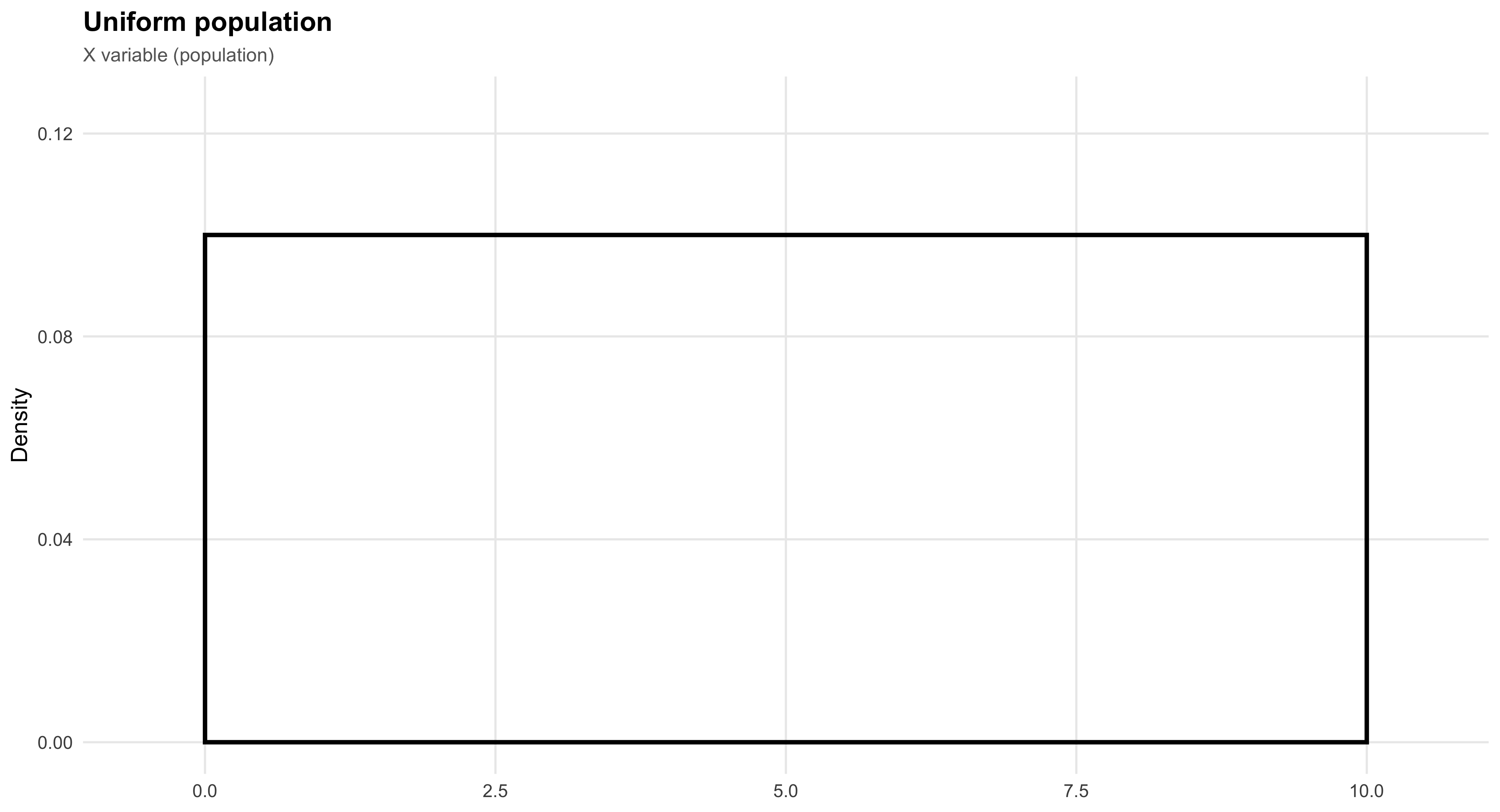

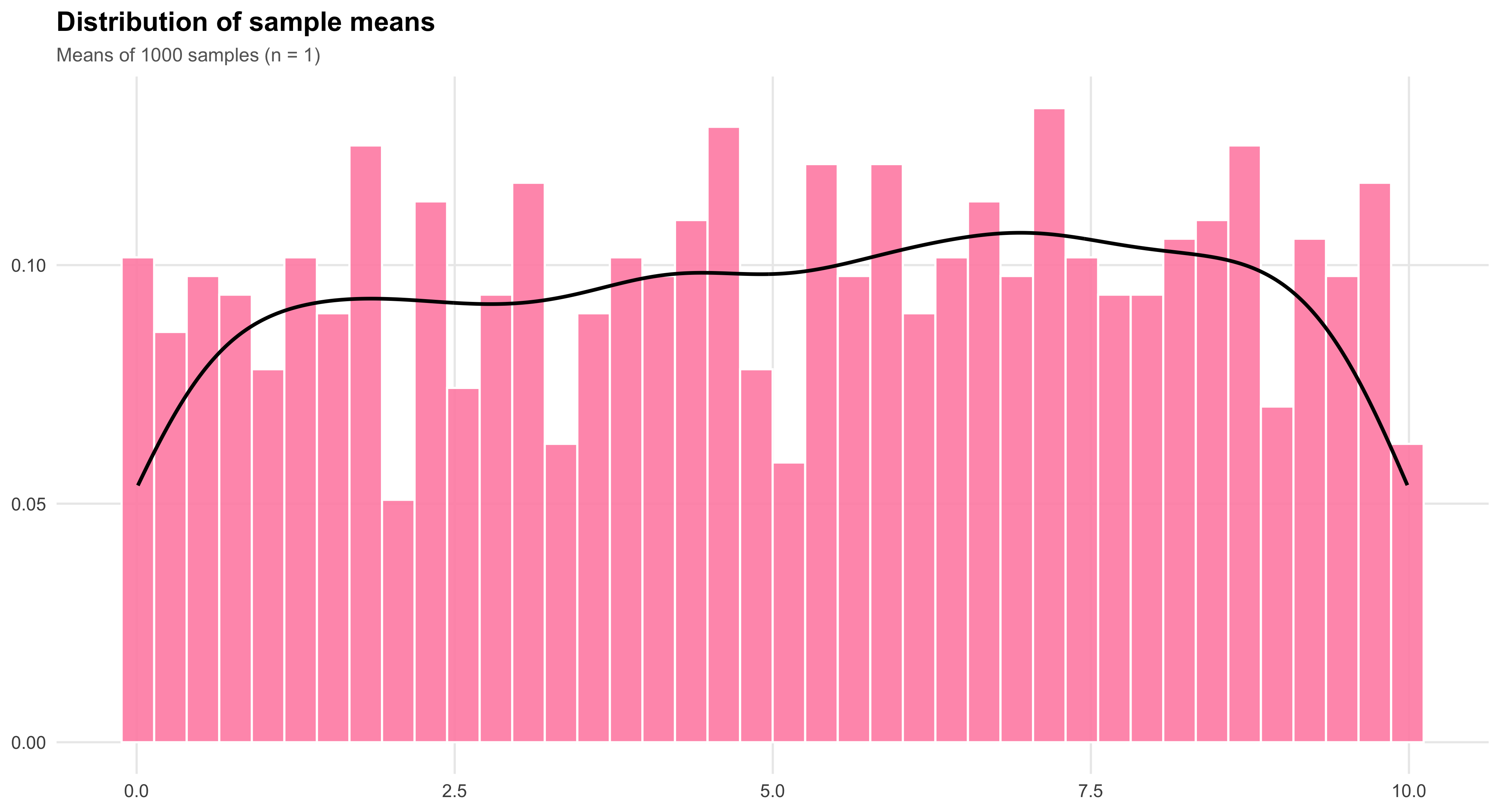

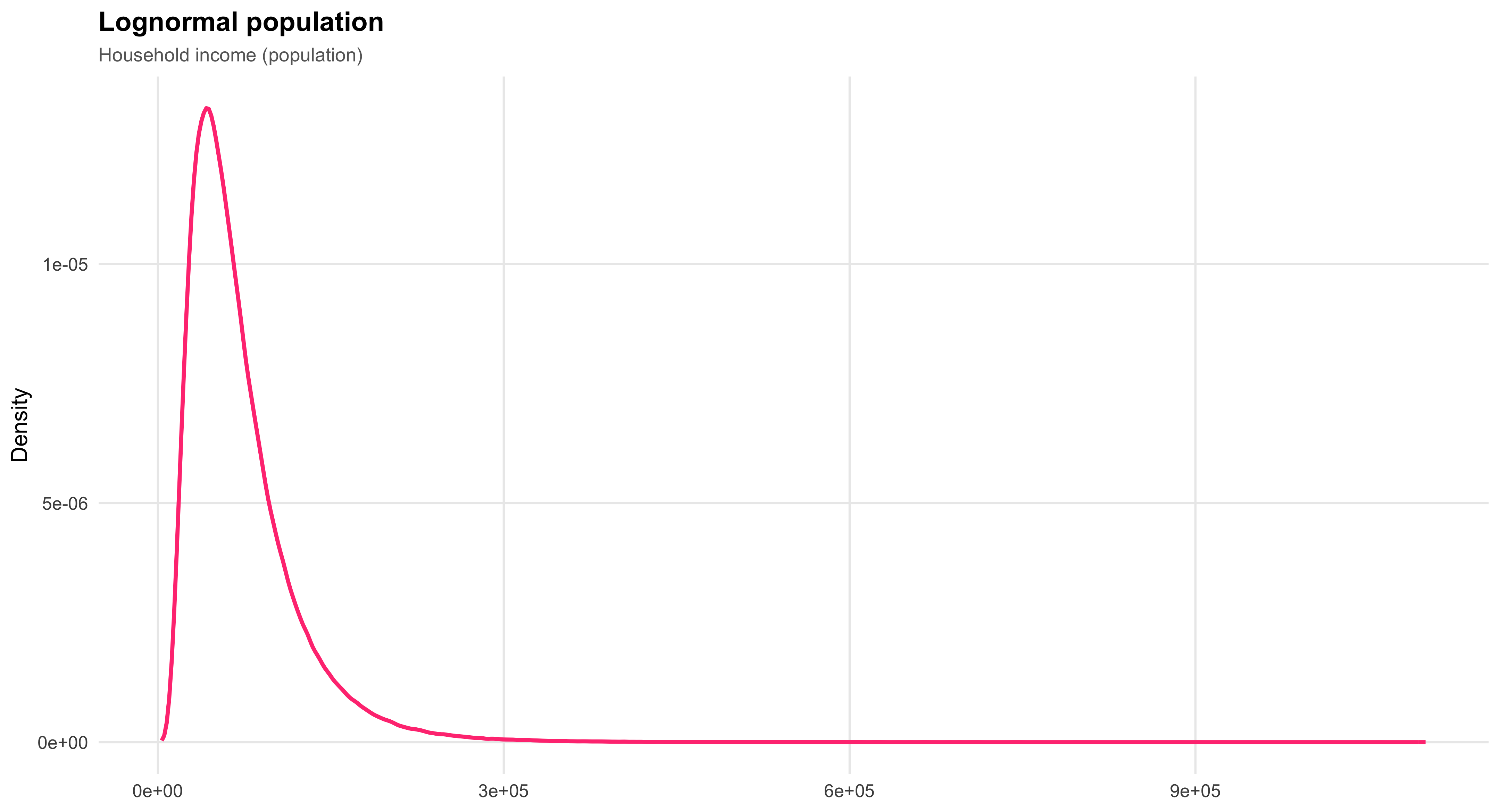

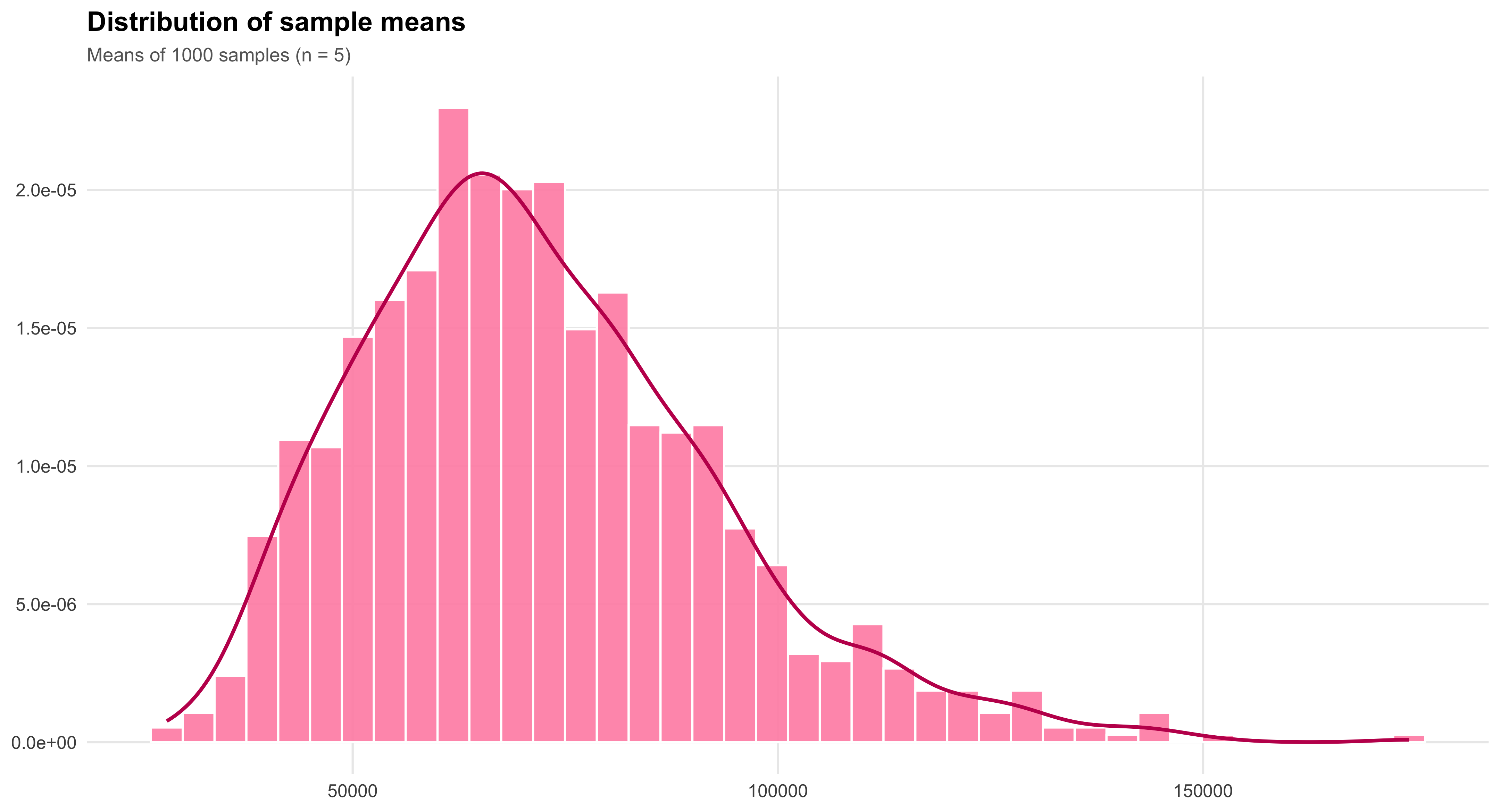

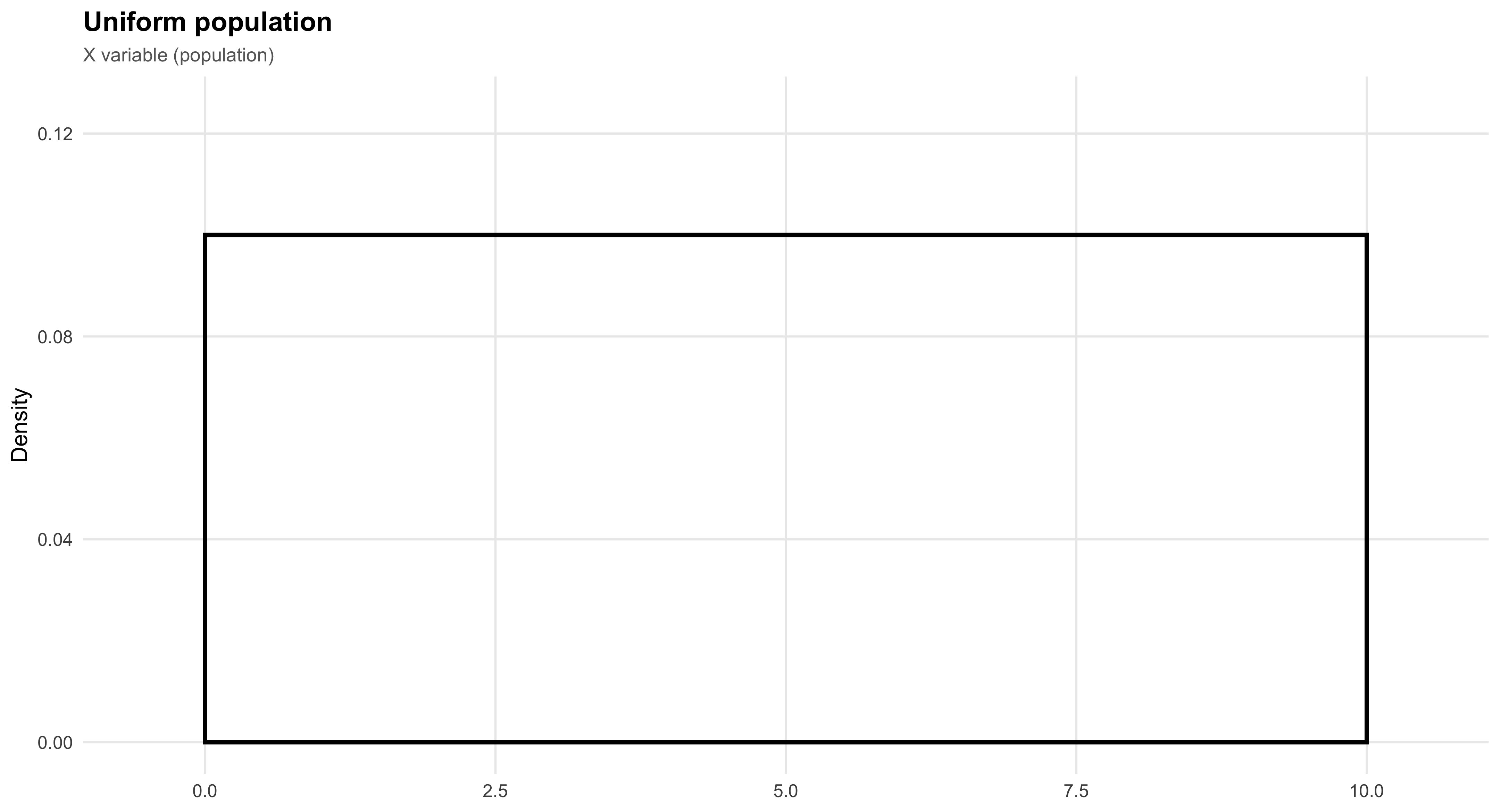

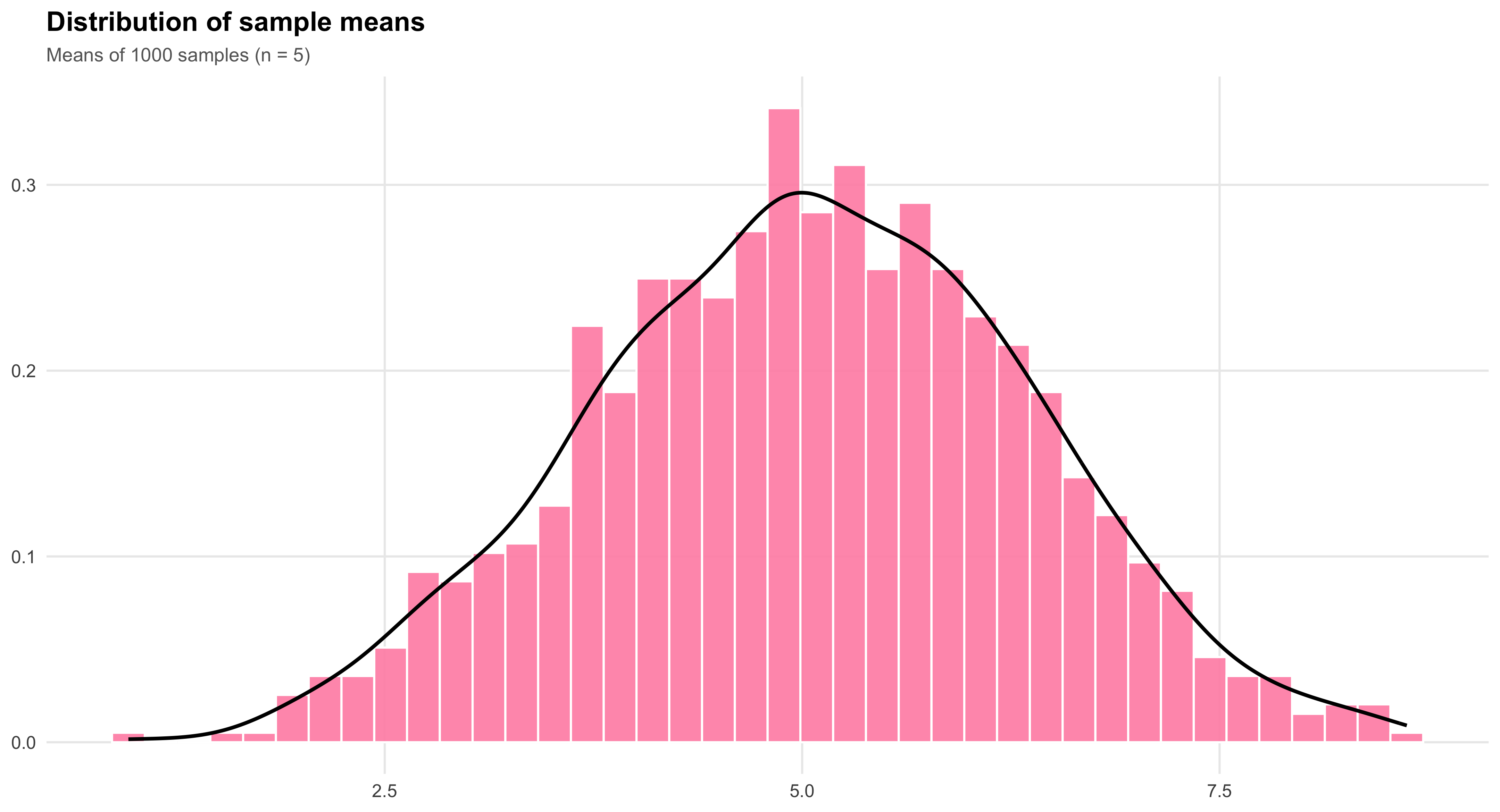

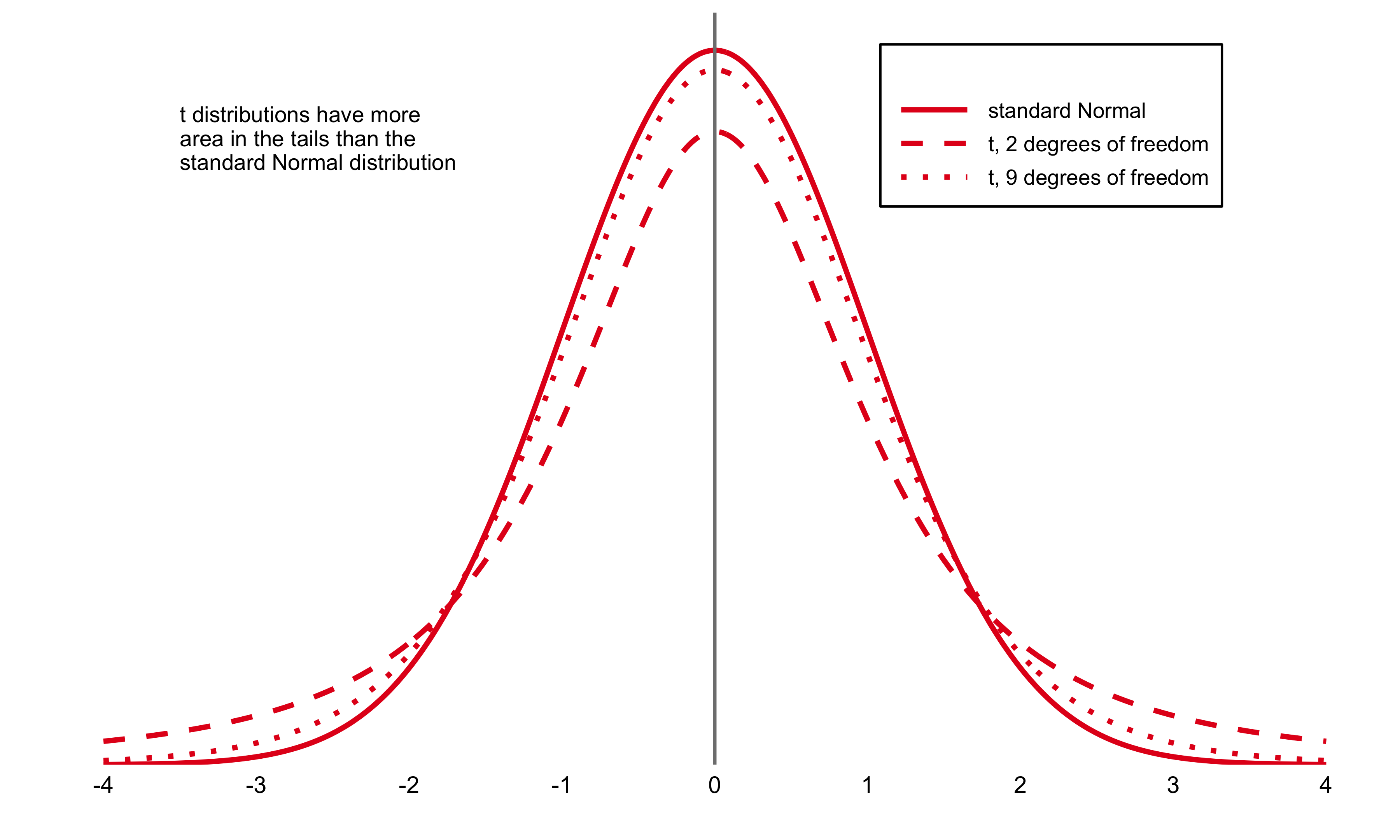

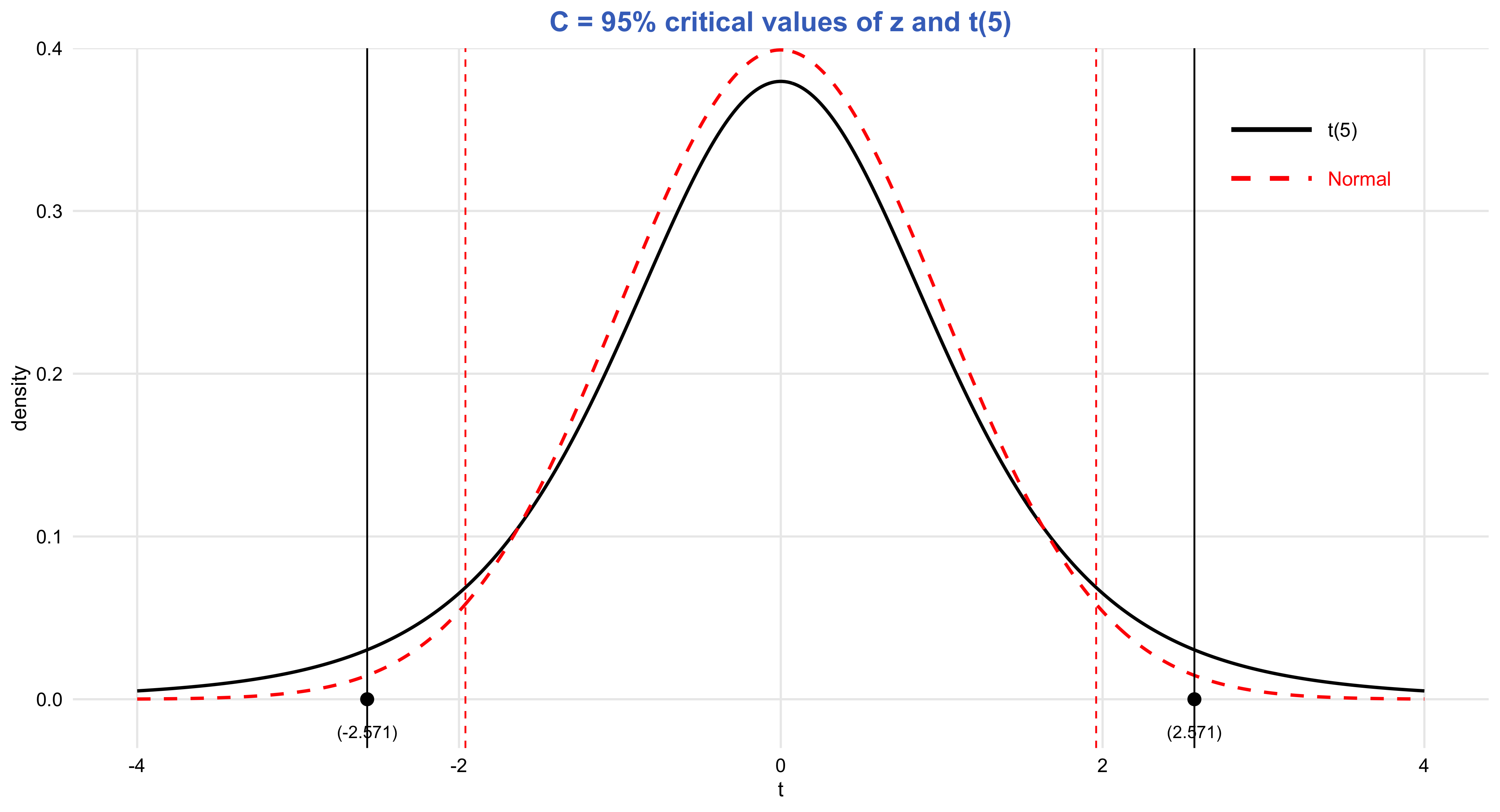

Central Limit Theorem

Closely related to the Law of Large Numbers, the Central Limit Theorem explains why sampling distributions of the mean tend to approximate a normal distribution as sample size grows, regardless of the shape of the underlying population. This remarkable property enables researchers to apply probability-based inference widely across the social sciences. It is the theoretical bridge that makes estimation, confidence intervals, and hypothesis testing possible—transforming randomness into a source of knowledge rather than noise.

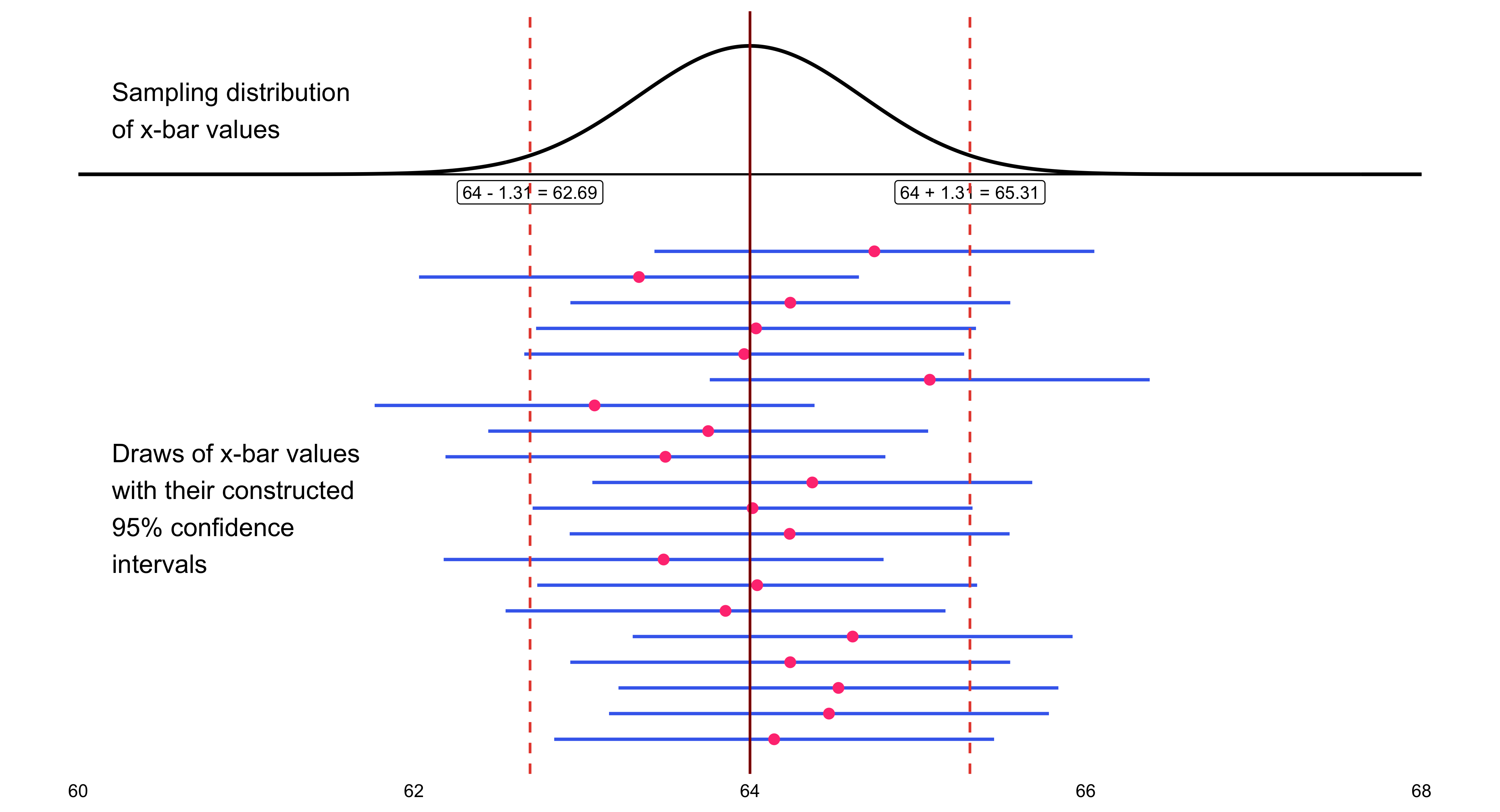

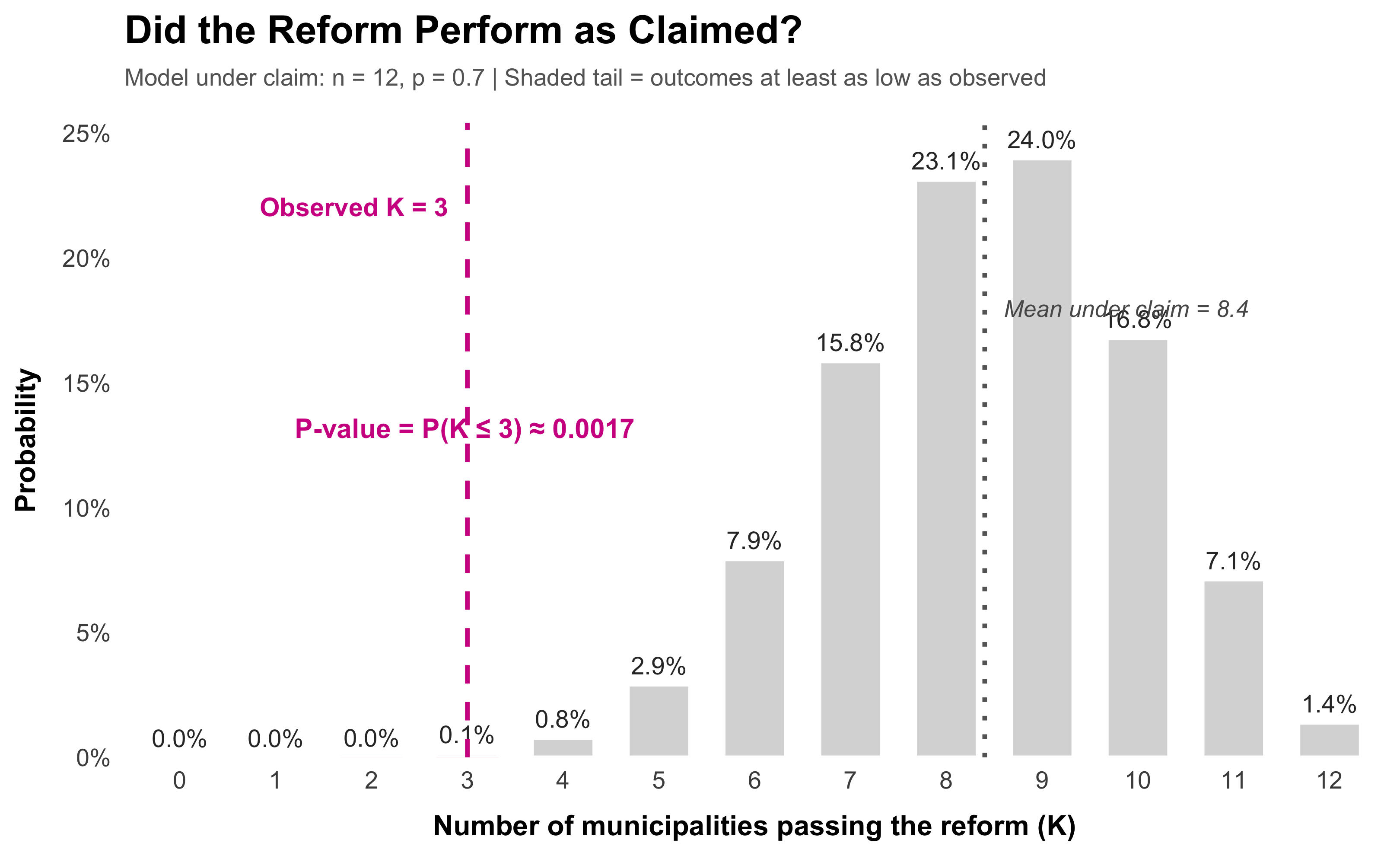

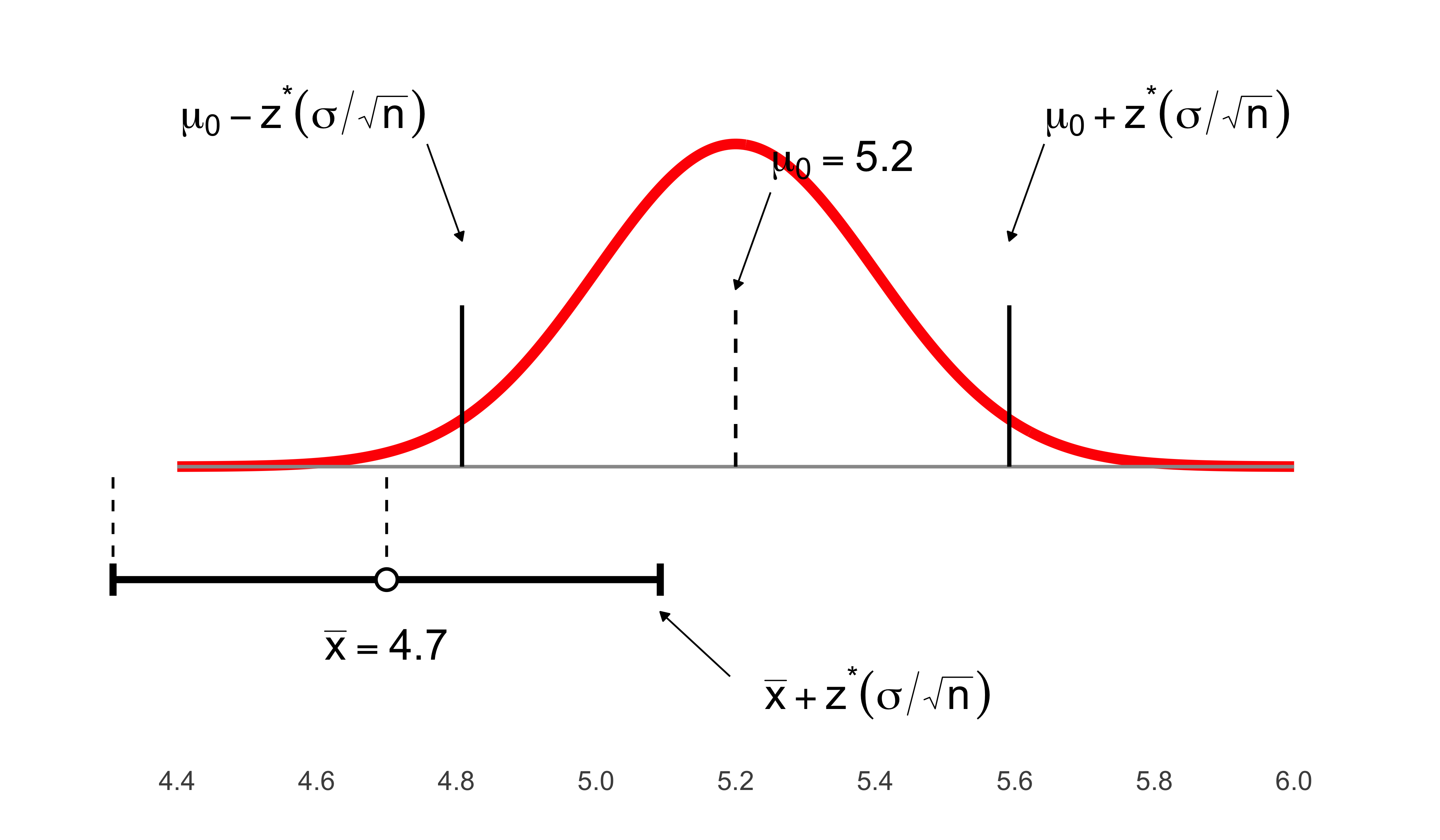

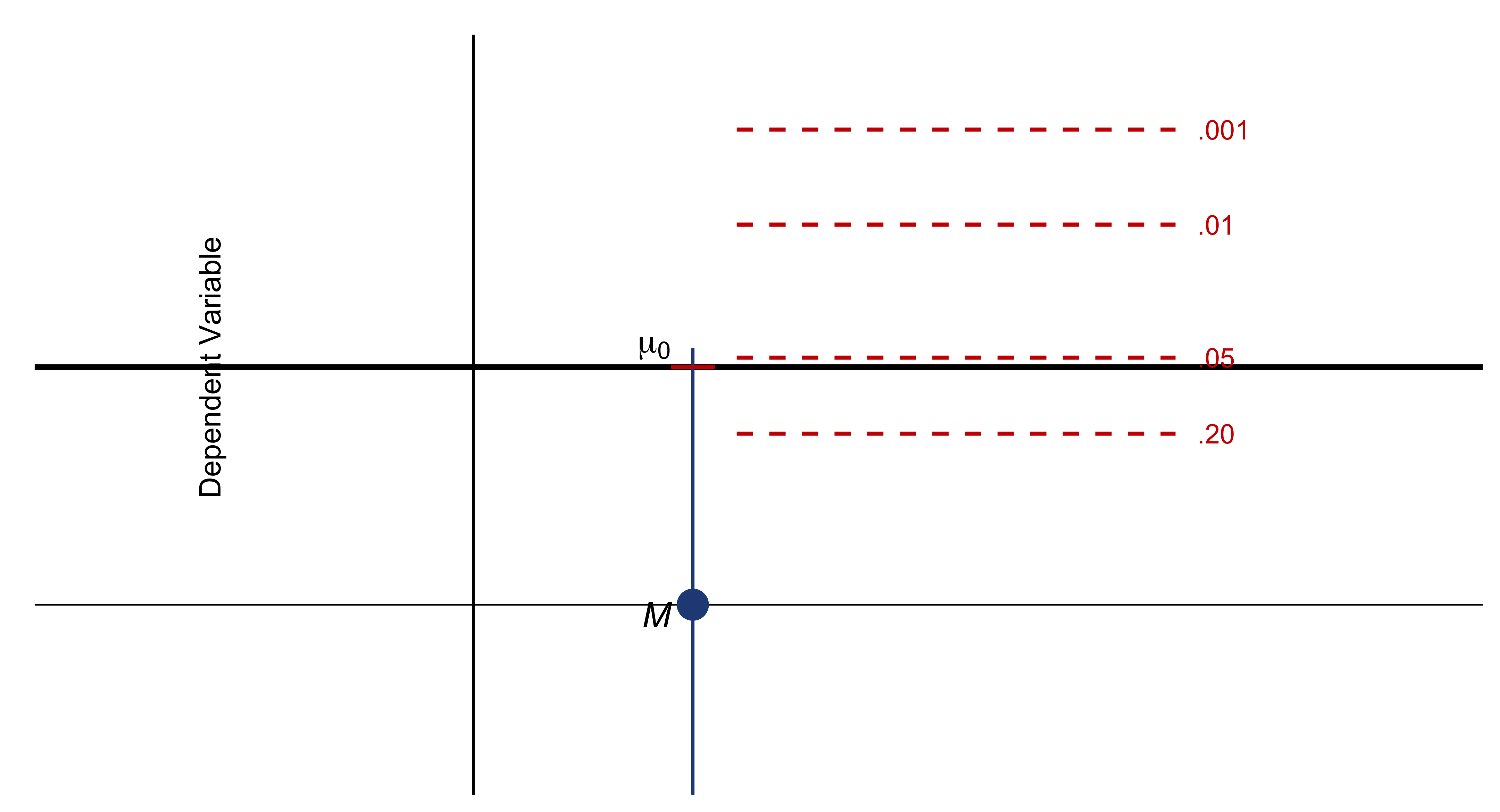

Confidence Intervals and Hypothesis Testing

Because data are inherently uncertain, confidence intervals and hypothesis tests provide a framework for assessing the precision of our estimates and the likelihood that observed patterns are due to chance. They are central to the scientific ethos of quantifying uncertainty rather than ignoring it. A confidence interval (CI) gives an estimated range of plausible values for a population parameter, constructed so that, over many samples, a fixed proportion (the confidence level) of such intervals would contain the true parameter. In the simplest term, a confidence interval is a range of values, derived from sample data, that is likely to contain the true value of an unknown population parameter with a specified level of confidence.

Association and Causation

Finally, the distinction between association and causation lies at the core of scientific reasoning. Association (or correlation) refers to a statistical relationship between two or more variables — that is, when changes in one variable are systematically related to changes in another. For instance, X and Y co-occur: they increase or decrease in tandem with each other to a certain degree. Causation, by contrast, implies a directional, mechanistic, or counterfactual dependency: changes in one variable produce or influence changes in another.

In this introductory chapter, we examined the fundamental definitions of essential statistical concepts. Chapter 2 extends this discussion by addressing the measurement of key summary statistics—such as the mean, median, and mode, variance, minimum, and maximum, etc.

2 Chapter 2: Descriptive Statistics

The first step towards understanding statistical patterns is to learn the data at hand themselves. To learn the dataset we are working on, we must draw on descriptive analysis: I define them as a set of visualization techniques to make sense of patterns. Of course, descriptive analyses are not, by any means, limited to visualization techniques. Quite often descriptive tables are equally and even more telling and powerful.

Descriptive statistics summarize, organize, and describe the main features of a dataset.They provide a quantitative overview without making inferences about a larger population. In what follows, I detail the key elements of descriptive statistics.

Variable: A characteristic that differs from one subject/object to another.

Data: a set of values (numbers or labels) variables can assume.

Population: the entire collection of objects [units] of interest

- Sometimes we can identify all units (if population is students in a course), but often populations of interest are too large to study entirely

Mean (Arithmetic Average):

\[ \bar{x} = \frac{\sum_{i=1}^{n} x_i}{n} \]

Median: The middle value when the data are ordered.

Mode: The most frequently occurring value.

Range:

\[ \text{Range} = \max(x) - \min(x) \]

Variance:

\[ s^2 = \frac{\sum_{i=1}^{n} (x_i - \bar{x})^2}{n - 1} \]

Standard Deviation:

\[ s = \sqrt{s^2} \]

Interquartile Range (IQR):

\[ IQR = Q_3 - Q_1 \]

3. Measures of Shape

These describe the distribution pattern of the data.

Skewness: Indicates the degree of asymmetry (left or right skew).

Kurtosis: Indicates how peaked or flat the distribution is compared to a normal curve.

4. Frequency and Distribution Summaries

These are also additional tools for descriptive statistics and show how data values are distributed.

- Frequency tables and relative frequencies

- Histograms, bar charts, and pie charts

- Stem-and-leaf plots and box plots

5. Position or Percentile Measures

These describe the relative standing of observations within the dataset.

- Percentiles: Divide the data into 100 equal parts.

- Quartiles: Divide the data into four equal parts (Q1, Q2, Q3).

Summary:

Descriptive statistics capture four main aspects of data — central tendency, variability, shape, and distribution — providing a clear and structured overview of the dataset’s characteristics.

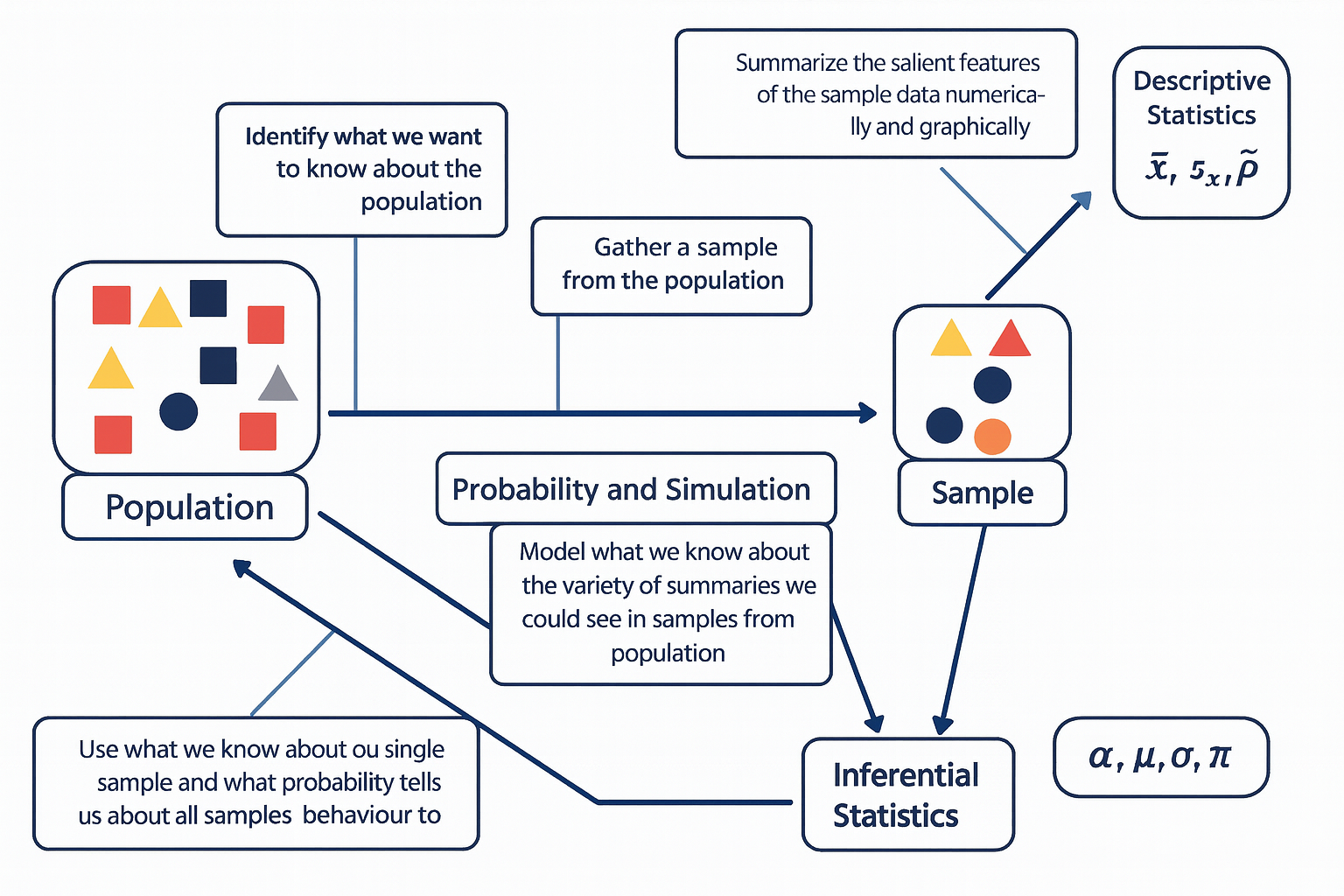

The diagram below illustrates the fundamental relationship between populations, samples, probability, and the two main branches of statistics — descriptive and inferential statistics.

The process begins with the population, representing the entire group of interest. Researchers first identify what they want to know about this population, then collect a sample — a smaller, representative subset of that population.

Descriptive statistics are then used to summarize the sample data both numerically and graphically, using measures such as the sample mean (\(\bar{x}\)), sample standard deviation (\(s_x\)), and sample proportion (\(\hat{p}\)). Next, probability and simulation help model the expected variability among possible samples that could be drawn from the population, allowing us to understand how sample summaries might behave due to random chance.

Finally, through inferential statistics, we use what we know from our sample — along with probability theory — to make educated, probabilistic statements about population parameters, such as the population mean (\(\mu\)), standard deviation (\(\sigma\)), proportion (\(\pi\)), and significance level (\(\alpha\)).

In essence, this figure captures how statistical reasoning flows from data collection and description to modeling and generalization — moving from what we observe in a sample to what we infer about the entire population.The diagram below is one way to think through statistics when we seek to answer a question about the social world.

2.1 Collecting Data

The stepping stone towards conducting statistical analysis is collecting data. The elegance of good research begins not with equations or estimation models, but with the deliberate act of collecting or finding the right information. Whether searching for the relevant survey, locating a reliable public dataset, or designing your own instrument to capture social realities, data collection is the foundation upon which all inference rests. This process is both scientific and creative—it requires knowing what questions matter, where credible evidence resides, and how to translate abstract ideas into measurable variables. Without the discipline of gathering data carefully, analysis becomes speculation. But when we gather the right data—accurate, representative, and conceptually aligned—the analytical process becomes not only possible but powerful.

What major survey datasets are publically available? Here are a list of a few major survey datasets that are regularly used in social sciences.

American National Election Studies (ANES) * US-based

The American National Election Studies (ANES) are a cornerstone of political behavior research in the United States. Established in 1948 and jointly administered by Stanford University and the University of Michigan, ANES surveys collect rich pre- and post-election data to understand how citizens form opinions, participate in politics, and make electoral choices. The ANES includes detailed measures of political ideology, media exposure, partisanship, policy preferences, and social identity, along with contextual information about candidates and campaigns. Its time-series (i.e., repeated over years) design—fielded every national election cycle—allows researchers to trace the evolution of political attitudes and polarization in the U.S. over more than seven decades. Because of its rigorous sampling, extensive questionnaire design, and continuity of measures, ANES remains an important dataset for studying democratic engagement, voter behavior, and the sociopolitical landscape.

Current Population Survey (CPS) * US-based

The Current Population Survey (CPS), jointly conducted by the U.S. Census Bureau and the Bureau of Labor Statistics, is the primary source of labor force statistics in the United States. Since its inception in the 1940s, the CPS has provided monthly data on employment, unemployment, earnings, and demographic characteristics for a representative sample of roughly 60,000 U.S. households. In addition to the core labor force questions, the CPS includes periodic supplements that cover vital social topics—such as education, fertility, civic participation, and voting behavior (the CPS Voting and Registration Supplement). Because of its frequency, large sample size, and policy relevance, the CPS is essential not only to economists and policymakers but also to sociologists and demographers studying inequality, class mobility, and household dynamics in real time.

Panel Study of Income Dynamics (PSID) * US-based

The Panel Study of Income Dynamics (PSID), begun in 1968 at the University of Michigan’s Institute for Social Research, is one of the longest-running household panel studies in the world. Unlike cross-sectional surveys, the PSID follows the same families and their descendants over decades, enabling the study of intergenerational mobility, family formation, and long-term income dynamics. Its design captures both economic and social dimensions of life—income, employment, wealth, health, and housing—allowing researchers to trace how structural inequality unfolds across generations. The PSID’s intergenerational structure has made it particularly valuable for studying persistent poverty, wealth accumulation, and social stratification within the U.S., and its supplements on child development and transition to adulthood add depth to understanding life-course processes.

World Values Survey (WVS) * Cross-National

The World Values Survey (WVS) is an ambitious, long-running project that seeks to map the cultural values and beliefs of people around the globe. Initiated in 1981 and now spanning over 100 countries, the WVS measures attitudes toward democracy, religion, gender roles, economic life, and social trust. Its conceptual foundation builds on the idea that cultural change and economic development are interconnected—an idea most famously articulated by Ronald Inglehart’s theory of postmaterialist value change. Conducted in waves approximately every five years, the WVS provides a comparative framework to examine how value systems chaneg across nations.

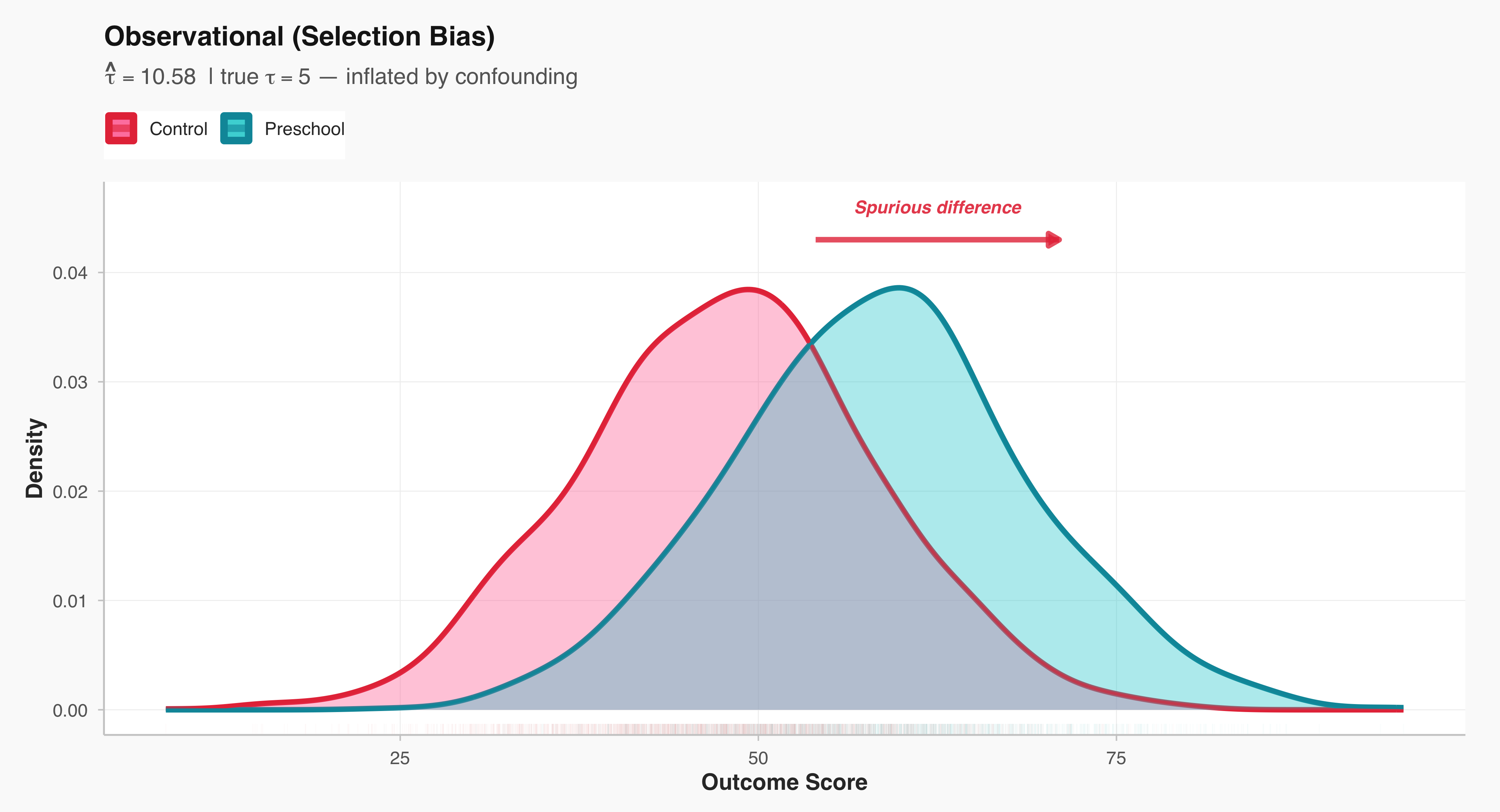

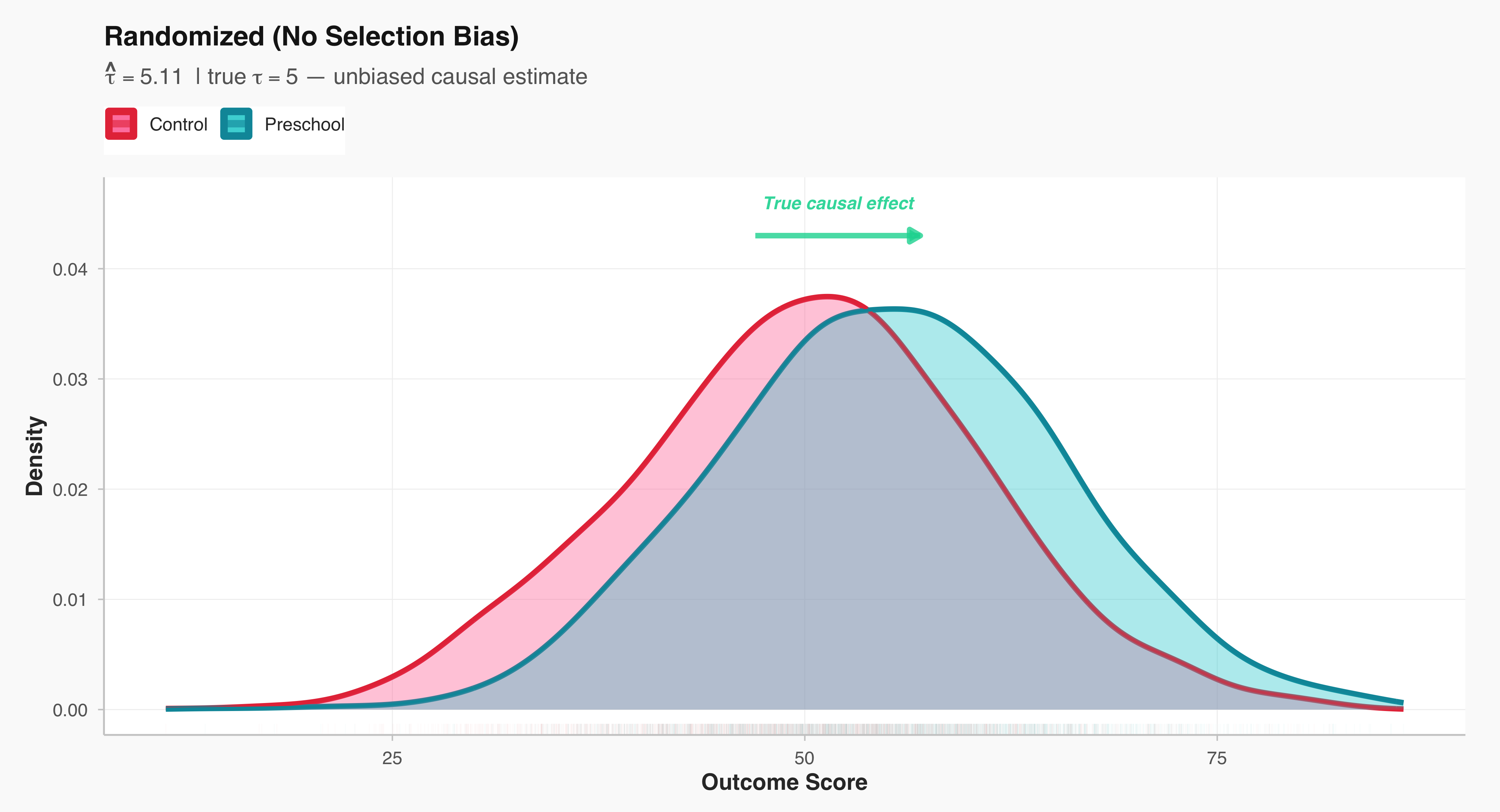

A Key Distinction Between the Types of Studies

A central distinction in social research lies between studies that intervene in the social world and those that observe it as it naturally unfolds. Experimental studies are designed to establish causal relationships: researchers deliberately manipulate one or more variables—such as exposure to a message, allocation of resources, or participation in a program—and then observe the resulting changes in outcomes. In contrast, observational studies refrain from such manipulation; they document social behavior, attitudes, or outcomes as they occur in real life. While experiments offer stronger leverage for identifying causality through control and randomization, observational designs provide insights into complex social processes that cannot be ethically or practically manipulated. Recognizing this distinction is fundamental, as it shapes how researchers interpret evidence, evaluate validity, and generalize findings to broader populations.

Experiment

In social science, an experiment is a systematic study in which the researcher deliberately introduces an intervention or treatment in order to observe its causal effect on an outcome. The key feature of experimentation is control—researchers manipulate one or more independent variables while holding other factors constant, allowing for the identification of causal relationships.

Example: A sociologist wants to examine whether exposure to news stories about economic inequality affects people’s support for redistributive policies. Participants are randomly assigned to read one of five news articles—each emphasizing a different narrative about inequality—and then asked about their policy preferences. Because the researcher actively manipulates what participants are exposed to, this constitutes an experiment.

Observational Study

An observational study involves collecting data without manipulating or altering the social processes under investigation. Researchers observe patterns, behaviors, or associations as they naturally occur, rather than imposing an intervention. This design is often used when experimental manipulation would be unethical or impractical.

Example: A political scientist wants to know whether residents of wealthier neighborhoods are more likely to vote. They collect information from voter registration records and census data, comparing turnout rates across neighborhoods with different socioeconomic profiles and characteristics. Because the researcher merely observes existing differences without assigning any treatment, this is an observational study.

Sample

A sample is a subset of a broader population that researchers study in order to make inferences about that population. Because it is rarely possible to collect information from every individual in a population, sampling allows researchers to study social phenomena efficiently while maintaining representativeness.

Simple Random Sample (SRS)

A simple random sample (SRS) is a sampling method in which every possible subset of the population of a given size has an equal chance of being selected. This procedure minimizes selection bias and ensures that observed patterns can be attributed to the population rather than to systematic differences in who was included.

Example: To study public attitudes toward climate change, a researcher draws a random sample of 1,500 adults from a national database where each individual has an equal probability of being chosen. This kind of sampling forms the statistical foundation for most nationally representative surveys in the social sciences, such as the General Social Survey or European Social Survey.

2.2 Summarizing Data

In any empirical investigation, one of the first steps is to summarize information about the units or individuals we study. Social scientists often distinguish between characteristics of an entire population and those of a sample drawn from it. The key concepts here are parameters and statistics—two closely related but conceptually distinct ideas that link description and inference.

A parameter is a numerical summary that describes a true characteristic of the entire population—the full set of individuals, organizations, or events that constitute our field of interest. In practice, parameters are almost always unknown because it is rarely feasible to collect information on every member of a population. For example, if we could measure the average income of all households in the United States, that value would represent a population parameter. Likewise, the mean level of political trust among all adults in a country, or the proportion of citizens who voted in a specific election, are parameters describing entire populations.

A statistic, by contrast, is a numerical summary computed from a sample—a smaller subset of observations selected from the population. Because researchers typically rely on samples to study larger populations, sample statistics serve as our best estimates of the unknown population parameters. For instance, the average income calculated from a nationally representative survey, such as the General Social Survey, is a sample statistic that approximates the population mean. Similarly, if we surveyed a thousand voters to estimate the proportion who support a particular candidate, that proportion would be a statistic, standing in for the true but unknown population value.

The relationship between populations and samples is often visualized as a flow of information: on one side lies the broad and complex population we seek to understand, and on the other, the smaller and more manageable sample we can actually observe. Between the two stands the domain of probability, which provides the theoretical bridge linking what we see to what we infer. Probability theory helps us quantify how much variation we might expect if we were to draw many different samples from the same population. Through probibility simulation and modeling, researchers can approximate this sampling variability, giving rise to measures of uncertainty such as confidence intervals and standard errors.

Together, these elements—parameters and statistics, sampling and inference—form the intellectual architecture of social statistics. They remind us that every conclusion we draw about society rests on a bridge between what we observe and what we infer, linking empirical data to theoretical understanding through the language of probability.

2.3 Types of Variables/Data

In social science research, variables can take many forms depending on what they measure and how those measurements are expressed. Distinguishing among types of data is crucial because it determines which statistical summaries, visualizations, and models are appropriate for analysis. Broadly speaking, data can be classified into two overarching types: Continuous and Categorical.

Continuous Data

Quantitative data consist of numerical values that express how much or how many of something there is. These values exist on a numeric scale with meaningful magnitudes, allowing for arithmetic operations such as addition or averaging. Quantitative data can be further divided into two subtypes: Discrete data represent countable quantities that take on distinct, separate values—often integers—with gaps between them. Examples include the number of children in a household, the count of protests in a county, or the number of campaign events attended by a candidate. Because these variables reflect counts, fractional values have no substantive meaning.

Continuous data represent measurements that can, in principle, take on any value within a given interval. There are no inherent gaps in the scale; instead, the precision of measurement depends on the accuracy of the instrument or method used. Examples include age (measured in years, months, or days), household income, or hours of television watched per week. Continuous variables are especially common in survey and administrative data when researchers measure social or economic quantities along a continuum.

Categorical Data

Categorical data, by contrast, represent values that differ in kind rather than degree. These data describe membership in categories, groups, or qualitative states that cannot be meaningfully ordered or subjected to arithmetic operations. Each distinct category is referred to as a level of the variable. Examples include gender identity, religious affiliation, race or ethnicity, political party, or preferred news source.

Within categorical data, researchers often distinguish between:

Nominal variables, where categories have no intrinsic order (e.g., political party: Democrat, Republican, Independent, Other), and Ordinal variables, where categories imply a rank or order but the intervals between them are not uniform (e.g., ideology scale: “very liberal,” “liberal,” “moderate,” “conservative,” “very conservative”).

An Illustrative Example

Consider a political scientist preparing to study voting behavior ahead of a national election. They design a survey collecting a range of variables about registered voters:

- Party identification (Democrat, Republican, Independent, Other) — categorical, nominal

- Political ideology (very liberal to very conservative) — categorical, ordinal

- Voter turnout history (number of elections voted in during the past decade) — quantitative, discrete

- Campaign donations (dollar amount contributed to political campaigns) — quantitative, continuous

- Primary news source (television, online, print, social media) — categorical, nominal

- Age (in years) — quantitative, continuous

- Education (less than high school, high school, some college, college degree, graduate degree) — categorical, ordinal

This list is meant to provide the level of measurement for each variable—nominal, ordinal, binary, discrete, or continuous.

Understanding these distinctions is more than a technical exercise: it informs every step of analysis, from selecting the right summary statistics and visualizations (e.g., bar charts versus histograms) to choosing the appropriate inferential tests (e.g., chi-square, correlation, or regression). In short, recognizing the type of data at hand is a foundational skill for designing, interpreting, and communicating sound social research.

2.4 In-Class Exercise (1): Identifying Variable Types

In this exercise, you will practice classifying variables by their level of measurement—a foundational skill for all empirical social scientists. The table below lists variables drawn from a hypothetical study of registered voters in an upcoming general election. Each variable reflects a different kind of information political researchers might collect: from demographic traits and political attitudes to behaviors such as voting and campaign participation.

Your task is to identify the correct variable type for each entry in the table—whether it is nominal, ordinal, binary, discrete, or continuous. Think carefully about what distinguishes each level of measurement. Ask yourself:

- Does the variable represent categories or quantities?

- If it’s categorical, does it have a natural order (ordinal) or not (nominal)?

- If it’s numeric, does it take on only whole numbers (discrete) or any value along a scale (continuous)?

- Discuss your reasoning with your classmates and be prepared to explain how your classification would influence the choice of graphs or statistical models in a real research project.

| Variables / Characteristics | Variable Type |

|---|---|

| Trust in national government (1 = none … 5 = a lot) | __________ |

| Political interest (1 = not at all … 4 = very interested) | __________ |

| Registered to vote (Yes/No) | __________ |

| Education level (less than HS … graduate degree) | __________ |

| Employment status (employed/unemployed/student/retired) | __________ |

| Belief that vote matters (1 = strongly disagree … 5 = agree) | __________ |

| Frequency of social media political posts (Never … Daily) | __________ |

| Union membership (Yes/No) | __________ |

| Perceived local economic conditions (1 = worse … 5 = better) | __________ |

| Number of times contacted by a campaign this cycle | __________ |

2.5 In-Class Exercise (2):: The 1936 FDR–Landon Poll

During the 1936 U.S. presidential election between Democratic incumbent Franklin D. Roosevelt (FDR) and Republican challenger Alf Landon, The Literary Digest magazine conducted a massive poll to predict the outcome. The magazine mailed 10 million questionnaires to names drawn from its subscriber list, telephone directories, and automobile registration records. About 2.3 million people responded. Based on these replies, the Digest predicted that Landon would win by a landslide of 370 electoral votes.

In contrast, George Gallup, using a carefully designed random sample of about 50,000 respondents, correctly predicted that Roosevelt would win decisively.

This example illustrates how even a very large dataset can lead to inaccurate conclusions if the sample is not representative of the population of interest. It highlights the importance of sampling design and bias in survey research.

Questions:

- Identify the population(s) and parameter(s) of interest to The Literary Digest.

- Was the data collection observational or experimental? Explain your reasoning?

- Describe the sample and the type of data obtained

- What does this example teach us about the relationship between sample size and sample quality in public opinion research?

Extension Activity: Have students compare The Literary Digest poll to a modern equivalent, such as online opt-in surveys. Discuss how selection bias can still occur today even with large datasets.

2.6 A First Look at R and RStudio

Part 1 — Set up your workspace

This chapter guides you through setting up the necessary software for this book. We strongly recommend using RStudio Projects to manage your files, which ensures all examples are reproducible.

1. Prepare Your Course Folder

Start by creating a main location for all your files.

- Create a main class folder on your computer (e.g., on your Desktop or in Documents). Name it something easy to find, like

Stats_BookorSOC205A_Data. - All files, projects, and data for this course will be saved inside this folder.

2. Install the Core Software

You need both R (the statistical programming language) and RStudio (the user interface, or IDE).

Installation Order Matters! You must install R first, and then RStudio Desktop.

- Install R (The Language): Search “Install R” and follow the link to CRAN (The Comprehensive R Archive Network).

- Install RStudio Desktop (The IDE): Search “Install RStudio Desktop” and select the Free/Open Source edition.

3. Start RStudio and Create a Project

RStudio Projects keep your files and paths organized, which is essential when compiling a book.

- Launch RStudio.

- Go to the menu: File \(\to\) New Project \(\to\) New Directory \(\to\) New Project.

- Name the project (e.g.,

lecture-1). - For the location, choose the main class folder you created in Step 1.

- Click Create Project.

4. Sanity Check: Verify R is Working

Use the R Console to confirm that R is installed correctly and is accessible by RStudio.

- Look at the R Console (usually the bottom-left pane).

- Type the following command exactly and press Enter:

To begin, create a new R Markdown file in RStudio. From the menu, select File ▸ New File ▸ R Markdown…, and when prompted, give your document a title such as Lecture 1 Practice. You may choose any output format—Word or PDF is recommended for simplicity, though HTML also works. Once the file opens, save it immediately into your designated class folder with a clear name like Lecture1.Rmd. Next, scroll through the automatically generated template and delete everything beginning with the line ## R Markdown to the end of the file, leaving only the YAML header at the top. This ensures you’re starting with a clean workspace. Finally, click Knit to compile the document. If your setup is correct, a Word, PDF, or HTML file with the same name should appear in your folder. Open it to confirm that RStudio successfully created your first document.

With your document ready, you will now explore one of R’s classic built-in datasets, called iris. This dataset contains measurements of 150 individual irises, divided into three species. To view the data, create a code chunk—either by selecting Code ▸ Insert Chunk from the menu or by typing the shortcut (Ctrl+Alt+I or ⌘⌥I). Inside this code chunk, type print(iris) and run the chunk to display the full dataset in the console. Observe that there are 150 rows representing flowers and 5 variables: (1) Sepal Length, (2) Sepal Width, (3) Petal Length, (4) Petal Width, and (5) Species. The first four variables are quantitative and continuous, while Species is a categorical variable with three distinct levels (setosa, versicolor, and virginica).

Next, examine the structure of the dataset by typing str(iris) in the same or a new code chunk. Running this command reveals the data types of each variable and confirms your earlier observations about their nature—numeric for the first four and factor (categorical) for the fifth. To go a step further, type summary(iris) and execute the command. This function provides numerical summaries for each quantitative variable—displaying the minimum, first quartile, median, mean, third quartile, and maximum—and shows counts for each level of the categorical variable. Discuss with students how summary() automatically adjusts its output depending on variable type, giving a concise overview of both numeric distributions and categorical frequencies.

Once you have run and interpreted these commands, include short written explanations in your R Markdown document to describe what each function does and what you observe in the results. When finished, knit the file again and check the output. You now have your first reproducible R document, complete with code, output, and interpretation. oduce and/or explain your code outside of the code chunk. Knit the word document.

2.7 Working With Real World Dataset: The Iris Data

The Iris dataset contains measurements of iris flowers collected to study how physical characteristics vary across different species. Originally compiled by the British statistician and biologist Ronald A. Fisher in 1936, the dataset was introduced in his classic paper “The Use of Multiple Measurements in Taxonomic Problems.” Fisher used these data to demonstrate one of the earliest applications of discriminant analysis, a statistical method for classifying observations into categories based on quantitative traits. The dataset includes 150 individual iris flowers, divided equally among three species:

*Iris setosa

*Iris versicolor

*Iris virginica

For each flower, Fisher measured four quantitative variables:

*Sepal Length (in centimeters)

*Sepal Width (in centimeters)

*Petal Length (in centimeters)

*Petal Width (in centimeters)

These measurements describe the geometry of the flowers’ petals and sepals—two key parts of the blossom that vary visibly across species. Using these traits, Fisher showed how statistical models could classify species based on measurement patterns, a concept that later became foundational in machine learning and pattern recognition.

Today, the Iris dataset comes preloaded with R and most statistical software. It continues to serve as a pedagogical example for teaching data exploration, visualization, descriptive statistics, classification, and clustering. Although small in size, it captures many of the essential elements of real-world data: multiple variables, distinct categories, and measurable variation within and between groups.

The dataset was originally introduced by the British biologist and statistician Ronald A. Fisher in his 1936 paper, “The Use of Multiple Measurements in Taxonomic Problems.” Fisher used these data to demonstrate one of the first applications of discriminant analysis, a method for classifying observations into groups.

In R, the data are stored as a data frame (a rectangular table similar to a spreadsheet). Because it’s built into R, you can explore it right away:

2.8 Eseential Commands in R

Accessing help

Use ? or help() to pull up documentation; ?? searches help topics.

?iris # help page for the dataset

?data.frame # help page for data frames

help("subset") # equivalent

??"linear model" # fuzzy search across help filesLearning the dataset - Summary Statistics

# loads the dataset (though usually it’s already available)

data(iris)

# shows the first six rows - get a sens eof the dataset

head(iris) Sepal.Length Sepal.Width Petal.Length Petal.Width Species

1 5.1 3.5 1.4 0.2 setosa

2 4.9 3.0 1.4 0.2 setosa

3 4.7 3.2 1.3 0.2 setosa

4 4.6 3.1 1.5 0.2 setosa

5 5.0 3.6 1.4 0.2 setosa

6 5.4 3.9 1.7 0.4 setosaInformation about objects

str(), class(), names(), dim(), and summary() give fast overviews.

# structure (types + preview) - it gives you an overview of the dataset

str(iris) 'data.frame': 150 obs. of 5 variables:

$ Sepal.Length: num 5.1 4.9 4.7 4.6 5 5.4 4.6 5 4.4 4.9 ...

$ Sepal.Width : num 3.5 3 3.2 3.1 3.6 3.9 3.4 3.4 2.9 3.1 ...

$ Petal.Length: num 1.4 1.4 1.3 1.5 1.4 1.7 1.4 1.5 1.4 1.5 ...

$ Petal.Width : num 0.2 0.2 0.2 0.2 0.2 0.4 0.3 0.2 0.2 0.1 ...

$ Species : Factor w/ 3 levels "setosa","versicolor",..: 1 1 1 1 1 1 1 1 1 1 ...# it tells you the types of dataset you are working with

class(iris) [1] "data.frame"# it tells you the name fo the columns in the dataset

names(iris) [1] "Sepal.Length" "Sepal.Width" "Petal.Length" "Petal.Width" "Species" # it tells you the number of rows and columns your dataset.

dim(iris) [1] 150 5# it tells you the numeric summaries + factor counts

summary(iris) Sepal.Length Sepal.Width Petal.Length Petal.Width

Min. :4.300 Min. :2.000 Min. :1.000 Min. :0.100

1st Qu.:5.100 1st Qu.:2.800 1st Qu.:1.600 1st Qu.:0.300

Median :5.800 Median :3.000 Median :4.350 Median :1.300

Mean :5.843 Mean :3.057 Mean :3.758 Mean :1.199

3rd Qu.:6.400 3rd Qu.:3.300 3rd Qu.:5.100 3rd Qu.:1.800

Max. :7.900 Max. :4.400 Max. :6.900 Max. :2.500

Species

setosa :50

versicolor:50

virginica :50

Using packages

Install once; load each session. (Skip install.packages() on shared lab machines if preinstalled.)

# The first command installs the package. The second command (library) "calls" it.

install.packages("tidyverse") # uncomment if neededThe following package(s) will be installed:

- tidyverse [2.0.0]

These packages will be installed into "~/Documents/UCSB/book/r-book-starter/renv/library/macos/R-4.5/aarch64-apple-darwin20".

# Installing packages --------------------------------------------------------

- Installing tidyverse ... OK [linked from cache]

Successfully installed 1 package in 2.9 milliseconds.library(tidyverse) # loads dplyr, ggplot2, etc.The working directory

# tells you your current working directory

getwd() # setwd("~/Stats_Class") # avoid hard-coding; use Projects instead[1] "/Users/masoudmovahed/Documents/UCSB/book/r-book-starter"Operators (arithmetic, relational, logical, assignment)

# Create two numeric vectors from iris so we can practice operations.

x <- iris$Sepal.Length

y <- iris$Petal.Length

# Add two numeric vectors element-by-element (quick math across rows).

x + y [1] 6.5 6.3 6.0 6.1 6.4 7.1 6.0 6.5 5.8 6.4 6.9 6.4 6.2 5.4 7.0

[16] 7.2 6.7 6.5 7.4 6.6 7.1 6.6 5.6 6.8 6.7 6.6 6.6 6.7 6.6 6.3

[31] 6.4 6.9 6.7 6.9 6.4 6.2 6.8 6.3 5.7 6.6 6.3 5.8 5.7 6.6 7.0

[46] 6.2 6.7 6.0 6.8 6.4 11.7 10.9 11.8 9.5 11.1 10.2 11.0 8.2 11.2 9.1

[61] 8.5 10.1 10.0 10.8 9.2 11.1 10.1 9.9 10.7 9.5 10.7 10.1 11.2 10.8 10.7

[76] 11.0 11.6 11.7 10.5 9.2 9.3 9.2 9.7 11.1 9.9 10.5 11.4 10.7 9.7 9.5

[91] 9.9 10.7 9.8 8.3 9.8 9.9 9.9 10.5 8.1 9.8 12.3 10.9 13.0 11.9 12.3

[106] 14.2 9.4 13.6 12.5 13.3 11.6 11.7 12.3 10.7 10.9 11.7 12.0 14.4 14.6 11.0

[121] 12.6 10.5 14.4 11.2 12.4 13.2 11.0 11.0 12.0 13.0 13.5 14.3 12.0 11.4 11.7

[136] 13.8 11.9 11.9 10.8 12.3 12.3 12.0 10.9 12.7 12.4 11.9 11.3 11.7 11.6 11.0# Ask a yes/no question per row: is Sepal.Length > 6? Returns TRUE/FALSE.

x > 6 [1] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[13] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[25] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[37] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[49] FALSE FALSE TRUE TRUE TRUE FALSE TRUE FALSE TRUE FALSE TRUE FALSE

[61] FALSE FALSE FALSE TRUE FALSE TRUE FALSE FALSE TRUE FALSE FALSE TRUE

[73] TRUE TRUE TRUE TRUE TRUE TRUE FALSE FALSE FALSE FALSE FALSE FALSE

[85] FALSE FALSE TRUE TRUE FALSE FALSE FALSE TRUE FALSE FALSE FALSE FALSE

[97] FALSE TRUE FALSE FALSE TRUE FALSE TRUE TRUE TRUE TRUE FALSE TRUE

[109] TRUE TRUE TRUE TRUE TRUE FALSE FALSE TRUE TRUE TRUE TRUE FALSE

[121] TRUE FALSE TRUE TRUE TRUE TRUE TRUE TRUE TRUE TRUE TRUE TRUE

[133] TRUE TRUE TRUE TRUE TRUE TRUE FALSE TRUE TRUE TRUE FALSE TRUE

[145] TRUE TRUE TRUE TRUE TRUE FALSE# Combine two conditions: long sepals AND species is setosa (both must be true).

x > 6 & iris$Species == "setosa" [1] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[13] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[25] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[37] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[49] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[61] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[73] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[85] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[97] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[109] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[121] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[133] FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE FALSE

[145] FALSE FALSE FALSE FALSE FALSE FALSE# Store the average of Sepal.Length so we can reuse it (one number).

x_mean <- mean(x)

# Center each value: “how far is each observation from the average?”

x_centered <- x - x_mean

# Show the first few centered values so I can sanity-check the result quickly.

head(x_centered)[1] -0.7433333 -0.9433333 -1.1433333 -1.2433333 -0.8433333 -0.4433333Getting started with vectors

# Make a tiny numeric vector by hand to see how vectors behave.

v <- c(1, 3.5, 7)

# Tell me how many elements are in this vector.

length(v)[1] 3# Tell me the storage type (numeric, character, logical, etc.).

class(v)[1] "numeric"# Coerce to integer so I see how R converts numeric types.

as.integer(v)[1] 1 3 7# Show unique category labels present in Species (factor levels as characters).

unique(iris$Species)[1] setosa versicolor virginica

Levels: setosa versicolor virginica2.9 Selecting vector elements

# Work with a single numeric column so indexing feels concrete.

x <- iris$Sepal.Width

# Give me the very first value (position 1).

x[1][1] 3.5# Give me the first five values (a range slice).

x[1:5][1] 3.5 3.0 3.2 3.1 3.6# Give me all widths strictly greater than 3.5 (logical filter).

x[x > 3.5] [1] 3.6 3.9 3.7 4.0 4.4 3.9 3.8 3.8 3.7 3.6 4.1 4.2 3.6 3.8 3.8 3.7 3.6 3.8 3.8# Give me the single value that is the maximum (position of the max, then extract).

x[which.max(x)][1] 4.4# Chain two filters: take Sepal.Length from only virginica, then peek at first five.

iris$Sepal.Length[iris$Species == "virginica"][1:5][1] 6.3 5.8 7.1 6.3 6.52.10 Math functions (on numeric columns)

# Central tendency: the typical Petal.Width.

mean(iris$Petal.Width)[1] 1.199333# Robust central tendency (less sensitive to outliers).

median(iris$Petal.Width)[1] 1.3# Distribution landmarks: 25th, 50th, and 75th percentiles.

quantile(iris$Petal.Width, probs = c(.25, .5, .75))25% 50% 75%

0.3 1.3 1.8 # Spread measures: how variable is Petal.Width?

var(iris$Petal.Width)[1] 0.5810063sd(iris$Petal.Width)[1] 0.7622377# Extremes in one shot (min and max).

range(iris$Petal.Width)[1] 0.1 2.5# Linear association strength between two continuous variables.

cor(iris$Sepal.Length, iris$Petal.Length)[1] 0.8717538# Make a tidy, readable number for reporting (rounded mean).

round(mean(iris$Sepal.Width), 2)[1] 3.06# call the package

library(dplyr)

# Keep a clean working copy so the original iris stays untouched.

df <- iris

# Filter rows by conditions, then keep only the columns I care about for this view.

df_small <- df %>%

filter(Sepal.Length > 6, Species != "setosa") %>%

select(Sepal.Length, Petal.Length, Species)

# Create useful ratios that often separate species nicely (new columns).

df_features <- df %>%

mutate(

sepal_ratio = Sepal.Length / Sepal.Width,

petal_ratio = Petal.Length / Petal.Width

)

# Sort rows by a new metric so the most extreme cases float to the top.

df_sorted <- df_features %>%

arrange(desc(sepal_ratio))

# Collapse rows to species-level facts: counts and key summaries for reporting.

by_species <- df %>%

group_by(Species) %>%

summarise(

n = n(),

mean_sepal_len = mean(Sepal.Length),

mean_petal_len = mean(Petal.Length),

sd_petal_wid = sd(Petal.Width),

.groups = "drop"

)

# Print the grouped summary so I can read the species differences at a glance.

by_species# A tibble: 3 × 5

Species n mean_sepal_len mean_petal_len sd_petal_wid

<fct> <int> <dbl> <dbl> <dbl>

1 setosa 50 5.01 1.46 0.105

2 versicolor 50 5.94 4.26 0.198

3 virginica 50 6.59 5.55 0.2752.11 Subseting in R

# Human-readable filtering: keep setosa with Sepal.Length > 5; keep 3 named columns.

subset(iris, Species == "setosa" & Sepal.Length > 5.0,

select = c(Sepal.Length, Sepal.Width, Species)) Sepal.Length Sepal.Width Species

1 5.1 3.5 setosa

6 5.4 3.9 setosa

11 5.4 3.7 setosa

15 5.8 4.0 setosa

16 5.7 4.4 setosa

17 5.4 3.9 setosa

18 5.1 3.5 setosa

19 5.7 3.8 setosa

20 5.1 3.8 setosa

21 5.4 3.4 setosa

22 5.1 3.7 setosa

24 5.1 3.3 setosa

28 5.2 3.5 setosa

29 5.2 3.4 setosa

32 5.4 3.4 setosa

33 5.2 4.1 setosa

34 5.5 4.2 setosa

37 5.5 3.5 setosa

40 5.1 3.4 setosa

45 5.1 3.8 setosa

47 5.1 3.8 setosa

49 5.3 3.7 setosa# Same operation using bracket syntax (what R is doing under the hood).

iris[iris$Species == "setosa" & iris$Sepal.Length > 5.0,

c("Sepal.Length", "Sepal.Width", "Species")] Sepal.Length Sepal.Width Species

1 5.1 3.5 setosa

6 5.4 3.9 setosa

11 5.4 3.7 setosa

15 5.8 4.0 setosa

16 5.7 4.4 setosa

17 5.4 3.9 setosa

18 5.1 3.5 setosa

19 5.7 3.8 setosa

20 5.1 3.8 setosa

21 5.4 3.4 setosa

22 5.1 3.7 setosa

24 5.1 3.3 setosa

28 5.2 3.5 setosa

29 5.2 3.4 setosa

32 5.4 3.4 setosa

33 5.2 4.1 setosa

34 5.5 4.2 setosa

37 5.5 3.5 setosa

40 5.1 3.4 setosa

45 5.1 3.8 setosa

47 5.1 3.8 setosa

49 5.3 3.7 setosa2.12 UC Berkeley Admissions: A Compact Case Study

The UCBAdmissions dataset records graduate admissions at UC Berkeley in Fall 1973, cross-classified by Admit (Admitted/Rejected), Gender (Male/Female), and Dept (A–F). The data are counts—not individual rows—so each combination of (Admit × Gender × Dept) has a frequency. This structure is ideal for learning how to move between tables and data frames, compute margins and conditional proportions, test independence with chi-square, visualize joint and conditional distributions, and fit logistic regressions with frequency weights. A famous feature of this dataset is that aggregate rates suggest women were admitted at lower rates than men; however, within departments the pattern largely reverses or disappears. This is a textbook example of Simpson’s paradox: an association seen in the aggregate can flip (or vanish) once you condition on a confounder (here, department selectivity and application patterns).

Learn the Dataset

This section orients students to the UC Berkeley admissions data and gets it into an analysis-ready shape. We begin by loading a three-way contingency table that records counts by admission outcome, gender, and department. We inspect its structure to understand the dimensions and categories the table contains. Then we convert the multiway table into a tidy data frame so each row represents one unique combination of outcome, gender, and department with an associated count—exactly the form most tools expect for plotting and modeling. A quick glance at the first few rows confirms the variable names and values, and a simple total of the count column verifies that the number of applications is consistent. In short, we’ve transformed a compact but opaque table into a clear, row-wise dataset that’s easy to analyze and visualize.

# Load the built-in table: 3-way (Admit x Gender x Dept) with integer counts.

data(UCBAdmissions)

# See the structure: it's a 'table' with dimensions and factor levels.

str(UCBAdmissions) 'table' num [1:2, 1:2, 1:6] 512 313 89 19 353 207 17 8 120 205 ...

- attr(*, "dimnames")=List of 3

..$ Admit : chr [1:2] "Admitted" "Rejected"

..$ Gender: chr [1:2] "Male" "Female"

..$ Dept : chr [1:6] "A" "B" "C" "D" ...# Turn multiway table into a tidy data frame with one row per cell + a 'Freq' column.

ucb <- as.data.frame(UCBAdmissions)

# Peek at the first rows to understand variable names and counts.

head(ucb) Admit Gender Dept Freq

1 Admitted Male A 512

2 Rejected Male A 313

3 Admitted Female A 89

4 Rejected Female A 19

5 Admitted Male B 353

6 Rejected Male B 207# Sanity check: total applications should equal the sum of frequencies.

sum(ucb$Freq)[1] 4526Descriptive Totals and Admission Rates

These steps build the essential summaries you need before plotting or modeling. First, it collapses the dataset to compare overall admission rates by gender in the aggregate—answering “what fraction of women vs. men were admitted, ignoring departments?” Next, it drills down to the within-department view, computing admission rates for women and men separately inside each department so you can see whether patterns hold once you compare like with like. Finally, it calculates each department’s baseline selectivity—the overall admit rate regardless of gender—so you can rank departments from most to least selective. Together, these summaries set up the core lesson: aggregate gaps can differ from conditional gaps, and understanding both levels is crucial for sound social-science inference.

# Create a 2×2 table of counts aggregated over departments:

# rows = Admit (Admitted/Rejected), cols = Gender (Female/Male).

agg_gender <- margin.table(UCBAdmissions, margin = c(1, 2))

# Convert that 2×2 into conditional proportions *by gender*:

# i.e., within each gender column, divide by that gender’s total applicants.

admits_by_gender <- prop.table(agg_gender, margin = 2)

# Pull just the admitted row so we see “admitted share” for each gender.

admits_by_gender["Admitted", ] Male Female

0.4451877 0.3035422 # For each department (the 3rd dimension of the 3-way table),

# compute the admitted share *by gender* within that department.

by_dept <- apply(UCBAdmissions, 3, function(tab2){

# Inside each department’s 2×2 (Admit × Gender), condition on columns (gender)

# then pick the "Admitted" row to get admitted share by gender.

prop.table(tab2, margin = 2)["Admitted", ]

})

# Transpose so departments become rows and genders become columns (easier to read).

t(by_dept) Admit

Dept Male Female

A 0.62060606 0.82407407

B 0.63035714 0.68000000

C 0.36923077 0.34064081

D 0.33093525 0.34933333

E 0.27748691 0.23918575

F 0.05898123 0.07038123# Also compute each department’s overall selectivity:

# admitted share across all genders within each department.

dept_selectivity <- apply(UCBAdmissions, 3, function(tab2) prop.table(tab2)["Admitted"])

# Print the department selectivity vector to inspect which depts are more/less selective.

dept_selectivity A B C D E F

NA NA NA NA NA NA Data Managemnt Tools

Here, we’re reshaping and summarizing the raw UC Berkeley admissions data so that each row represents a unique combination of department and gender. For every department–gender pair, we total the number of admitted and rejected applicants, calculate how many people applied in total, and prepare the data so that each row is a clean, self-contained summary of that group. The result is a dataset that’s ready for visualization—each row gives a compact snapshot of admissions for that department and gender, making it easy to compare patterns in the plots that follow.

# Load wrangling helpers: dplyr for pipes/grouping; tidyr for wide/long reshaping.

library(dplyr); library(tidyr)

# Start from the tidy data frame (Admit, Gender, Dept, Freq) and make one row per Dept × Gender:

dg <- ucb %>%

# First, get totals by Dept × Gender × Admit (safe even if already unique).

group_by(Dept, Gender, Admit) %>%

summarise(Freq = sum(Freq), .groups = "drop") %>%

# Spread Admit (Admitted/Rejected) into two numeric columns for easy math.

pivot_wider(names_from = Admit, values_from = Freq) %>%

# Total applicants in this Dept × Gender cell = admitted + rejected.

mutate(n = Admitted + Rejected) %>%

# Tell dplyr: treat each row independently so per-row tests/CI work cleanly.

rowwise() %>%

mutate(

# Run a binomial test per row to get a 95% CI for the admitted proportion k/n.

.pt = list(prop.test(Admitted, n)),

# Point estimate: admitted share = k/n.

p_hat = Admitted / n,

# Lower bound of 95% CI from prop.test’s result.

lwr = .pt$conf.int[1],

# Upper bound of 95% CI from prop.test’s result.

upr = .pt$conf.int[2]

) %>%

# Return to regular (non-rowwise) data frame and drop the temporary test object.

ungroup() %>%

select(-.pt)

# Sanity check: make sure the columns we need for plotting exist.

stopifnot(all(c("Dept","Gender","p_hat","lwr","upr") %in% names(dg)))Visualizing the Structure Simple Barplot

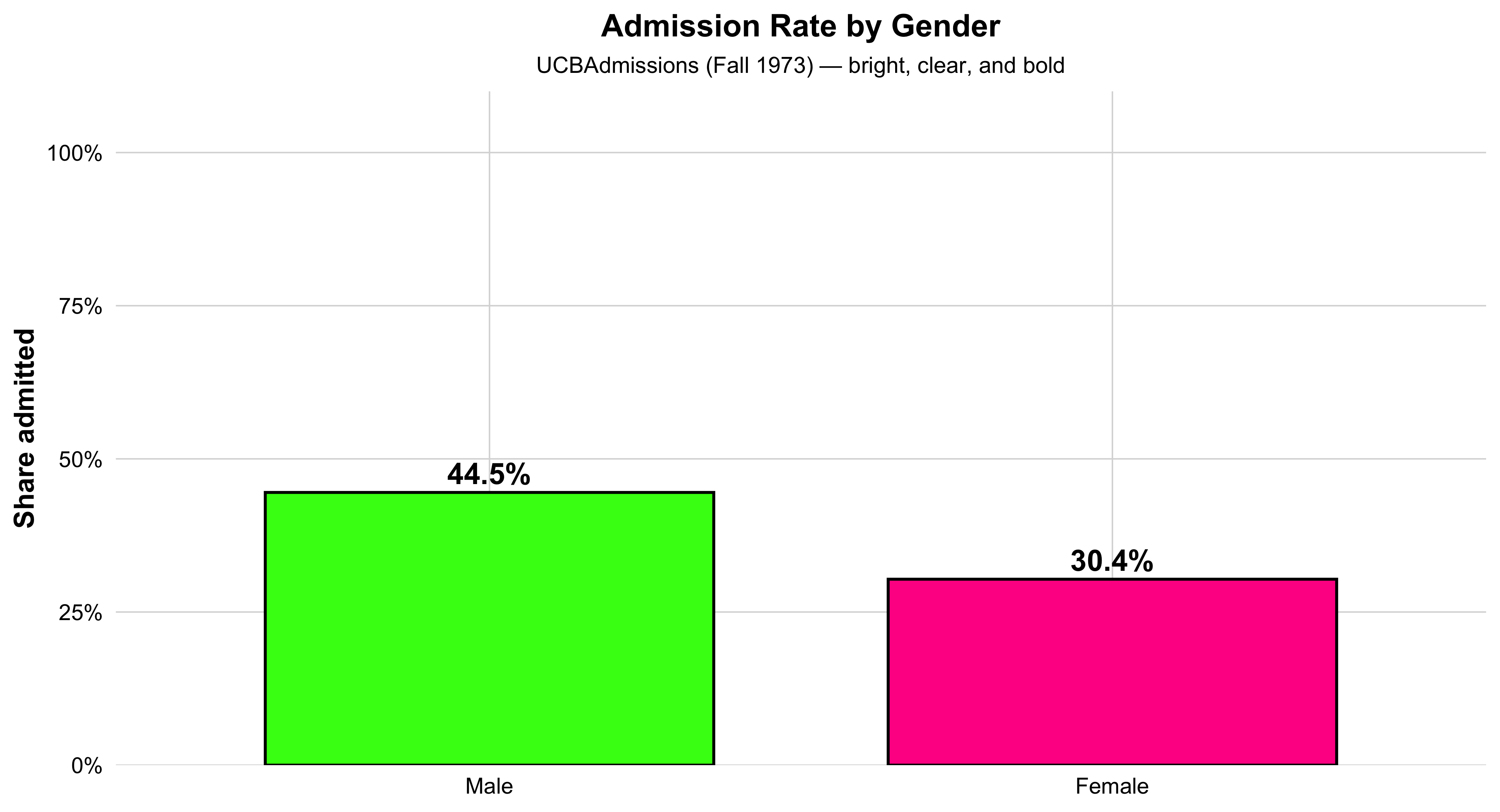

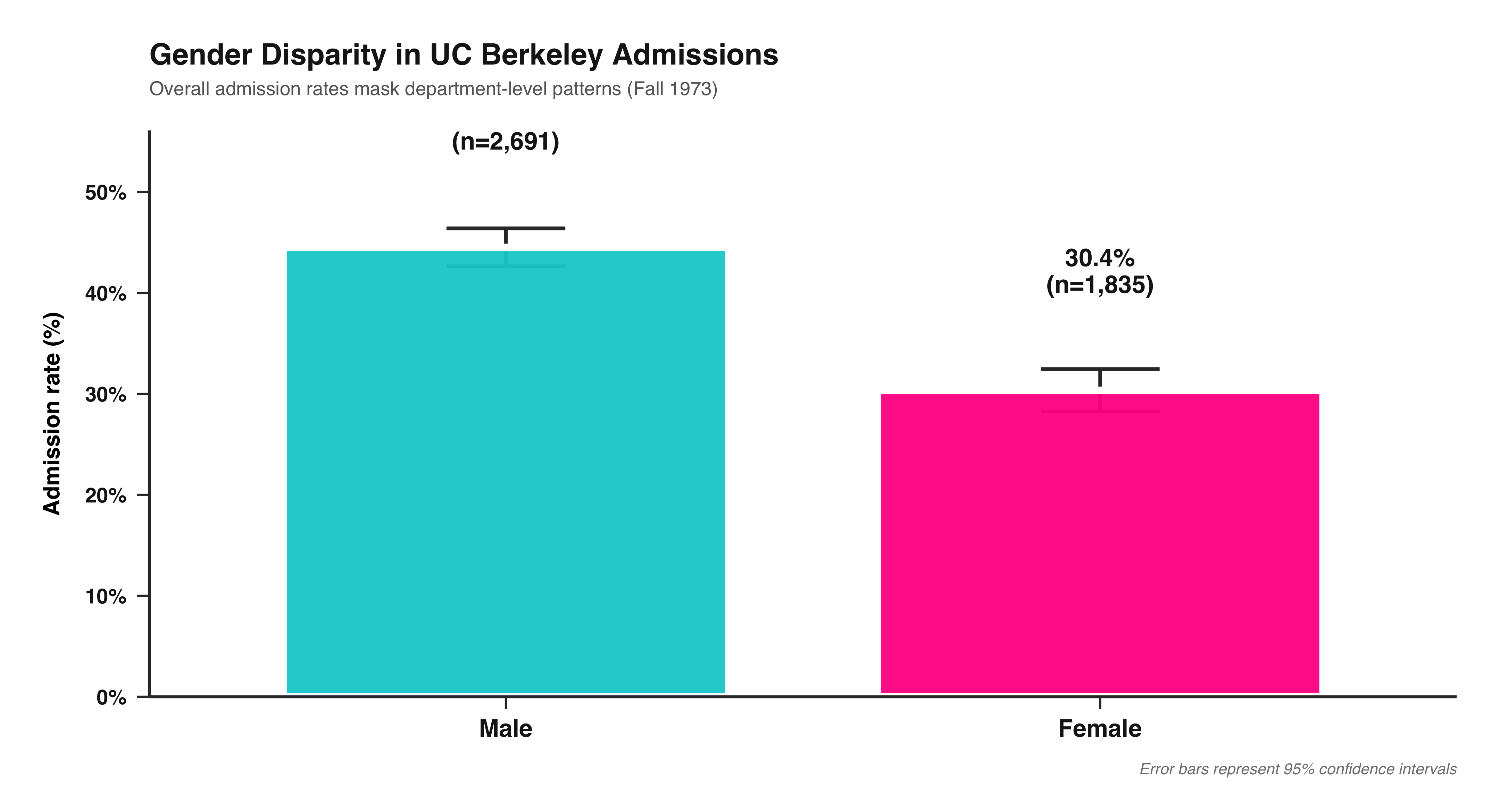

This bar chart shows the overall admission rate by gender at UC Berkeley in 1973. Each bar represents one gender, and its height corresponds to the percentage of applicants admitted. The green bar shows the rate for women, and the orange bar shows the rate for men. The percent labels above each bar make the difference clear at a glance.

This visualization distills the data to its most basic 2×2 form—Admit × Gender—providing an aggregate snapshot before looking at differences across departments. It’s an ideal introductory plot for teaching how to summarize multidimensional data into a clear comparison of group proportions. In a single view, students can see the broad pattern that originally sparked debate about gender bias in UC Berkeley admissions—setting up the need for deeper, department-level analysis later on.

# 1) Prepare 2x2 Admit × Gender table with overall admitted proportions

agg2 <- as.data.frame(margin.table(UCBAdmissions, c(1, 2))) |>

tidyr::pivot_wider(names_from = Admit, values_from = Freq) |>

dplyr::mutate(n = Admitted + Rejected,

pct = Admitted / n)

# 2) Neon-inspired, high-contrast palette

flashy_pal <- c("Female" = "#FF1493",

"Male" = "#39FF14")

# 3) Plot with big bars, bold labels, and white background

ggplot(agg2, aes(x = Gender, y = pct, fill = Gender)) +

geom_col(width = 0.72, color = "black", linewidth = 0.8, show.legend = FALSE) +

geom_text(aes(label = scales::percent(pct, accuracy = 0.1)),

vjust = -0.4, size = 6, fontface = "bold", color = "black") +

scale_y_continuous(labels = scales::percent_format(),

limits = c(0, 1),

expand = expansion(mult = c(0, 0.10))) +

scale_fill_manual(values = flashy_pal) +

labs(

title = "Admission Rate by Gender",

subtitle = "UCBAdmissions (Fall 1973) — bright, clear, and bold",

x = NULL, y = "Share admitted"

) +

theme_minimal(base_size = 16) +

theme(

plot.background = element_rect(fill = "white", color = NA),

panel.background = element_rect(fill = "white", color = NA),

panel.grid.major = element_line(color = "grey85", linewidth = 0.4),

panel.grid.minor = element_blank(),

axis.text = element_text(color = "black"),

axis.title.y = element_text(face = "bold"),

plot.title = element_text(face = "bold", hjust = 0.5, size = 18),

plot.subtitle = element_text(hjust = 0.5, size = 13)

)

Visualizing the Structure by a Simple Barplot - By Department Divisions

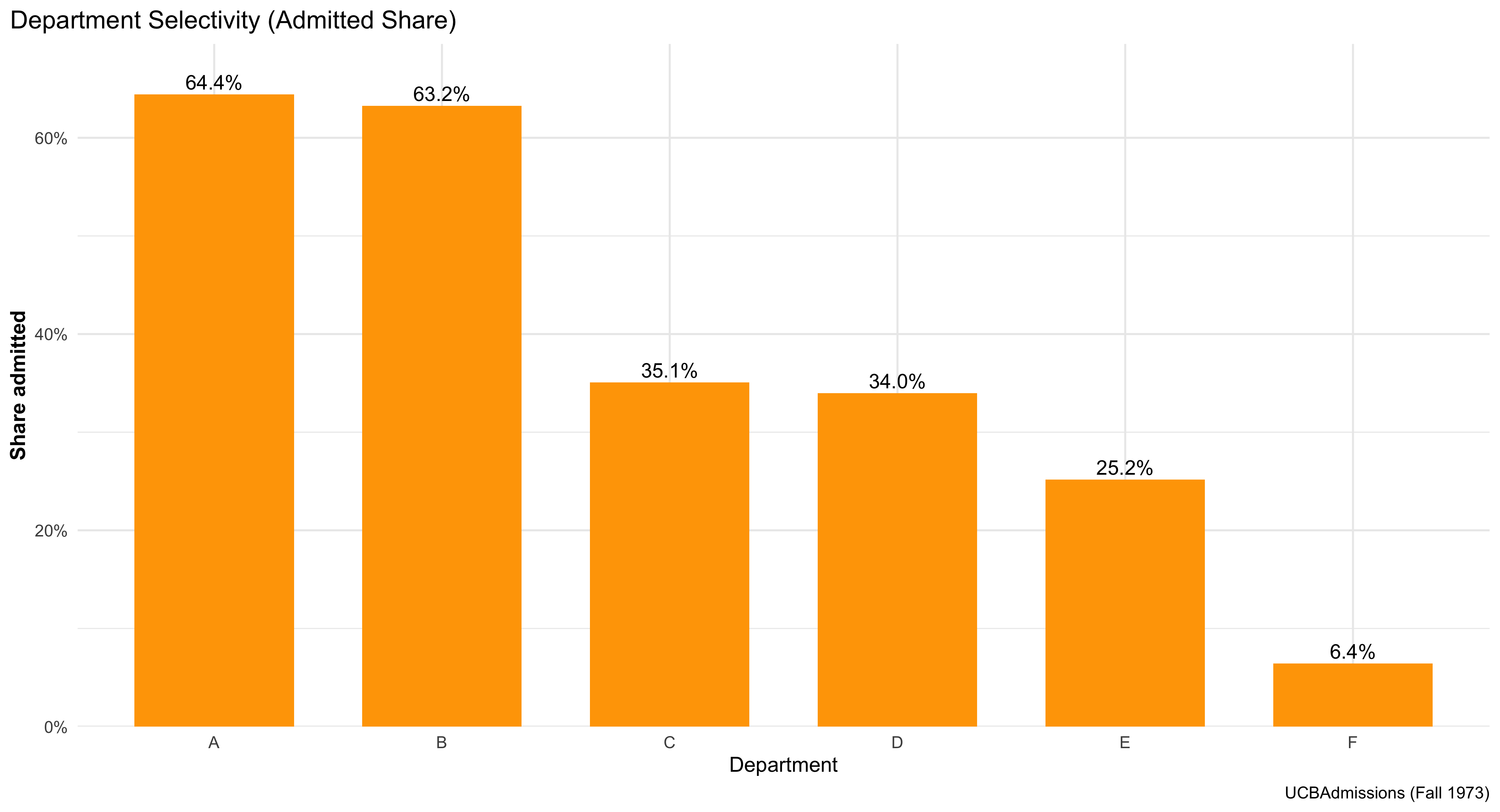

This bar graph shows how selective each department is at UC Berkeley. Each orange bar represents one department, and the height of the bar indicates the percentage of applicants who were admitted. The taller the bar, the higher the admit rate — meaning that department is less selective. Shorter bars show more competitive departments where fewer applicants were admitted. The percentage label above each bar gives the exact admit rate, making it easy to compare departments directly. Overall, the chart gives a clear visual summary of which departments are more or less selective in admissions.

#| label: ucb-admit-bar-gender

#| fig-width: 7

#| fig-height: 4.6

#| message: false

#| warning: false

# admitted share by department (overall, genders combined)

dept_select <- as.data.frame(margin.table(UCBAdmissions, c(1,3))) |>

pivot_wider(names_from = Admit, values_from = Freq) |>

mutate(n = Admitted + Rejected,

pct = Admitted / n)

ggplot(dept_select, aes(Dept, pct)) +

geom_col(width = 0.70, fill = "orange") + # purple from your example vibe

geom_text(aes(label = percent(pct, accuracy = 0.1)),

vjust = -0.35, size = 4.1) +

scale_y_continuous(labels = percent_format(),

expand = expansion(mult = c(0, .08))) +

labs(title = "Department Selectivity (Admitted Share)",

x = "Department", y = "Share admitted",

caption = "UCBAdmissions (Fall 1973)") +

theme_minimal(base_size = 12) +

theme(plot.title.position = "plot",

axis.title.y = element_text(face = "bold"))

Visualizing the Structure (Mosaic & Bar Plots)

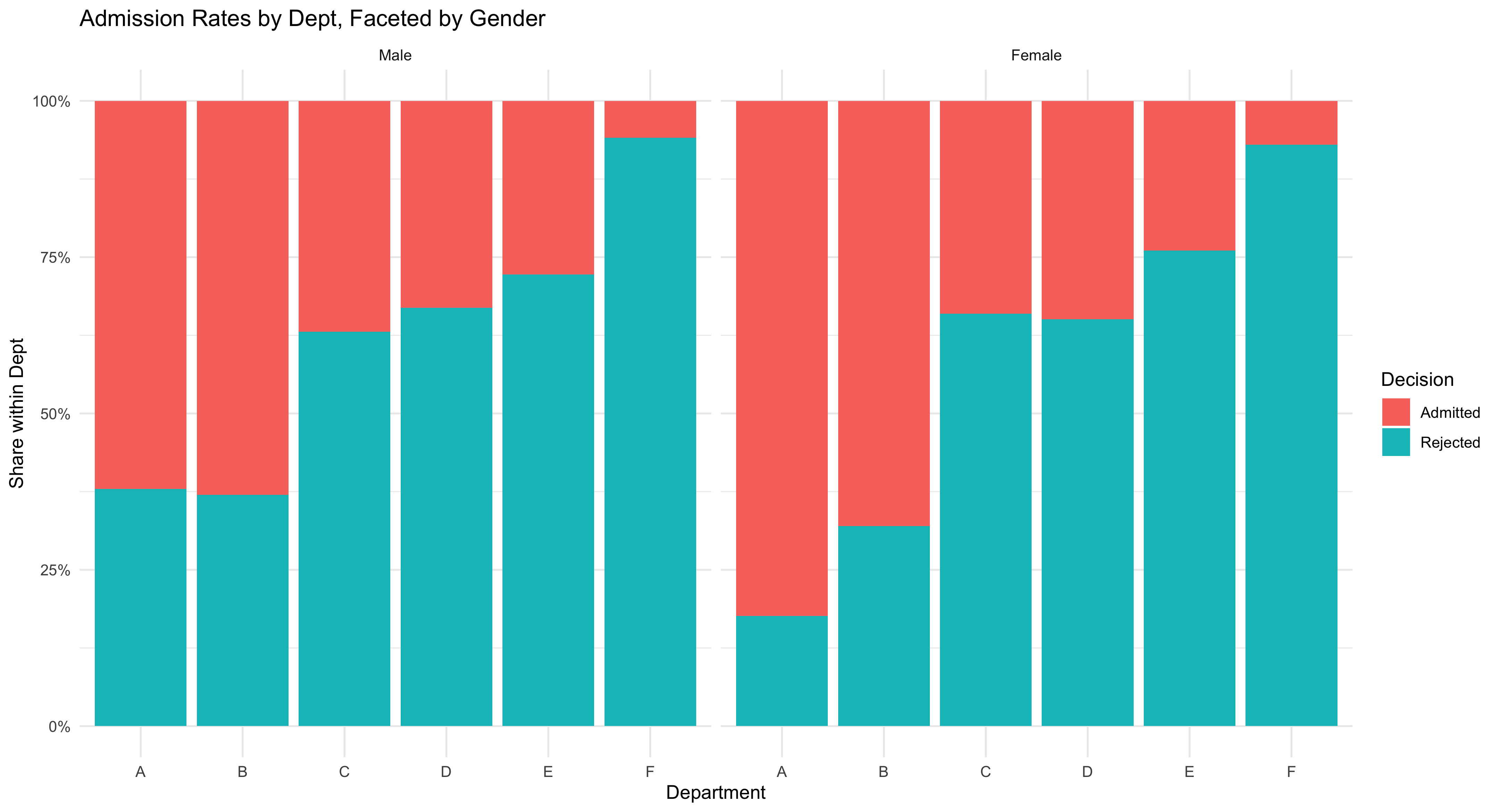

This figure shows a clean, comparative view of admissions outcomes across departments and by gender, using a simple stacked bar chart. Each bar represents one department at UC Berkeley, and the colored segments show how many applicants were admitted versus rejected. Faceting the chart by gender creates two panels—one for women and one for men—so students can easily compare patterns side by side without crowding the display.

The chart emphasizes relative shares visually: taller “admitted” segments mean higher success rates within that department, while smaller ones indicate lower acceptance. By comparing across panels, you can see whether men and women tended to be admitted at similar rates in each department.

This kind of bar chart is one of the most intuitive ways to display categorical outcomes, helping students connect data structure to interpretation. It also reinforces a central lesson in social statistics: before moving to complex models, we first use clear, direct visuals to explore how outcomes differ across groups and institutional contexts.

# Tidy bar plot: admitted proportion by gender within department.

# (Requires ggplot2; included if you loaded tidyverse.)

# Load plotting and formatting helpers (percent labels).

library(ggplot2); library(scales)

# Draw stacked bars per department, scaled to *proportions* (“position = 'fill'”),

# and facet the figure so Female and Male are shown in separate panels.

ggplot(ucb, aes(x = Dept, y = Freq, fill = Admit)) +

# Stack admitted + rejected to 100% within each Dept for each facet.

geom_col(position = "fill") +

# One facet per Gender so we can compare shapes directly.

facet_wrap(~ Gender) +

# Pretty percent labels on the y axis.

scale_y_continuous(labels = percent) +

# Clear title and axis labels so readers know exactly what’s being shown.

labs(title = "Admission Rates by Dept, Faceted by Gender",

y = "Share within Dept", x = "Department", fill = "Decision") +

# Minimal theme keeps focus on the data.

theme_minimal()

More Sophisticated Way of Visualizing

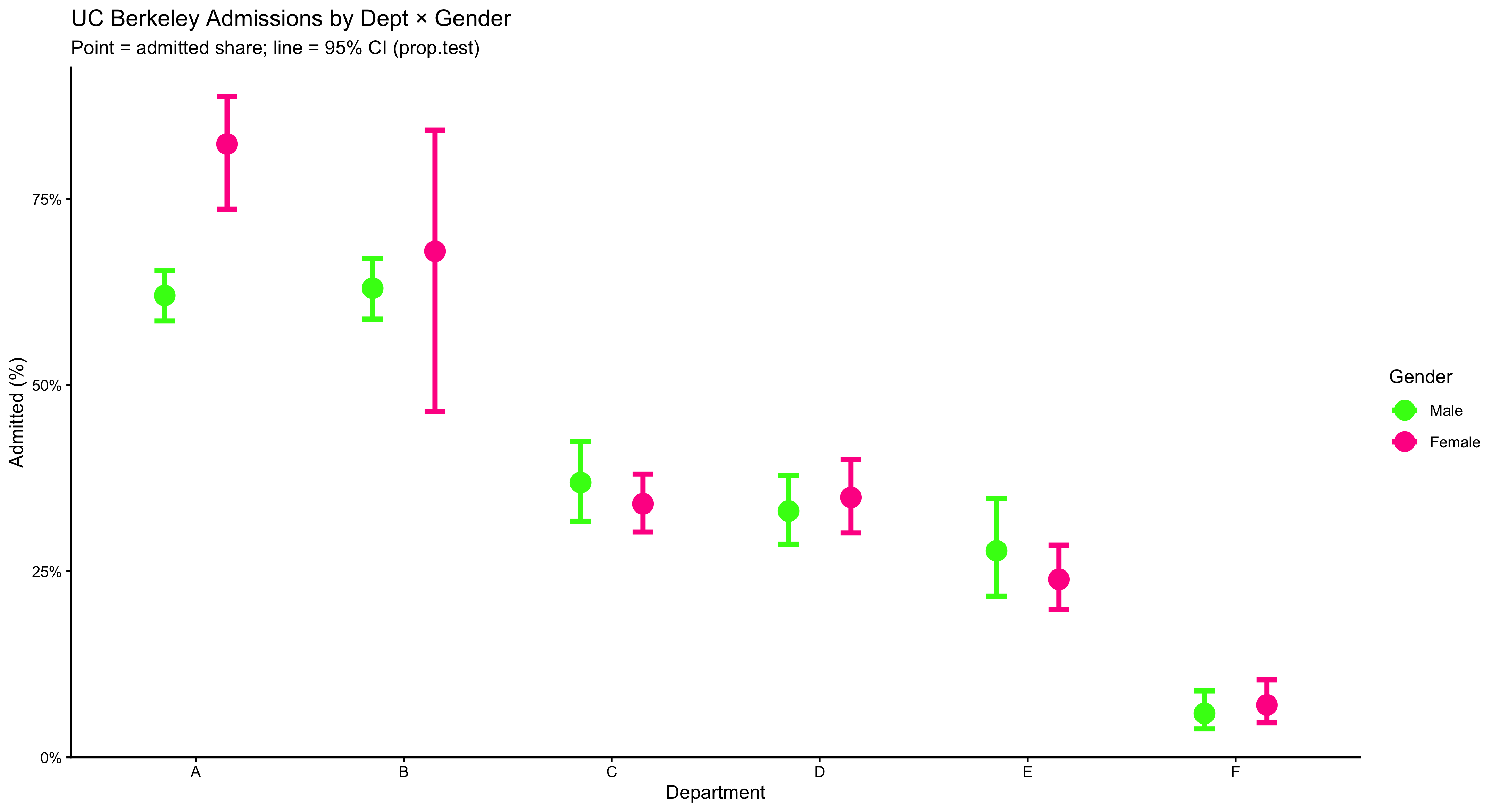

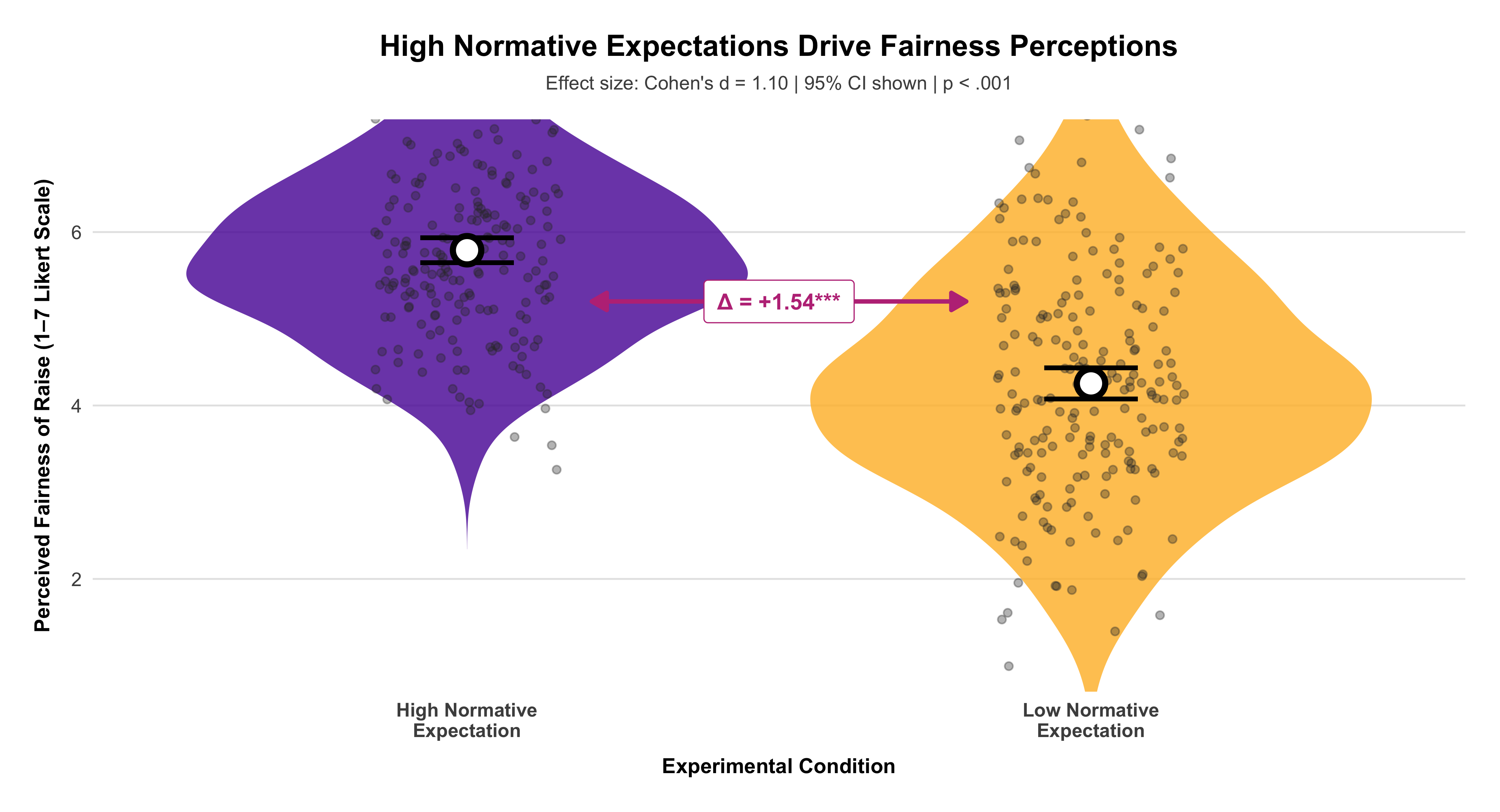

This chart compares admission rates by gender within each department, while also showing uncertainty. Each department appears on the x-axis with two side-by-side (“dodged”) points: one for female and one for male, where the point’s height is the estimated admitted share in that department. The thick vertical bars through each point are 95% confidence intervals from prop.test, which indicate the range of values consistent with the data; shorter bars mean more precise estimates (usually more applicants), and longer bars mean less precision. You may read it as such: within a given department, compare the two points (who is higher?) and check whether the CIs overlap (is the difference clearly large or could it plausibly be zero?). The y-axis is in percent, capped at 90% to keep the comparisons visually clear. The bright colors and large markers make the pairwise contrast obvious, but the substantive message is about conditional comparisons (gender within department) and uncertainty (CIs). Use this figure to teach students that headline gaps can vanish or flip once you condition on the relevant grouping and account for sampling variability.

# Plot the Dept × Gender admitted share with gaudy colors & bigger markers

ggplot(dg, aes(Dept, p_hat, color = Gender)) +

# Thick, obvious 95% CI bars; widened dodge so points don't overlap

geom_errorbar(aes(ymin = lwr, ymax = upr),

position = position_dodge(width = 0.6),

width = 0.2, linewidth = 1.4) +

# Big, loud points

geom_point(position = position_dodge(width = 0.6),

size = 5.2) +

# Percent axis

scale_y_continuous(labels = scales::percent, limits = c(0, 0.9),

expand = expansion(mult = c(0, 0.03))) +

# Neon, high-contrast palette

scale_color_manual(values = c(

"Female" = "#FF1493", # deep magenta / neon pink

"Male" = "#39FF14" # neon green

)) +

labs(title = "UC Berkeley Admissions by Dept × Gender",

subtitle = "Point = admitted share; line = 95% CI (prop.test)",

x = "Department", y = "Admitted (%)", color = "Gender") +

# Make legend keys big enough to match the jumbo points

guides(color = guide_legend(override.aes = list(size = 5))) + theme_classic()

2.13 Even A More Sophisticated Way of Visualization

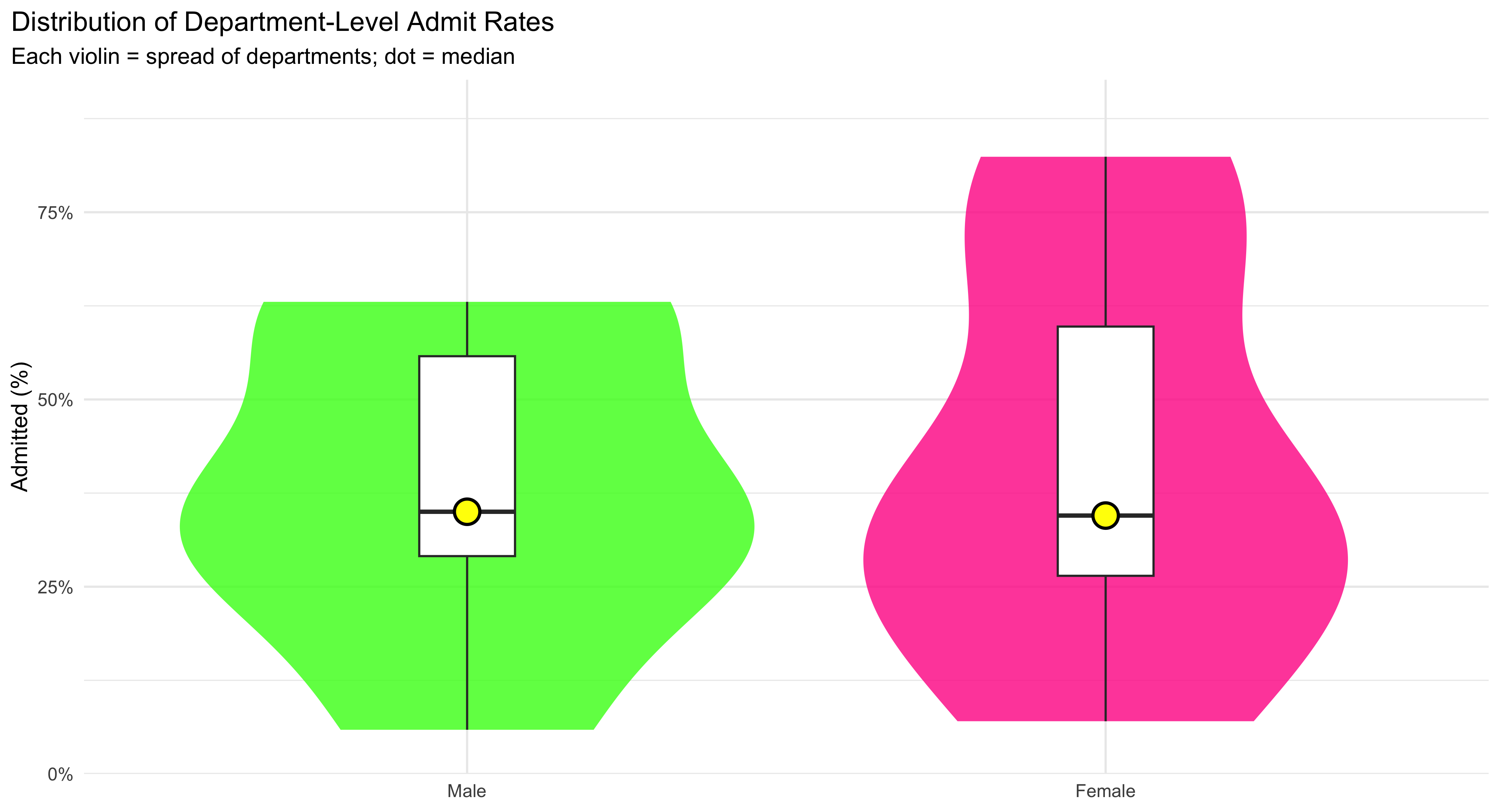

This figure shows how admission rates are distributed across departments for each gender, not just a single average. The wide, smooth violin shapes trace the full distribution of department-level admit rates—thicker sections mean more departments fall in that range, thinner sections mean fewer. Over the violin, the boxplot adds quick landmarks: the box spans the interquartile range (IQR) where the middle 50% of departments lie, and the line inside the box marks the median. To make that median impossible to miss, a large yellow dot is plotted right on it. Reading the plot is straightforward: compare the height and thickness of the two violins to see differences in spread and typical values, and compare the median dots to see which gender tends to have higher rates across departments. Because the y-axis is in percent and capped at 90%, the common range is easy to scan without squashing the detail. Use this plot when you want students to see distributional differences (center and spread) across groups, not just their means.

# Compare the *distribution* of department-level admitted shares by gender:

# violin = overall shape; box = IQR; black dot = median.

ggplot(dg, aes(Gender, p_hat, fill = Gender)) +

# Smooth density shape of dept-level rates per gender.

geom_violin(alpha = 0.8, color = NA, width = 0.9) +

# Boxplot overlay shows median + IQR without outliers drawn.

geom_boxplot(width = 0.15, fill = "white", outlier.shape = NA) +

# Add a dot at the median for quick comparison.

stat_summary(fun = median, geom = "point", size = 6,

shape = 21, stroke = 1.3, color = "black",

fill = "#FFFF00") +

# Percent axis, clipped to [0, 0.9] with a little visual breathing room.

scale_y_continuous(labels = percent_format(accuracy = 1),

limits = c(0, 0.9),

expand = expansion(mult = c(0, .03))) +

# Manually set fills so the palette matches prior plots.

scale_fill_manual(values = c("Female" = "#FF1493", "Male" = "#39FF14")) +

# Title/subtitle clarify what each glyph represents.

labs(title = "Distribution of Department-Level Admit Rates",

subtitle = "Each violin = spread of departments; dot = median",

x = NULL, y = "Admitted (%)") +

# Clean theme, slightly larger base text, and hide redundant legend.

theme_minimal(base_size = 13) +

theme(legend.position = "none",

plot.title.position = "plot")

2.14 Visualization Faceted by Department at UC Berkeley

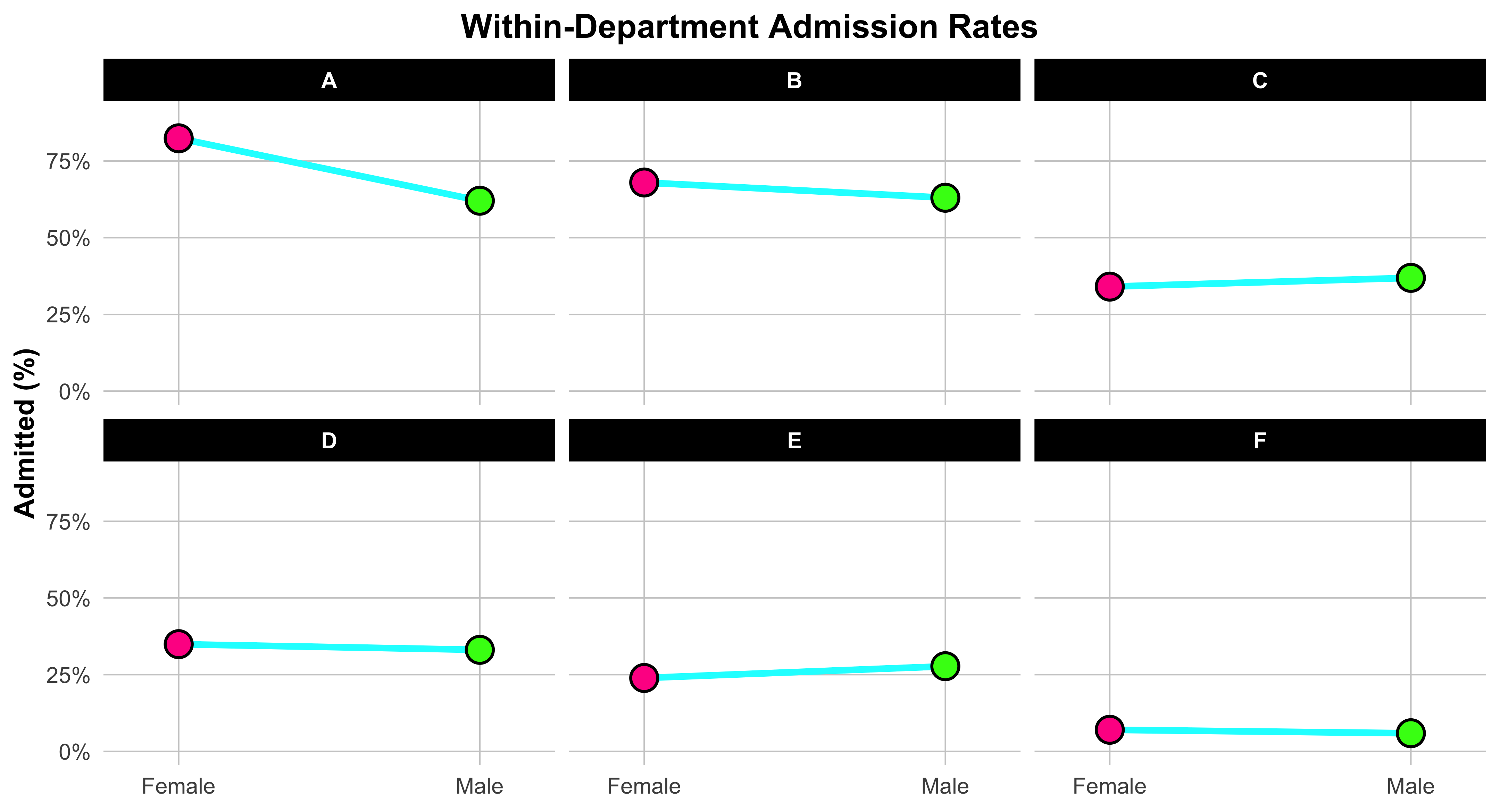

This plot helps us visualize gender differences in admission rates within each department. We start by reshaping the data so that each department has one column for the female admission rate and one for the male admission rate. This “wide” format makes it easy to connect each department’s two values with a line.

Each line links the female rate (on the left) to the male rate (on the right), showing at a glance which gender had the higher admission rate within that department. The slope and direction of the line are what matter: if the line tilts upward to the right, men were admitted at a higher rate; if it tilts downward, women were admitted at a higher rate. The bright neon colors and bold points make the contrast unmistakable. Faceting the plot by department creates one small panel per department, so you can compare patterns across them without clutter. The x-axis simply labels “Female” and “Male,” while the y-axis shows the percent admitted. In short, this figure teaches a key skill in data visualization: how to display paired comparisons across categories while preserving the structure of the data. Each line tells its own small story about one department—and together, they reveal the broader pattern behind the Simpson’s paradox in UC Berkeley admissions.

dg_wide <- dg |>

select(Dept, Gender, p_hat) |>

pivot_wider(names_from = Gender, values_from = p_hat) |>

drop_na()

ggplot(dg_wide) +

# Fat, neon connector lines per department

geom_segment(aes(x = 1, xend = 2, y = Female, yend = Male),

color = "#00FFFF", linewidth = 1.8, lineend = "round", na.rm = TRUE) +

# Female endpoint (left) — jumbo neon pink with black outline

geom_point(aes(x = 1, y = Female),

shape = 21, size = 6.5, stroke = 1.2,

fill = "#FF1493", color = "black", na.rm = TRUE) +

# Male endpoint (right) — jumbo neon green with black outline

geom_point(aes(x = 2, y = Male),

shape = 21, size = 6.5, stroke = 1.2,

fill = "#39FF14", color = "black", na.rm = TRUE) +

# Zoom without dropping rows

coord_cartesian(ylim = c(0, 0.90)) +

# Two labeled x positions with extra breathing room

scale_x_continuous(breaks = c(1, 2), labels = c("Female", "Male"),

expand = expansion(add = 0.25)) +

# Percent labels for y

scale_y_continuous(labels = percent_format(accuracy = 1)) +

# One panel per department

facet_wrap(~ Dept, nrow = 2) +

# --- Keep your original descriptive text exactly ---

labs(title = "Within-Department Admission Rates",

x = NULL, y = "Admitted (%)") +

# Gaudy-friendly theme tweaks with centered title

theme_minimal(base_size = 16) +

theme(

legend.position = "none",

plot.title.position = "plot",

plot.title = element_text(face = "bold", hjust = 0.5), # Center title

plot.subtitle = element_text(hjust = 0.5), # Center subtitle

axis.title.y = element_text(face = "bold"),

panel.grid.major = element_line(linewidth = 0.4, color = "grey80"),

panel.grid.minor = element_blank(),

strip.text = element_text(face = "bold", color = "white"),

strip.background = element_rect(fill = "black", color = NA)

)

2.15 Selecting Appropriate Graphical Summaries

- Quantitative: histograms/box plots (large n); dot plots (small n).

- Categorical: bar charts or frequency tables; pie charts only when categories are few and distinct.

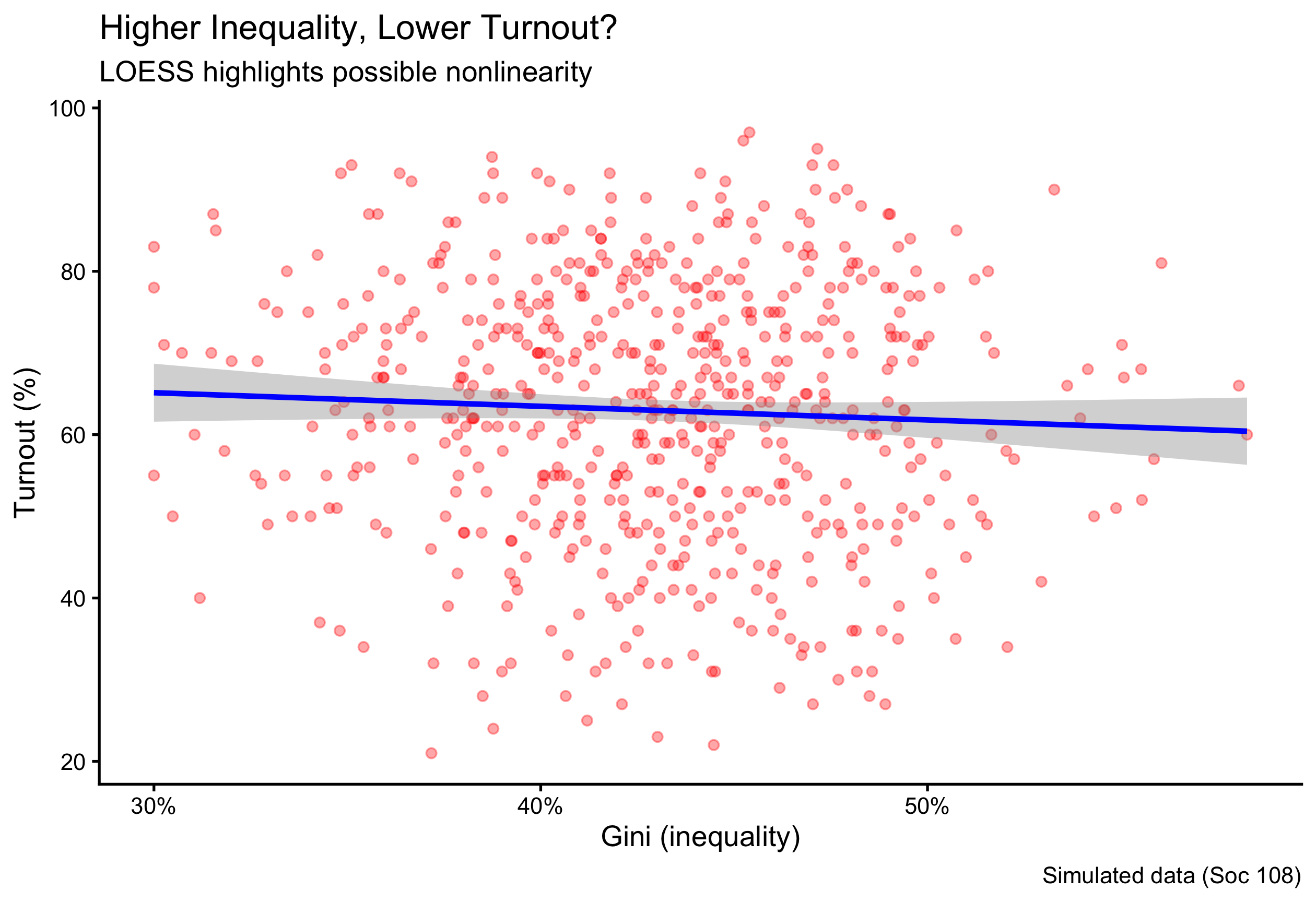

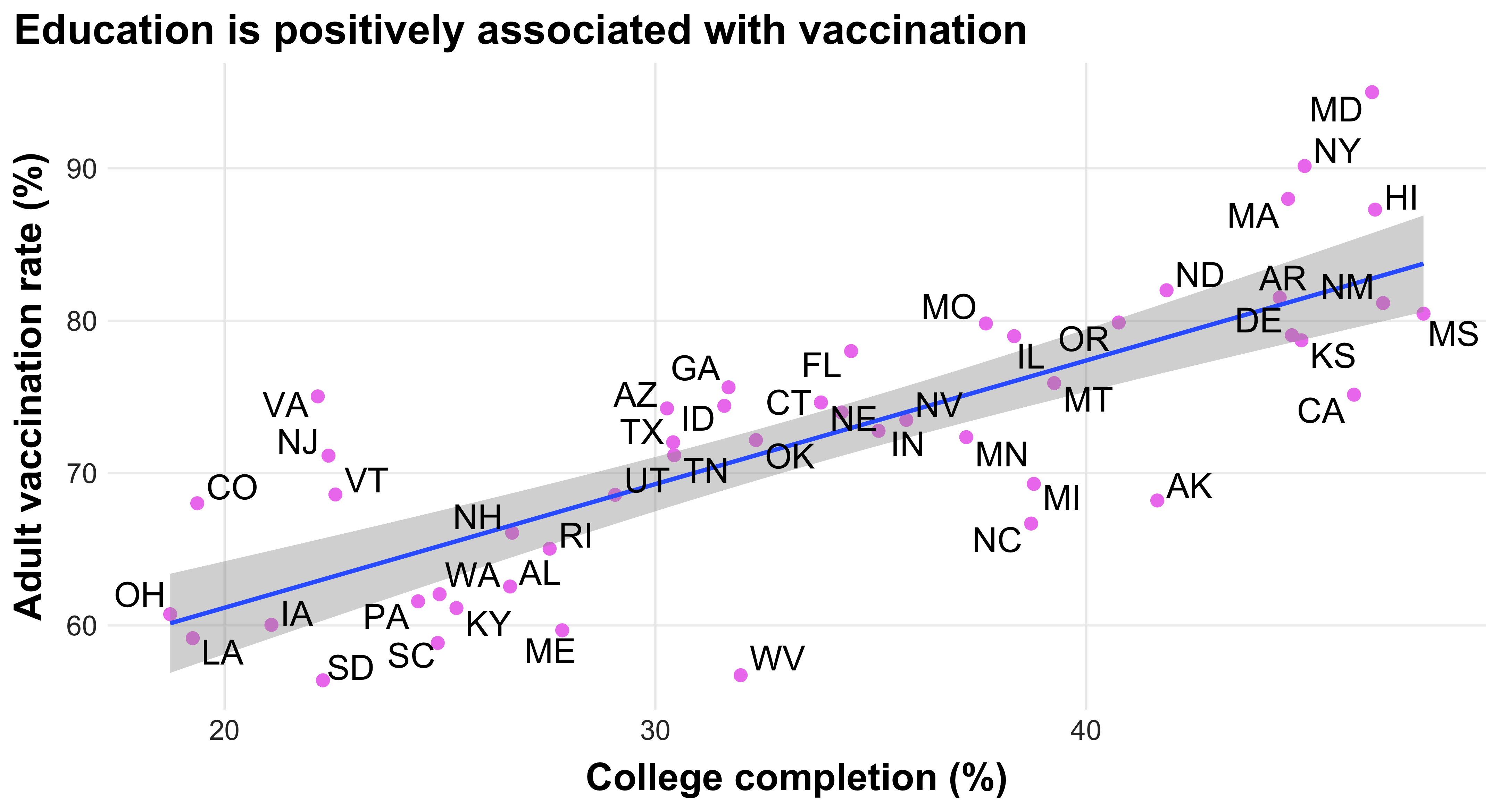

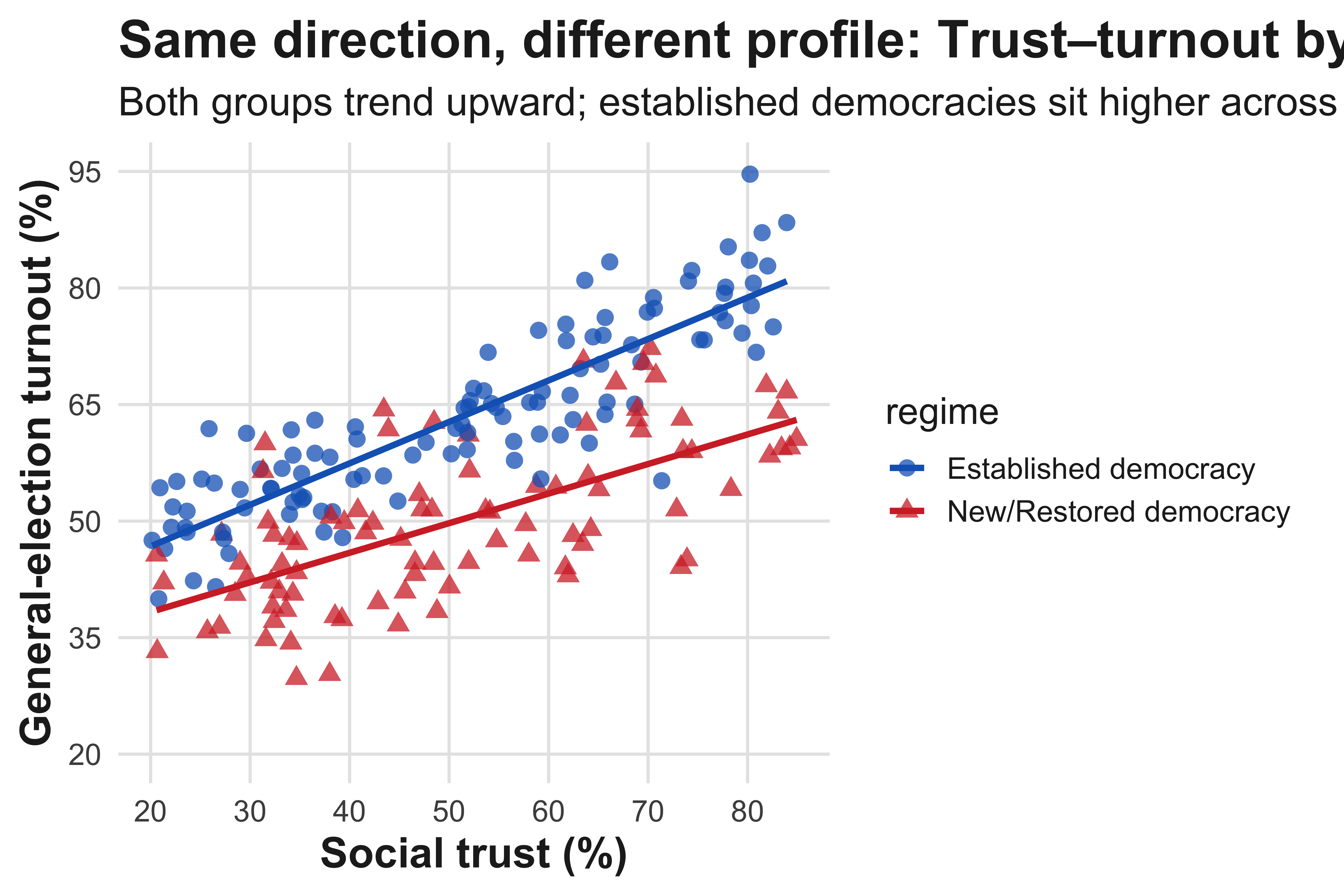

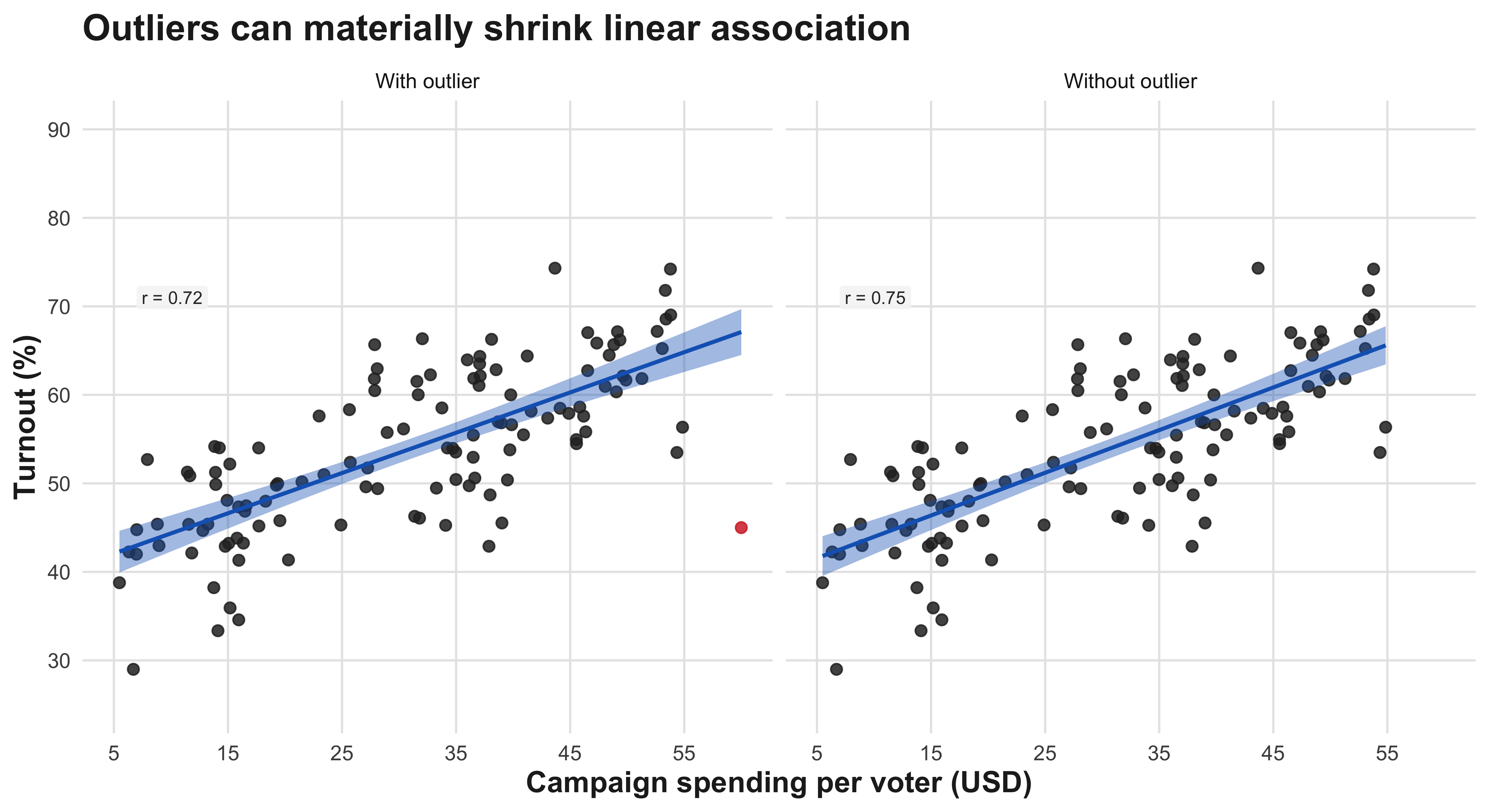

- Two quantitative: scatterplot.

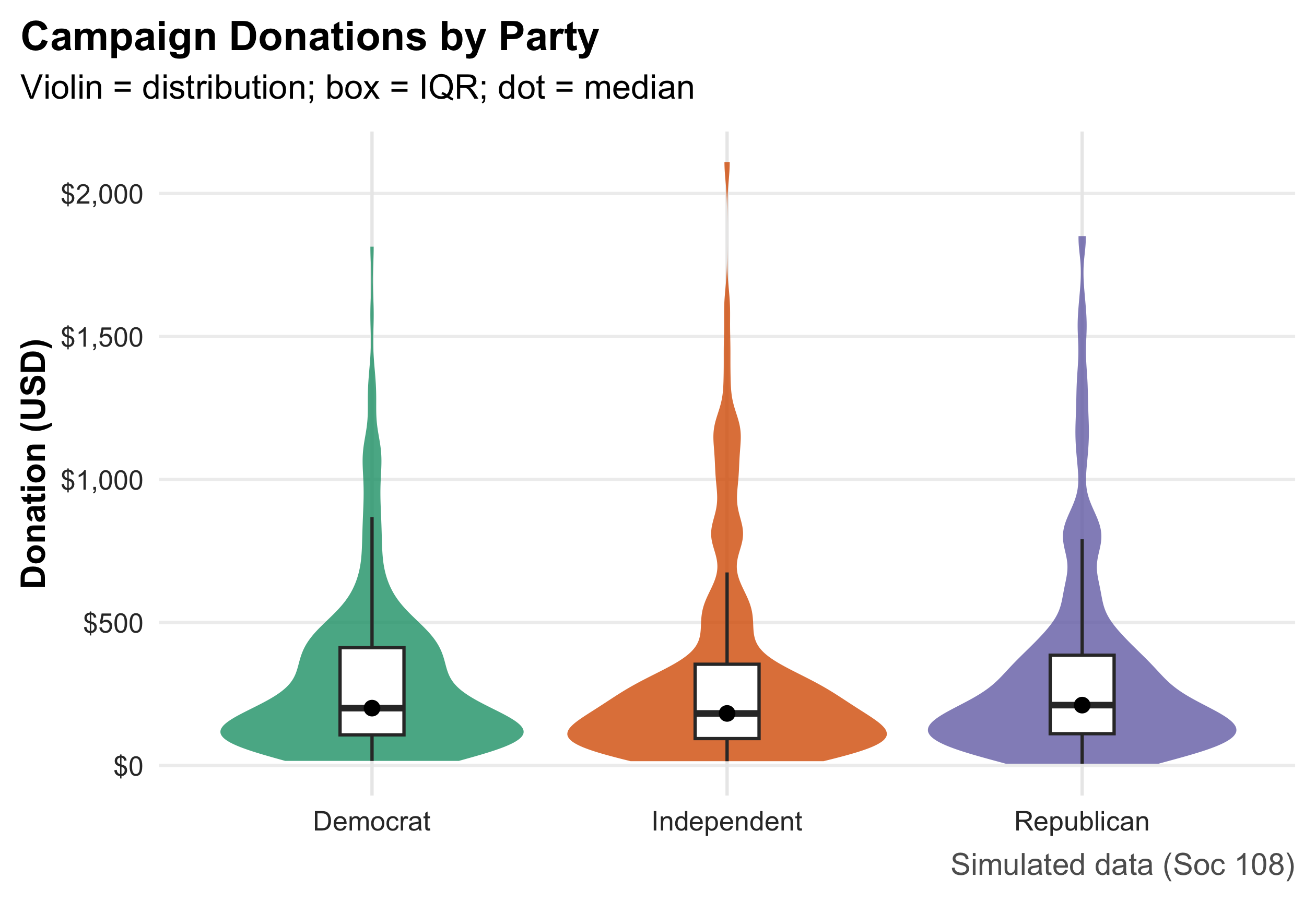

- One quantitative across groups: box/violin.

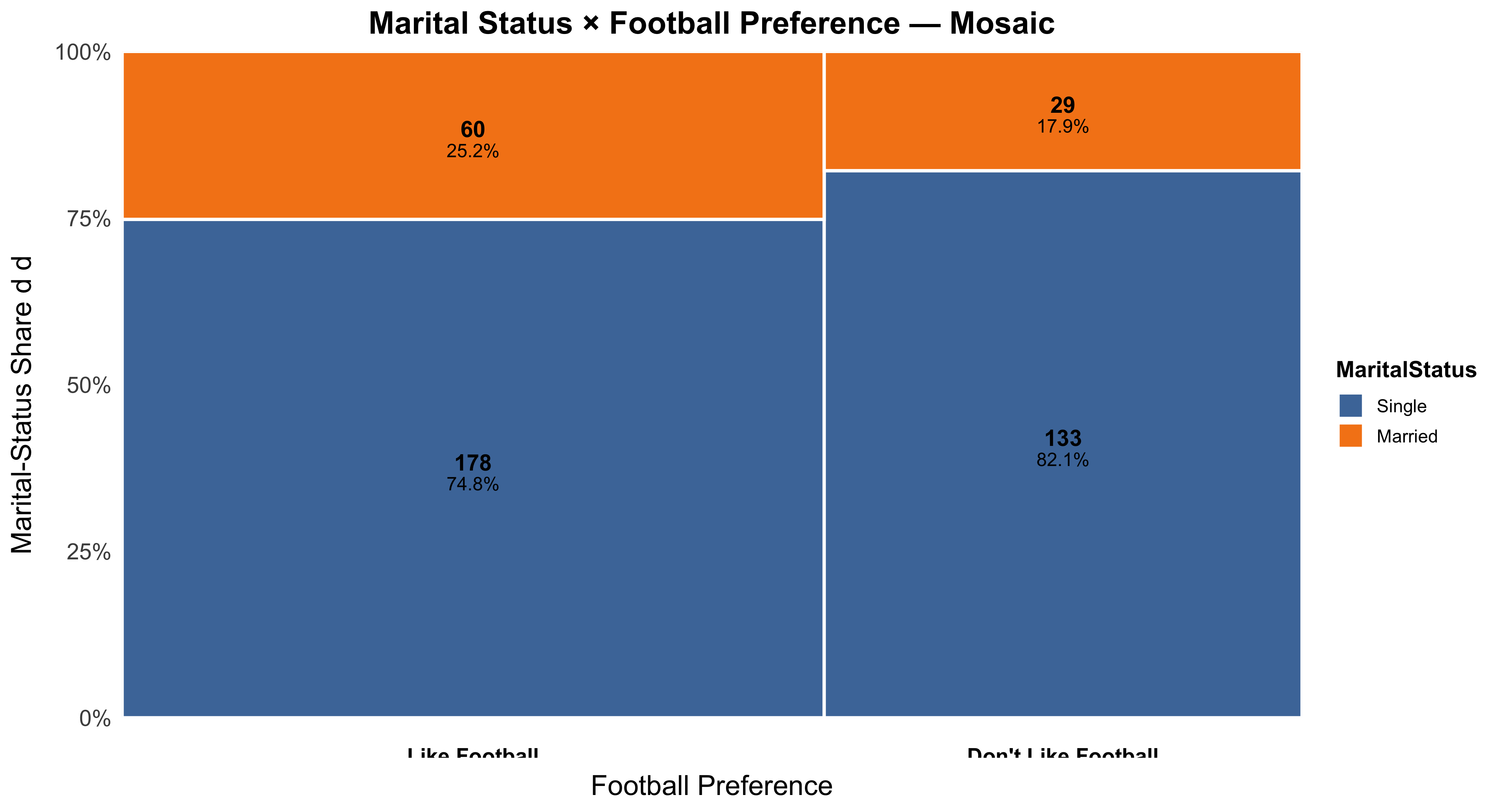

- Categorical across groups: contingency/mosaic.

2.16 A Small, Reproducible Example Dataset

n <- 600

ps <- tibble(

respondent = 1:n,

state = sample(state.abb, n, TRUE),

party = fct_infreq(sample(c("Democrat","Independent","Republican"), n, TRUE, prob = c(.36,.28,.36))),

ideology = rnorm(n, mean = ifelse(party=="Democrat",-0.2, ifelse(party=="Republican",0.3,0)), sd = 0.9),

turnout = pmin(pmax(round(rbeta(n, 5, 3) * 100), 0), 100),

income_thou = round(rlnorm(n, log(60), 0.45)),

incumbent_contact = rbinom(n, 1, ifelse(party=="Democrat", .52, .48)),

method = sample(c("Door-to-Door","Phone"), n, TRUE, prob = c(.55,.45)),

donation = round(rlnorm(n, log(200), 1.1))

)

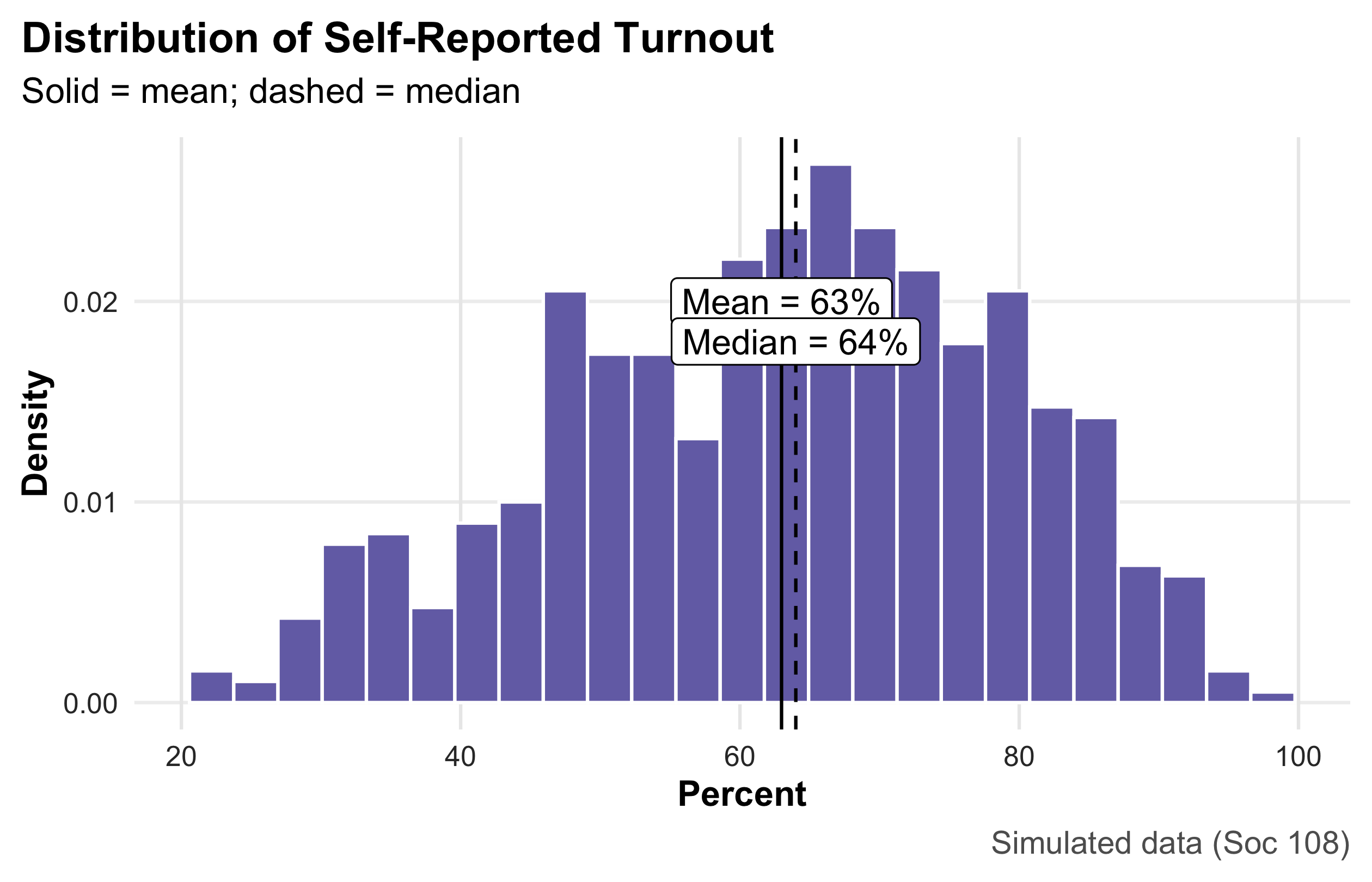

summary(ps) respondent state party ideology

Min. : 1.0 Length:600 Democrat :225 Min. :-2.75901

1st Qu.:150.8 Class :character Republican :223 1st Qu.:-0.59832

Median :300.5 Mode :character Independent:152 Median : 0.01644

Mean :300.5 Mean : 0.01598

3rd Qu.:450.2 3rd Qu.: 0.62120

Max. :600.0 Max. : 2.70922

turnout income_thou incumbent_contact method

Min. :21.00 Min. : 17.00 Min. :0.0 Length:600

1st Qu.:51.75 1st Qu.: 43.00 1st Qu.:0.0 Class :character

Median :64.00 Median : 59.50 Median :0.5 Mode :character

Mean :62.98 Mean : 64.51 Mean :0.5

3rd Qu.:75.00 3rd Qu.: 80.25 3rd Qu.:1.0

Max. :97.00 Max. :216.00 Max. :1.0

donation

Min. : 6.0

1st Qu.: 105.0

Median : 212.0

Mean : 383.8

3rd Qu.: 426.2

Max. :11138.0 Party Identification

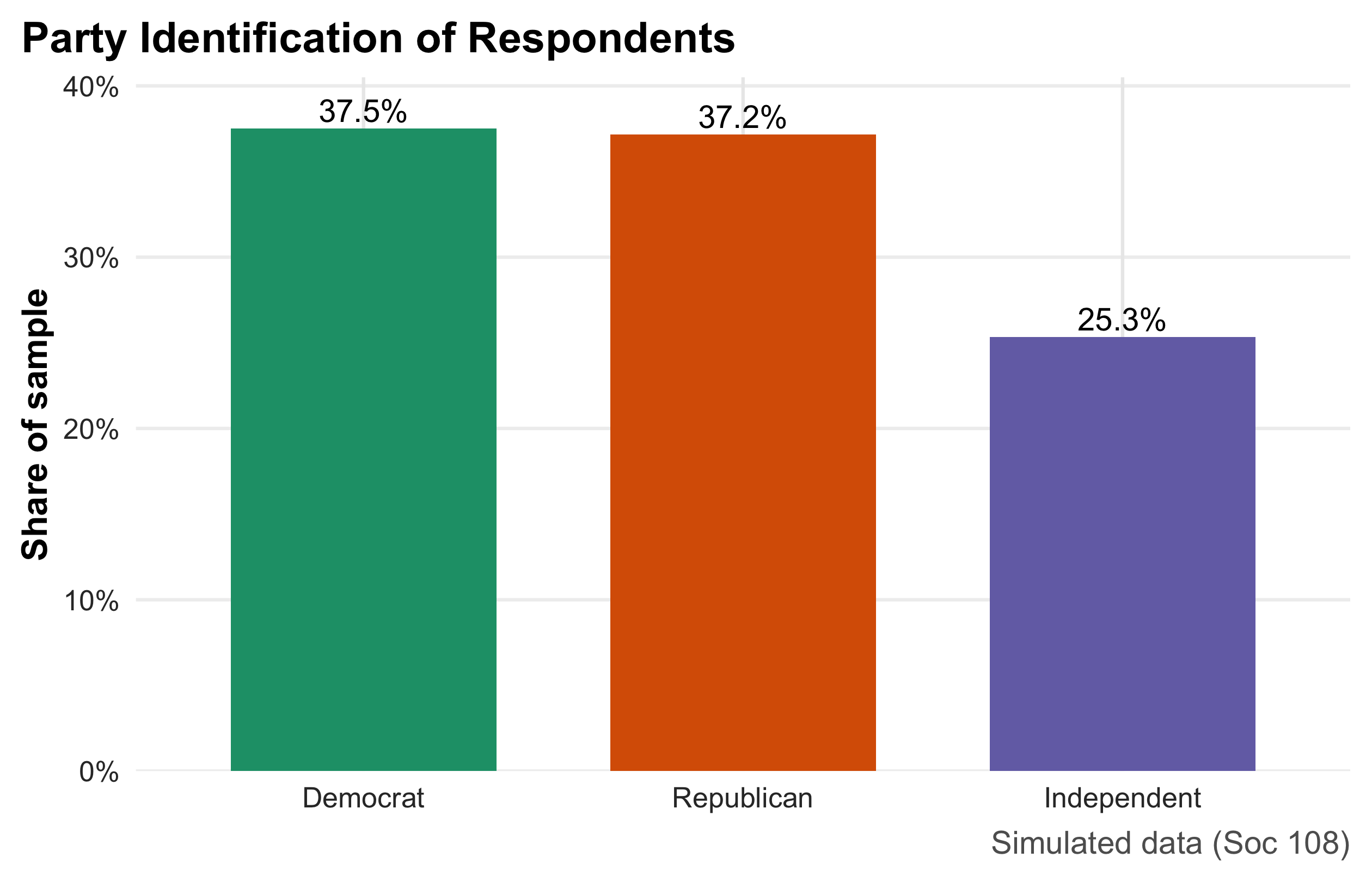

This bar chart summarizes a categorical variable by displaying the share of respondents in each party category. Heights encode proportions (y-axis in percent), and direct labels above the bars make exact values easy to read without consulting the axis. Because bars compare parts of a whole, the figure immediately communicates relative size (e.g., whether Democrats and Republicans are similarly common and how Independents compare). This is the preferred display for nominal categories; pies are harder to compare precisely, and frequency tables lack visual punch. Interpretation is purely descriptive—no uncertainty bands are shown—so readers should treat the reported shares as sample estimates that would vary across samples.

party_share <- ps |> count(party) |> mutate(pct = n/sum(n))

party_share |>

ggplot(aes(party, pct, fill = party)) +

geom_col(width = 0.7, show.legend = FALSE) +

geom_text(aes(label = percent(pct, .1)), vjust = -0.3, size = 4.1) +